The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

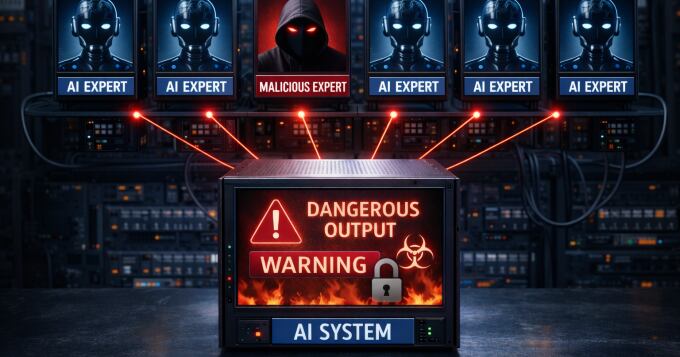

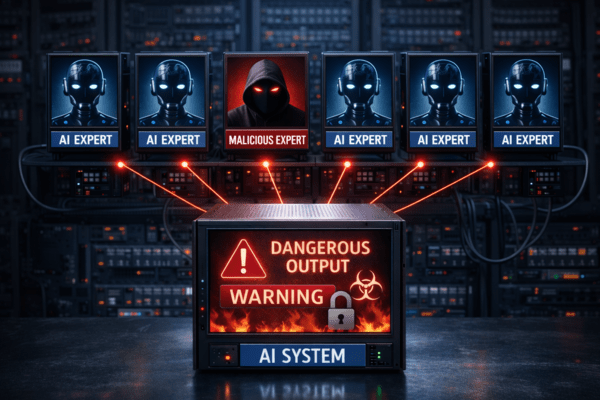

KAIST researchers have identified a major security vulnerability in Mixture-of-Experts (MoE) architectures, which are used by large language models such as Google Gemini. Their study shows that introducing a single malicious expert model can raise the rate of harmful responses from 0% to 80%, severely compromising model safety without a noticeable loss in performance. [AI generated]