The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

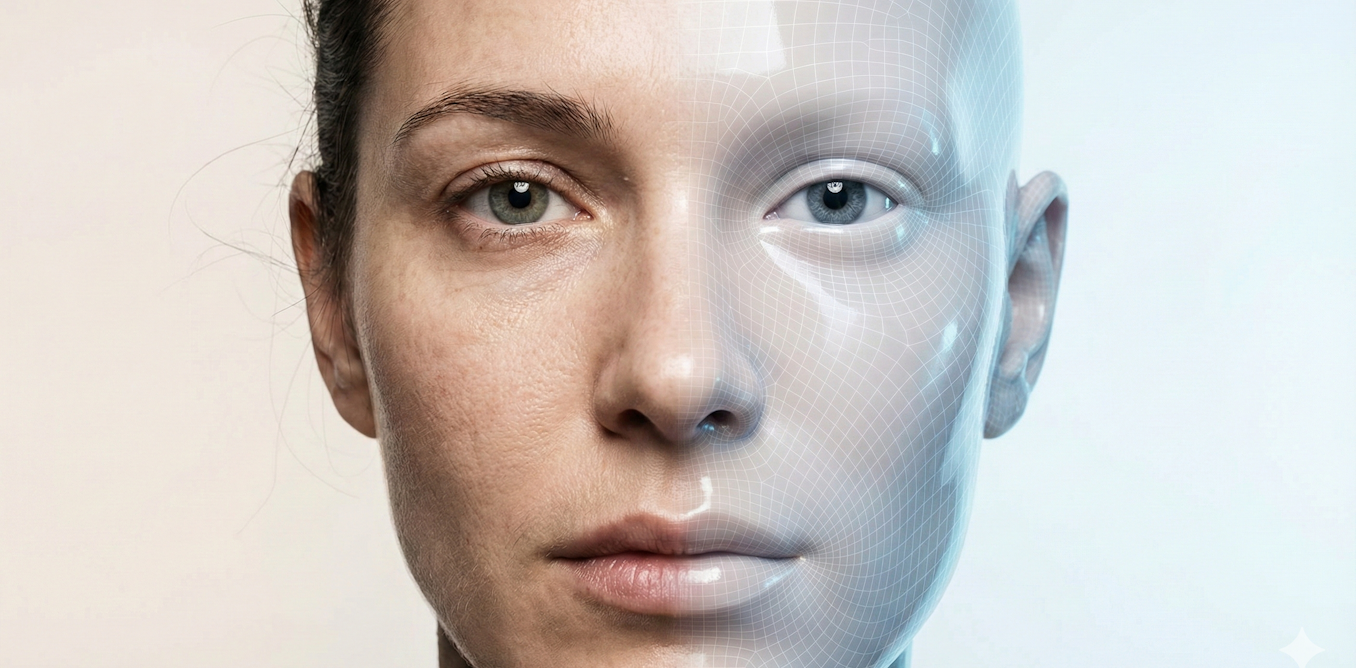

In 2025, AI-generated deepfakes (realistic synthetic faces, voices, and performances) became highly convincing and widespread, and their use in misinformation, harassment, and financial scams surged. The volume of deepfakes grew nearly 900%, making it difficult for ordinary people and institutions to distinguish real media from fake and causing significant societal harm. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly discusses AI systems (deepfake generation models, voice cloning, large language models) that have been used to create synthetic media causing real harm, such as financial scams and misinformation campaigns. These harms fall under harm to communities and individuals. The development and use of these AI systems have directly led to the harms, which qualifies this as an AI Incident. Although the article also discusses future risks, the presence of realized harm takes precedence, so the classification is AI Incident rather than hazard or complementary information. [AI generated]