The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

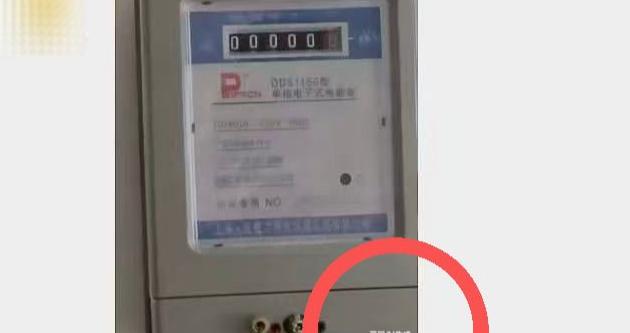

In Hubei, China, a plaintiff's representative used an AI image generator to create fake photographic evidence in a rental dispute and submitted the images, which still carried visible AI watermarks, to the court. The judge detected the forgery, admonished the representative, and rejected the falsified evidence. The case highlights the risk of AI misuse in legal proceedings. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves the use of an AI system to generate forged photographic evidence that was submitted in a legal proceeding. The AI-generated evidence was intended to mislead the court and disrupted the judicial process, which violates legal rights and judicial order. The harm is realized and directly linked to the AI system's use in fabricating evidence. Hence, the event meets the criteria for an AI Incident: a violation of legal rights and harm to the judicial system. [AI generated]