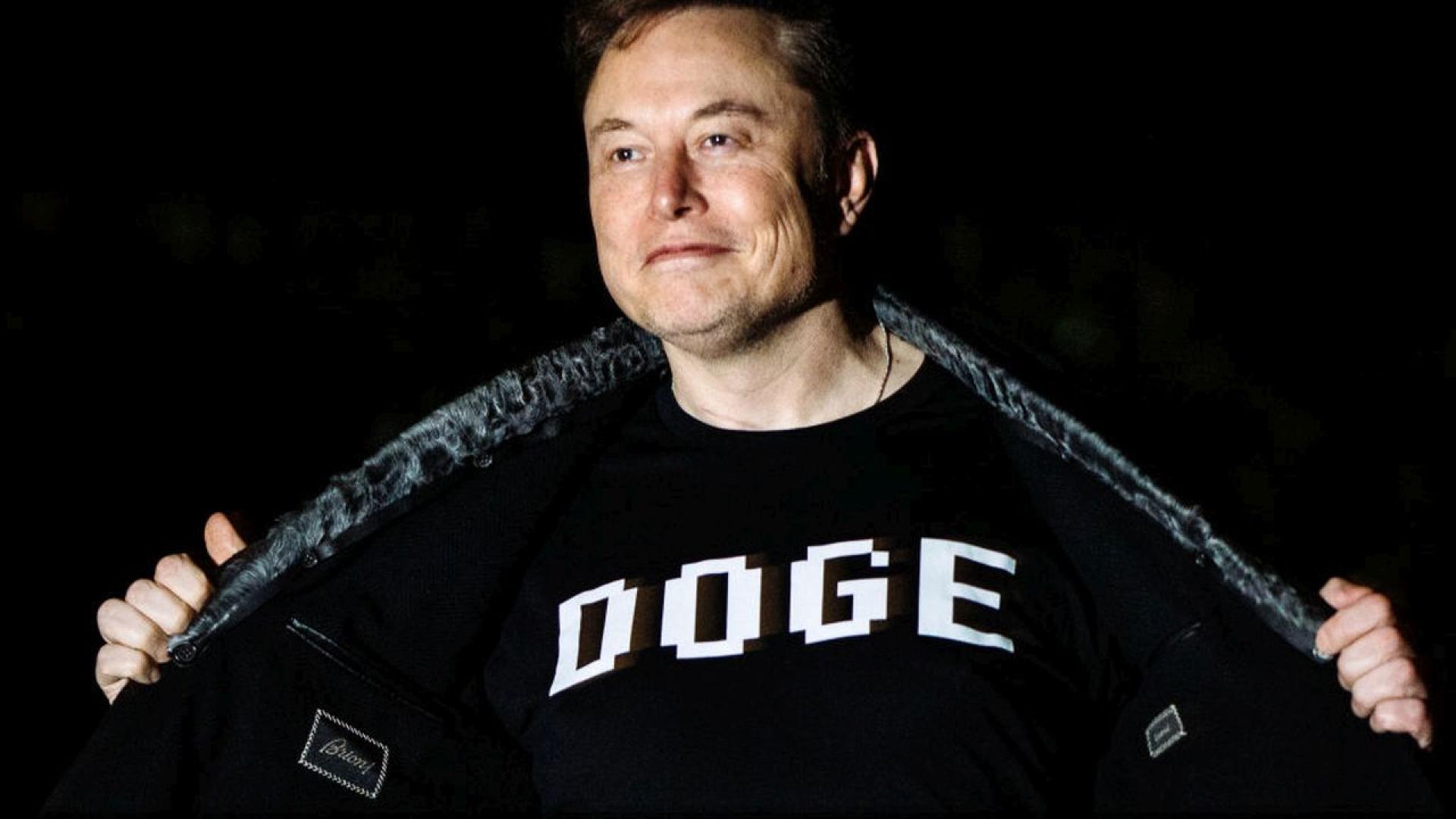

The article explicitly names Tesla FSD and Grok as the AI systems that controlled the vehicle autonomously for 2,732.4 miles across the US with zero human intervention, a result verified by a third party. This is direct use of AI systems producing a real-world outcome: the physical operation of a vehicle. The context is safety-critical, since a malfunction could have caused harm, but the article is celebratory and reports no harm or accident. Because no harm occurred and the event is a capability demonstration and milestone achievement, it does not qualify as an AI Incident. The article also does not describe any plausible future harm or risk beyond the demonstration itself, so it is not an AI Hazard. It mainly reports the AI system's successful use and technological progress, which is a significant development but not a harm or risk event. The classification is therefore Complementary Information: the article provides important context and an update on AI system deployment and capabilities without describing harm or a hazard.
