The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

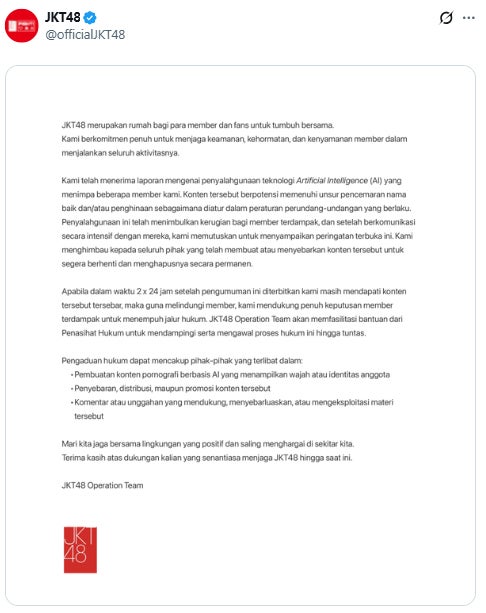

Indonesian idol group JKT48 publicly warned against the malicious use of AI to create and spread sexually explicit, defamatory images of its members without consent. The group demanded immediate removal of such content and threatened legal action to protect affected members' rights and reputations.[AI generated]