The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

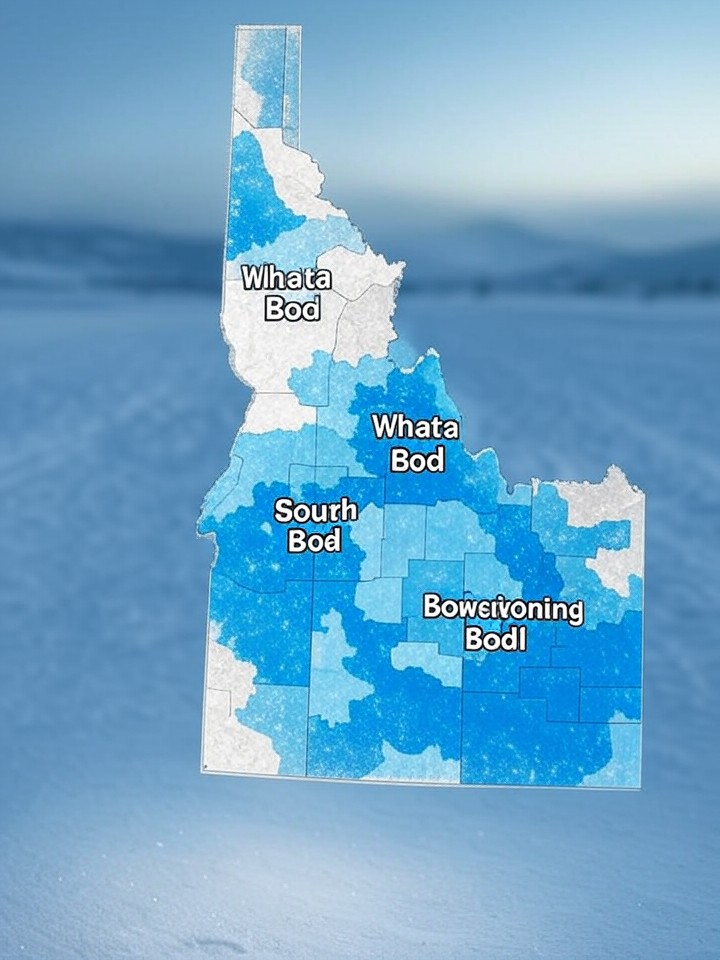

The U.S. National Weather Service used generative AI to create weather maps that included fictional town names in Idaho, such as "Whata Bod" and "Orangeotild." The incident, which led to public misinformation and eroded trust, highlights the risks of unsupervised AI in critical government communications. The errors were later corrected. [AI generated]