The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

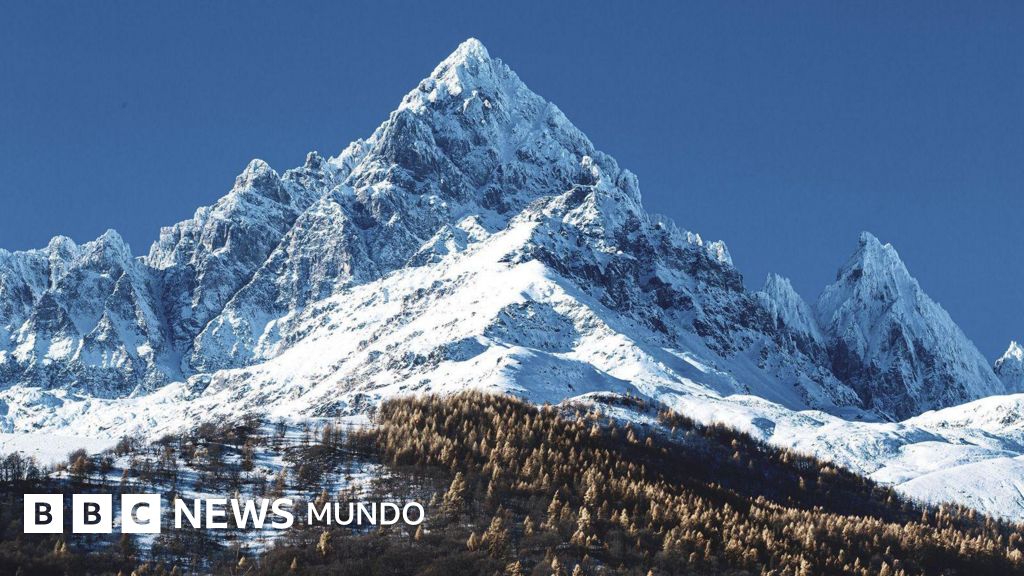

After months of unsuccessful searches by human teams, AI software analyzing drone images identified the body of missing Italian mountaineer Nicola Ivaldo in the Piedmont Alps. The system detected a red helmet in the snow, which allowed rescue teams to locate the climber's body. The case illustrates the critical role AI can play in search and rescue operations.[AI generated]