The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

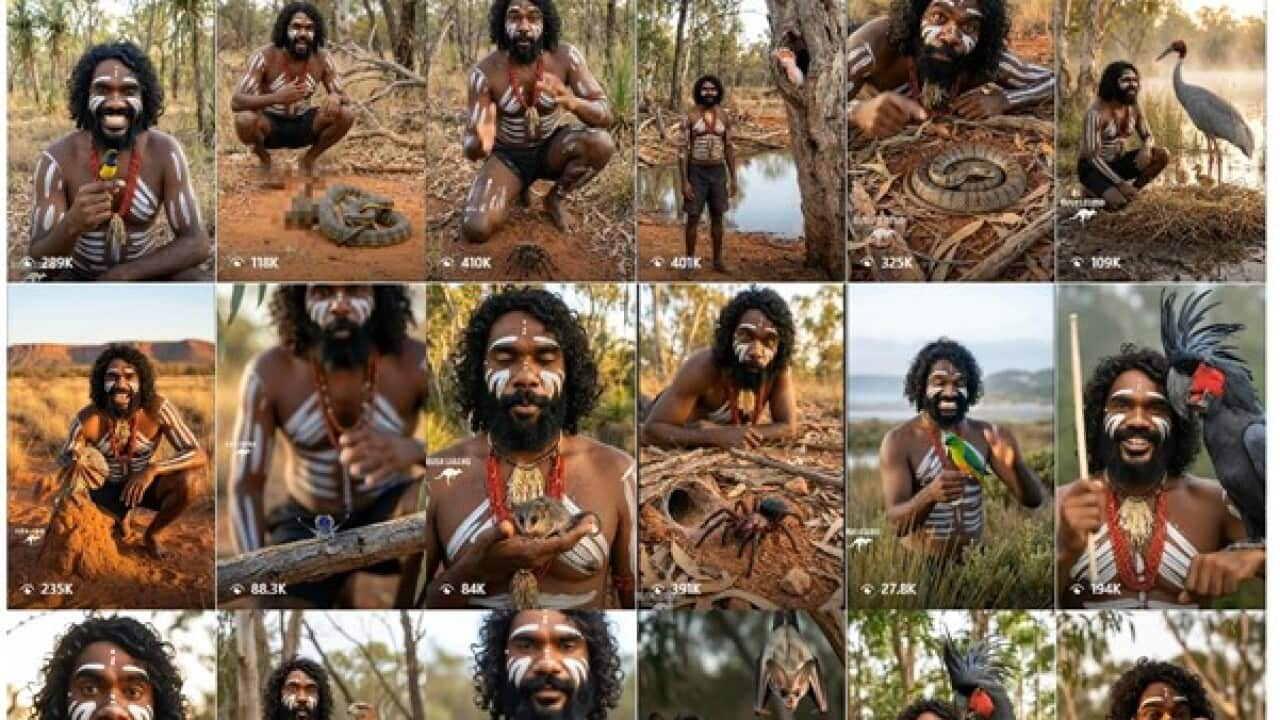

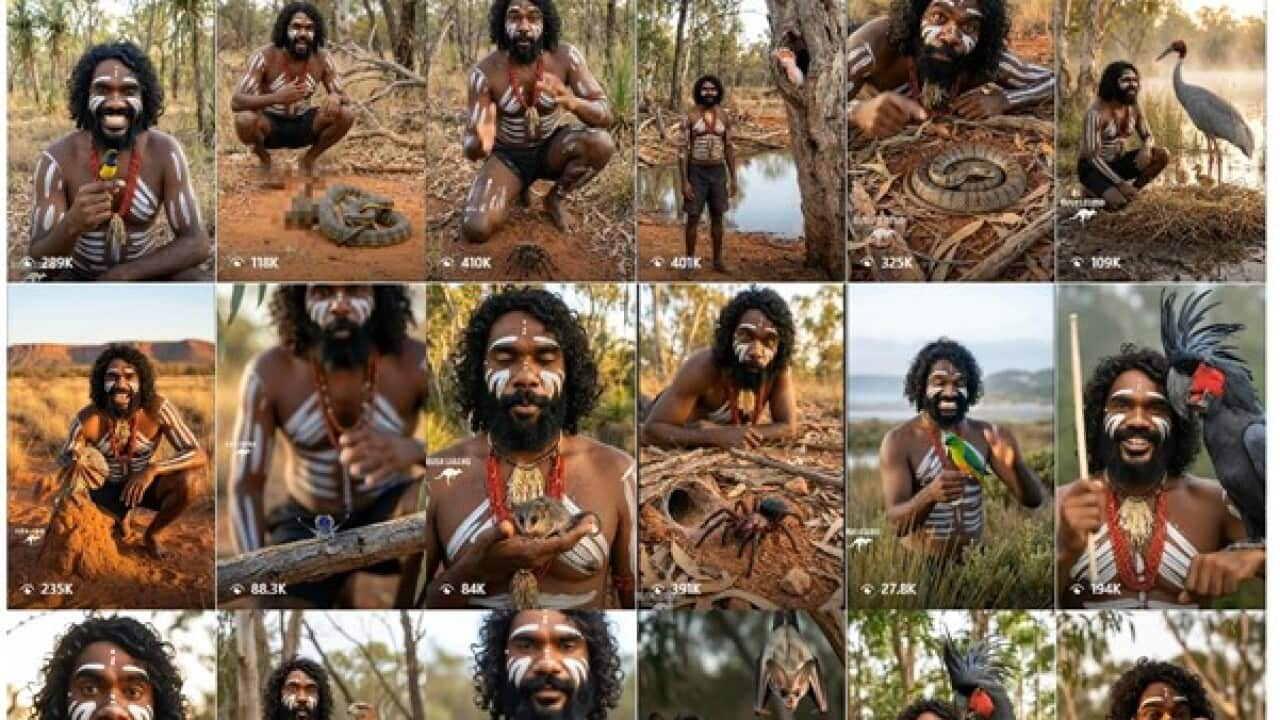

A South African content creator in New Zealand used AI to generate 'Jarren,' an Aboriginal-appearing avatar, for the 'Bush Legend' social media accounts. The AI persona, presented as an Indigenous wildlife expert, amassed hundreds of thousands of followers, drawing criticism for cultural appropriation, digital blackface, and misrepresentation of Aboriginal identity without community consent. [AI generated]

Why's our monitor labelling this an incident or hazard?

The AI system that generates the avatar 'Jarren' is central to the event, as it produces a fictional Indigenous persona that misrepresents Aboriginal identity and culture. Indigenous leaders and experts have raised concerns about the resulting cultural harm, misappropriation, and economic exploitation, which constitute violations of rights and harm to communities. The harm is ongoing and realized, not merely potential, because the avatar is actively used to generate content and profit. Hence, the event meets the criteria for an AI Incident under violations of human rights and harm to communities caused directly by the AI system's use. [AI generated]