The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

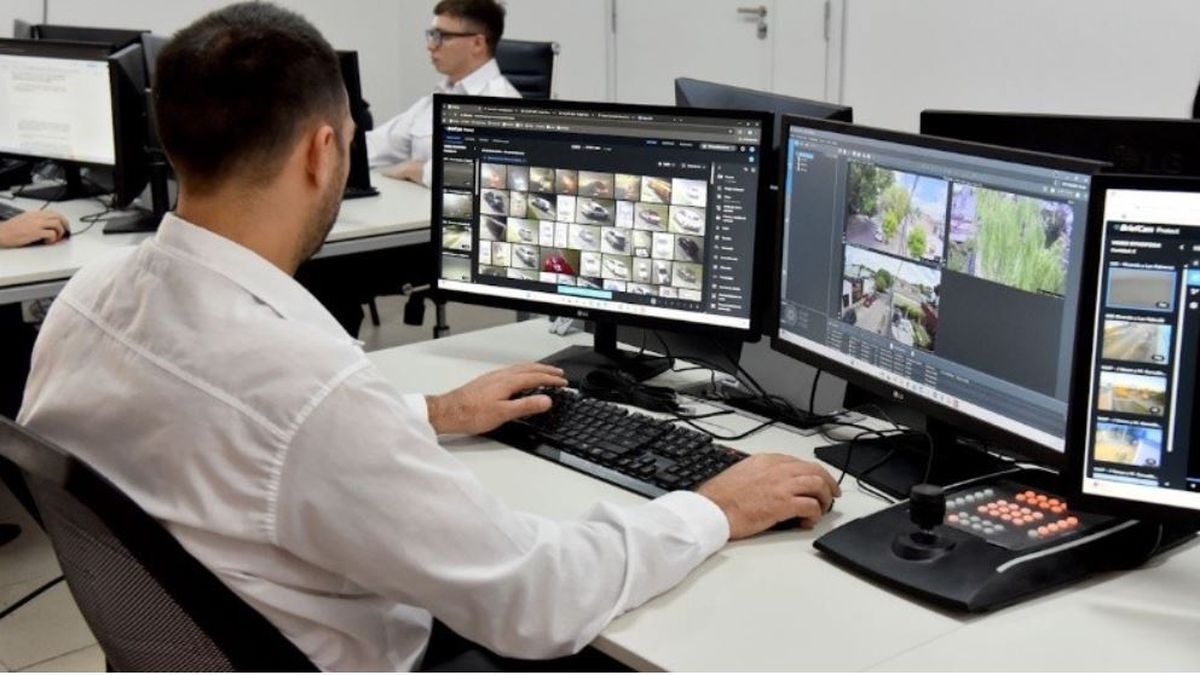

The government of Santa Fe, Argentina, is investing over $32 million to deploy an AI-powered video surveillance system, adding 2,000 new cameras with facial recognition and license plate reading capabilities. The system aims to modernize public security but raises potential privacy and human rights concerns. [AI generated]