The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

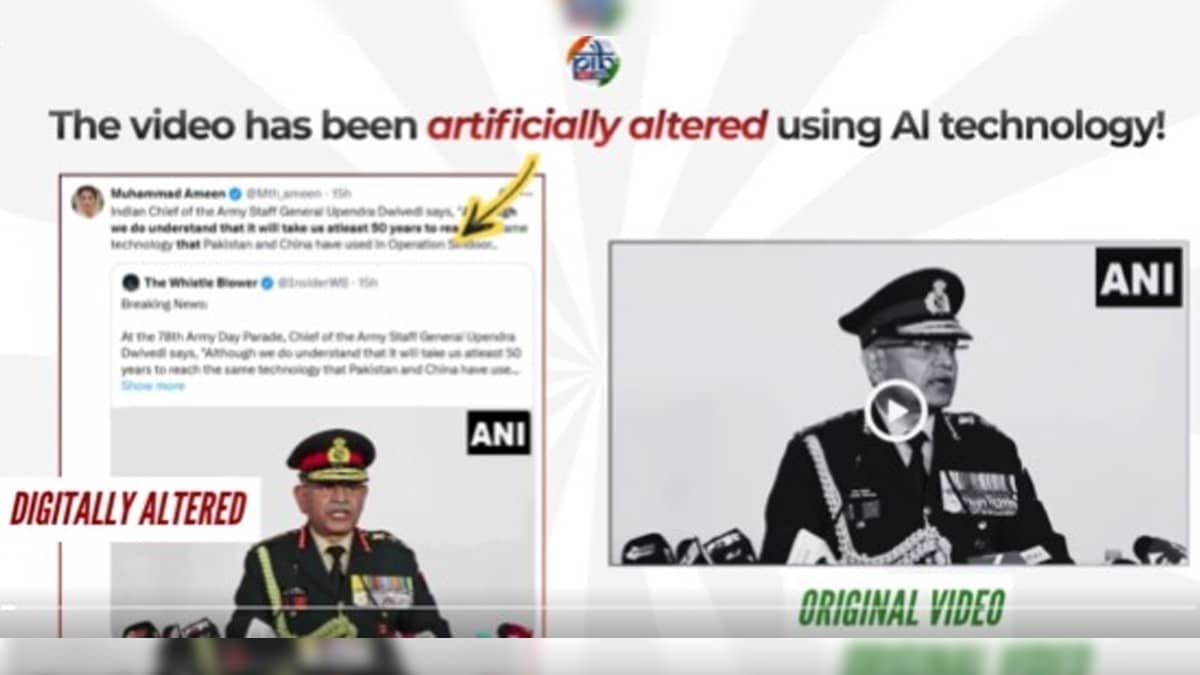

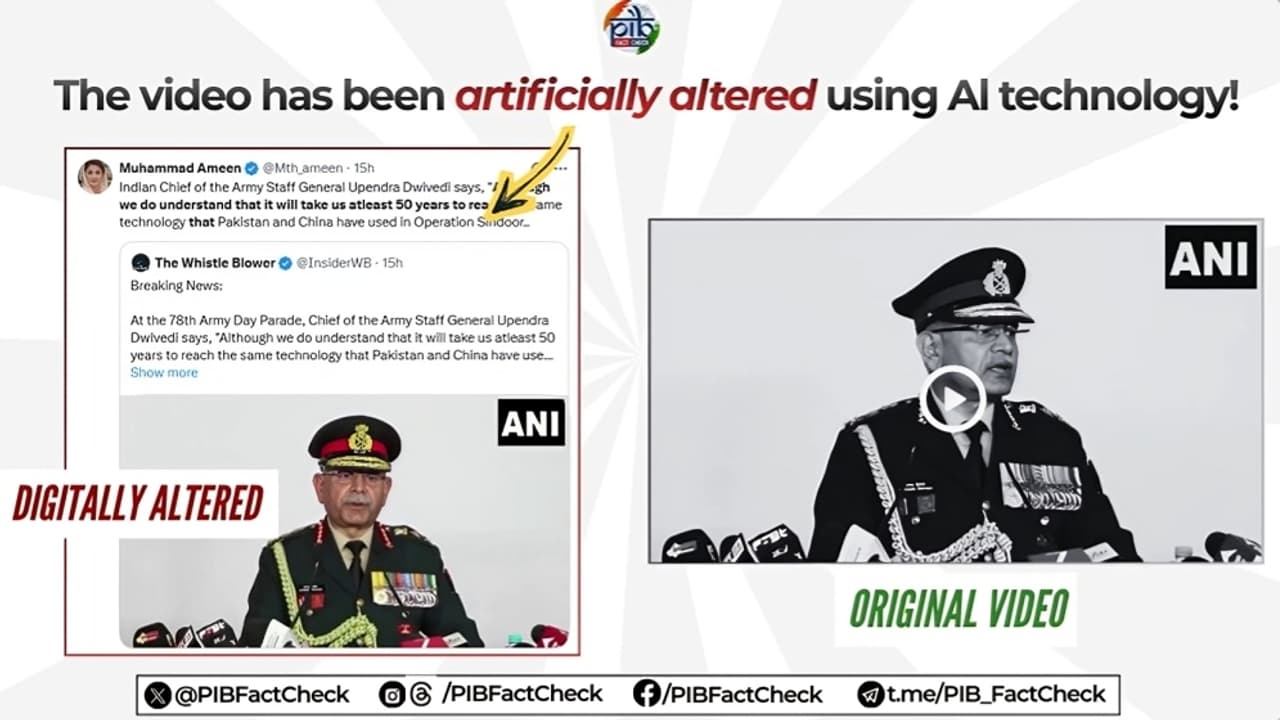

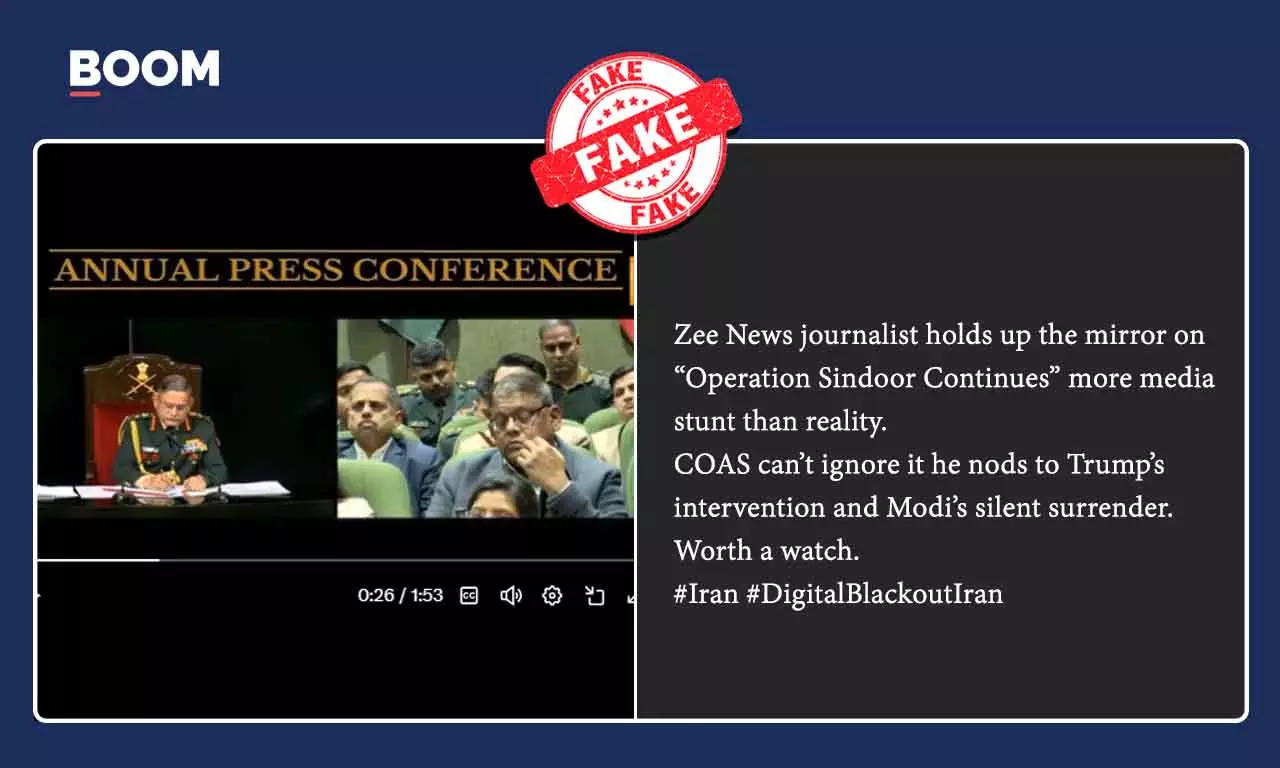

Multiple AI-generated deepfake videos falsely depicting Indian Army Chief General Upendra Dwivedi making controversial statements about military technology and Operation Sindoor have circulated on social media. These manipulated clips, spread by propaganda accounts, have been debunked by Indian authorities, highlighting the harm caused by AI-driven misinformation. [AI generated]