The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

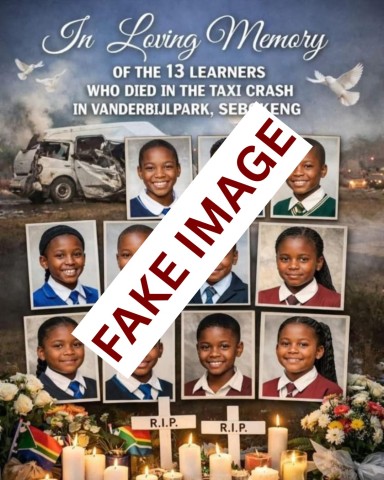

An AI-generated image falsely depicting the victims of the Vaal taxi crash was widely circulated online, causing emotional distress to grieving families and spreading misinformation. The Gauteng education department condemned the hoax, warning that such misuse of AI technology exacerbates trauma and violates the rights of affected communities. [AI generated]