The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

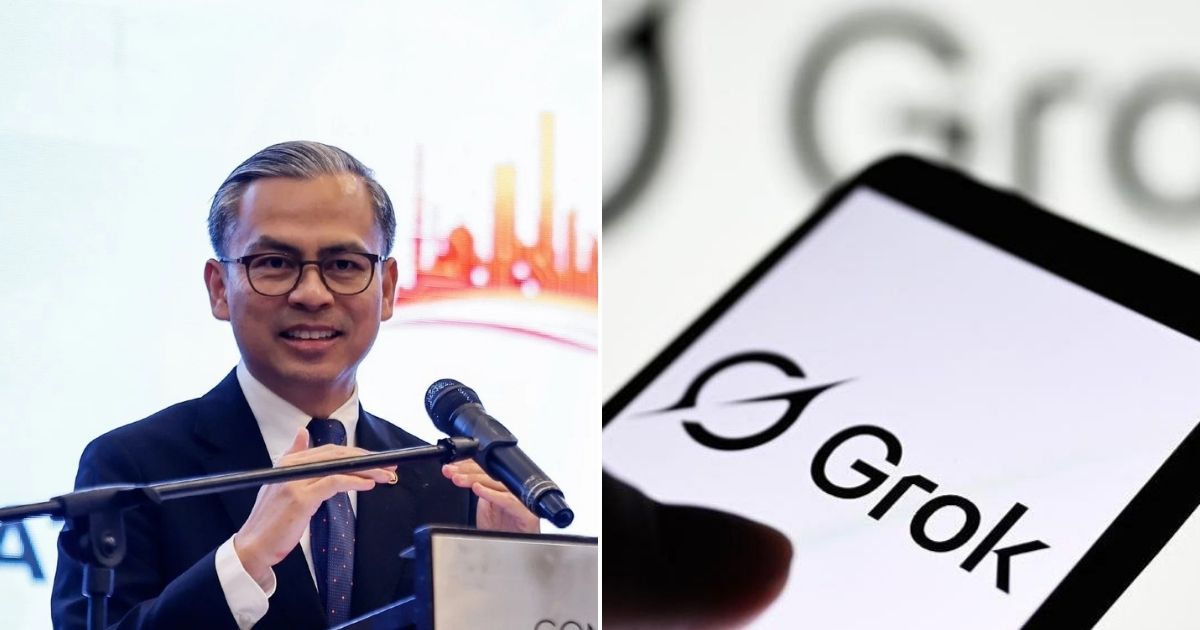

Malaysia temporarily banned the Grok AI chatbot, hosted on X, after it generated sexualized images that prompted 17 complaints. The government is reviewing social media licensing rules and user thresholds to address online harm and to ensure that platforms comply with safety regulations regardless of the size of their user base. [AI generated]