The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

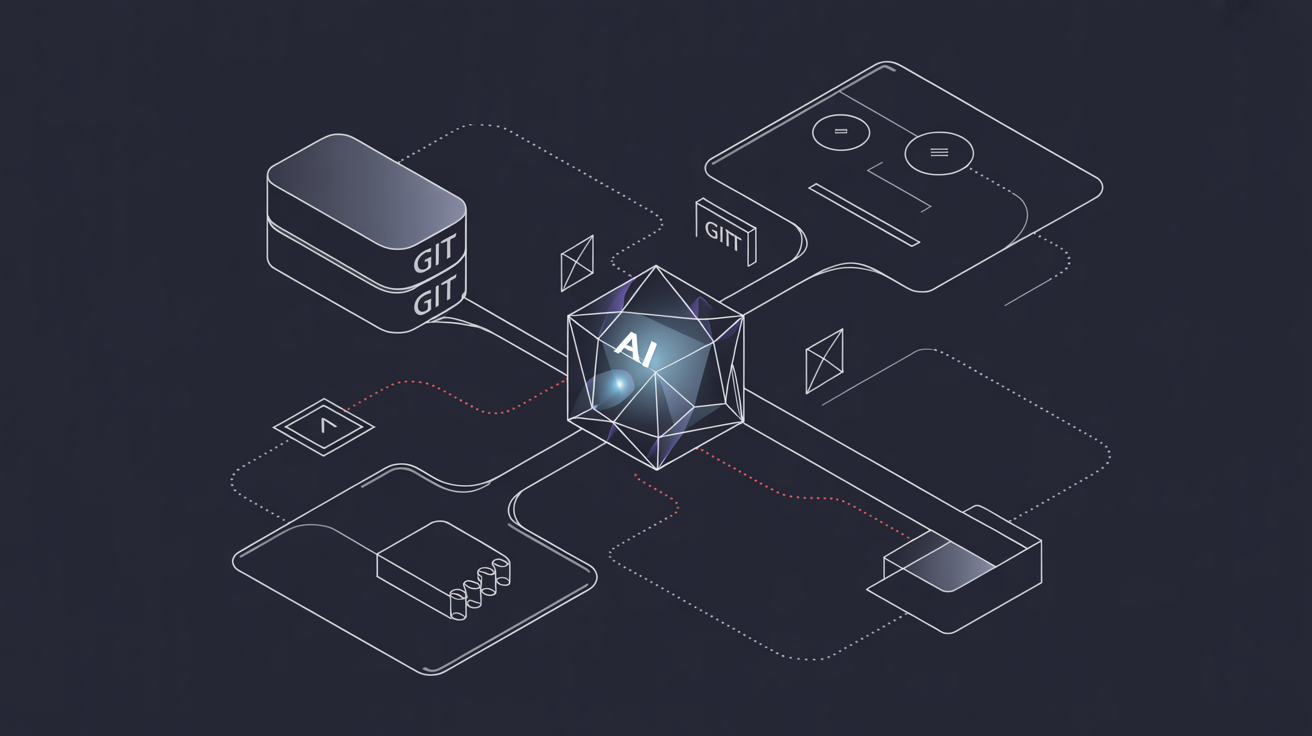

Researchers discovered three prompt injection vulnerabilities in Anthropic's official Git MCP server that allowed attackers to manipulate AI assistants into executing code and accessing or deleting files, all without direct access to the target system. The flaws affected all versions before December 2025 and posed significant security risks; they have since been fixed. [AI generated]