The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

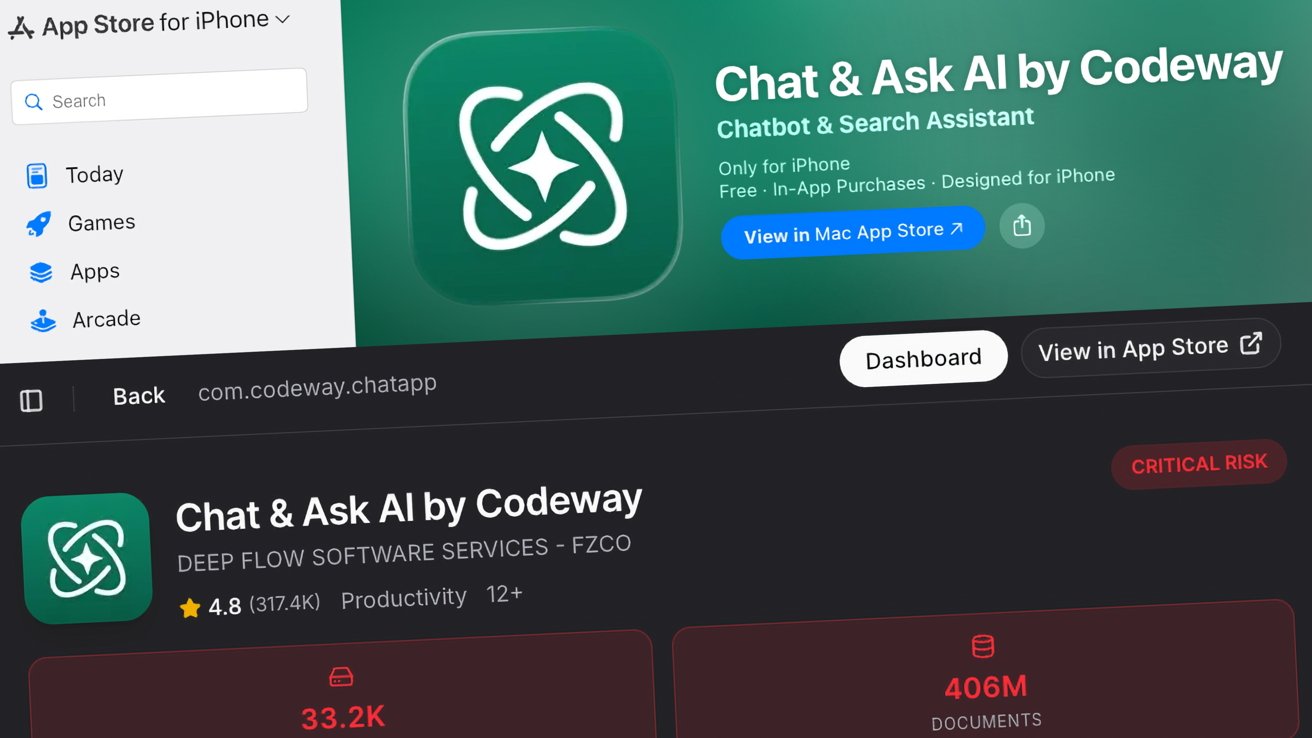

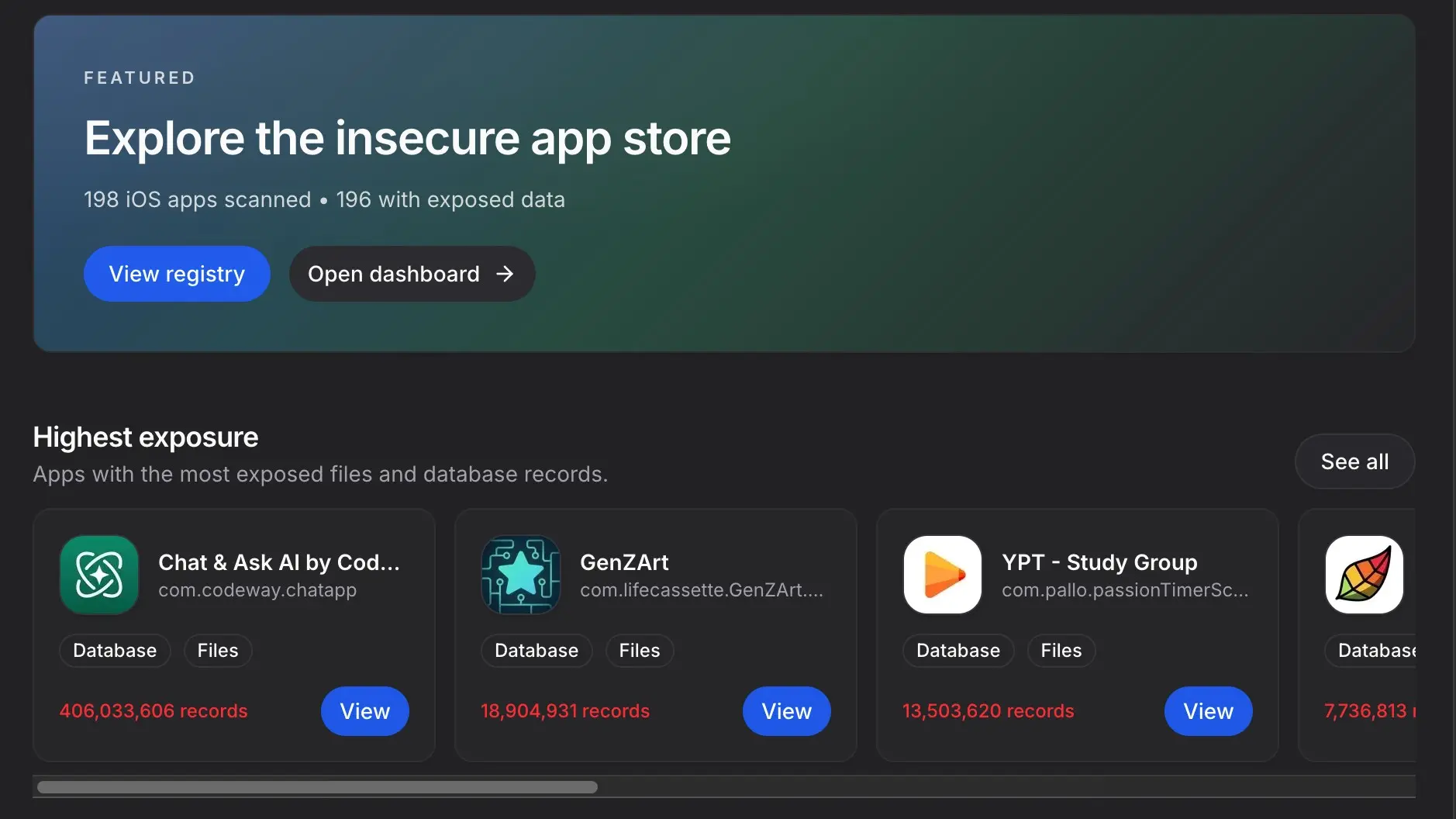

Security researchers at CovertLabs found that 196 of the 198 iOS apps they examined on the Apple App Store, most of them AI-powered, leaked sensitive user data, including names, email addresses, and chat histories. The worst offender, "Chat & Ask AI," exposed more than 406 million records belonging to over 18 million users as a result of poor security practices.[AI generated]