The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

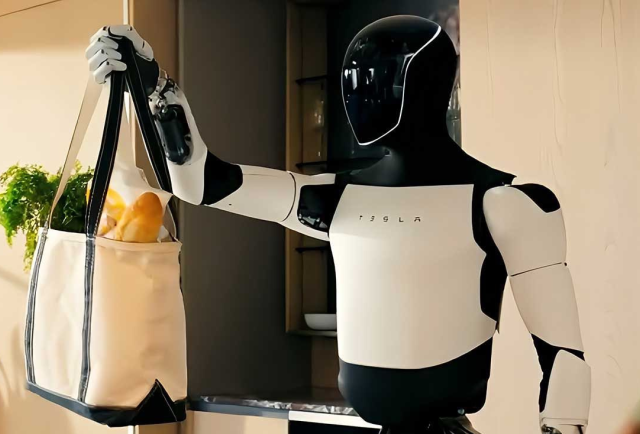

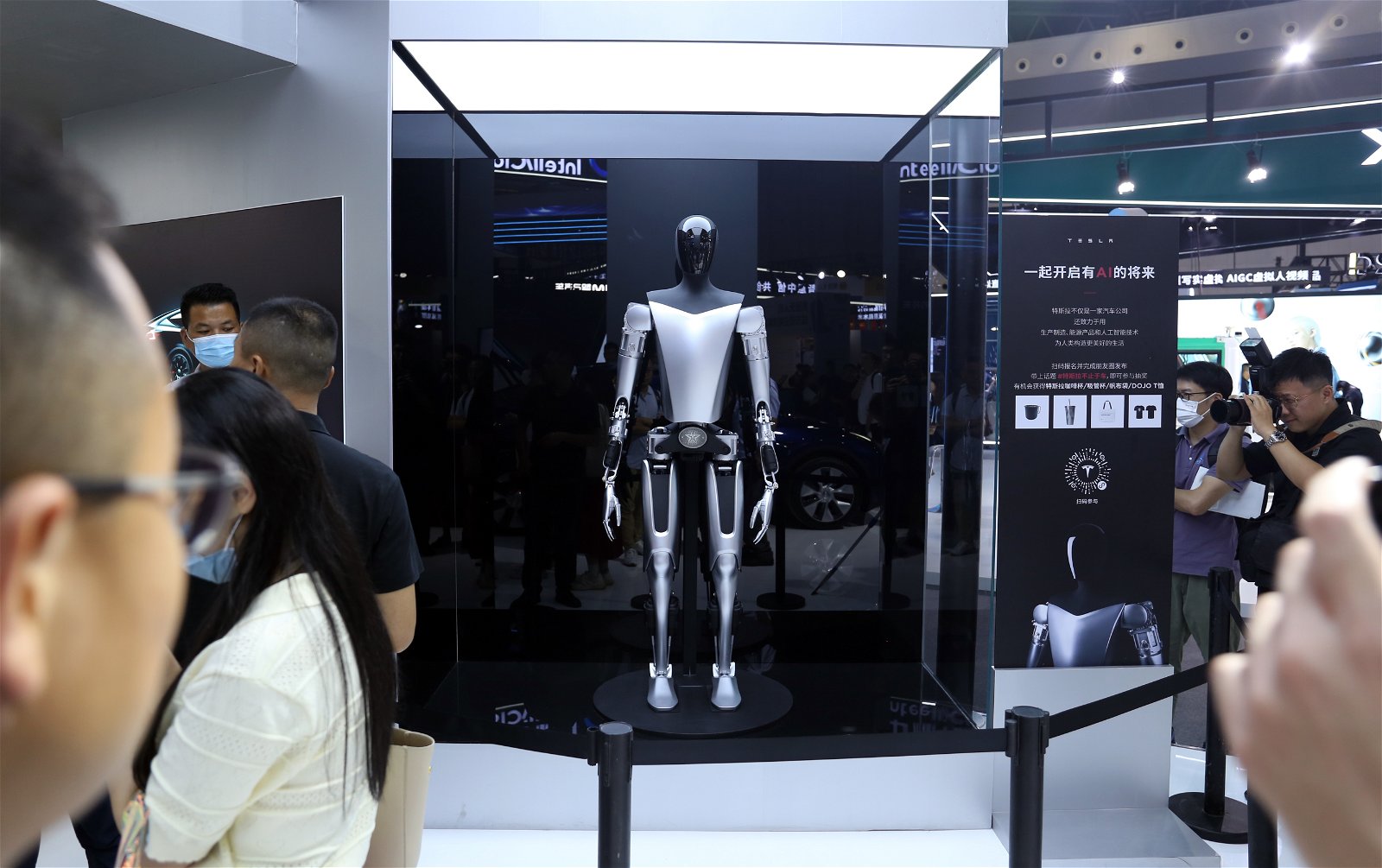

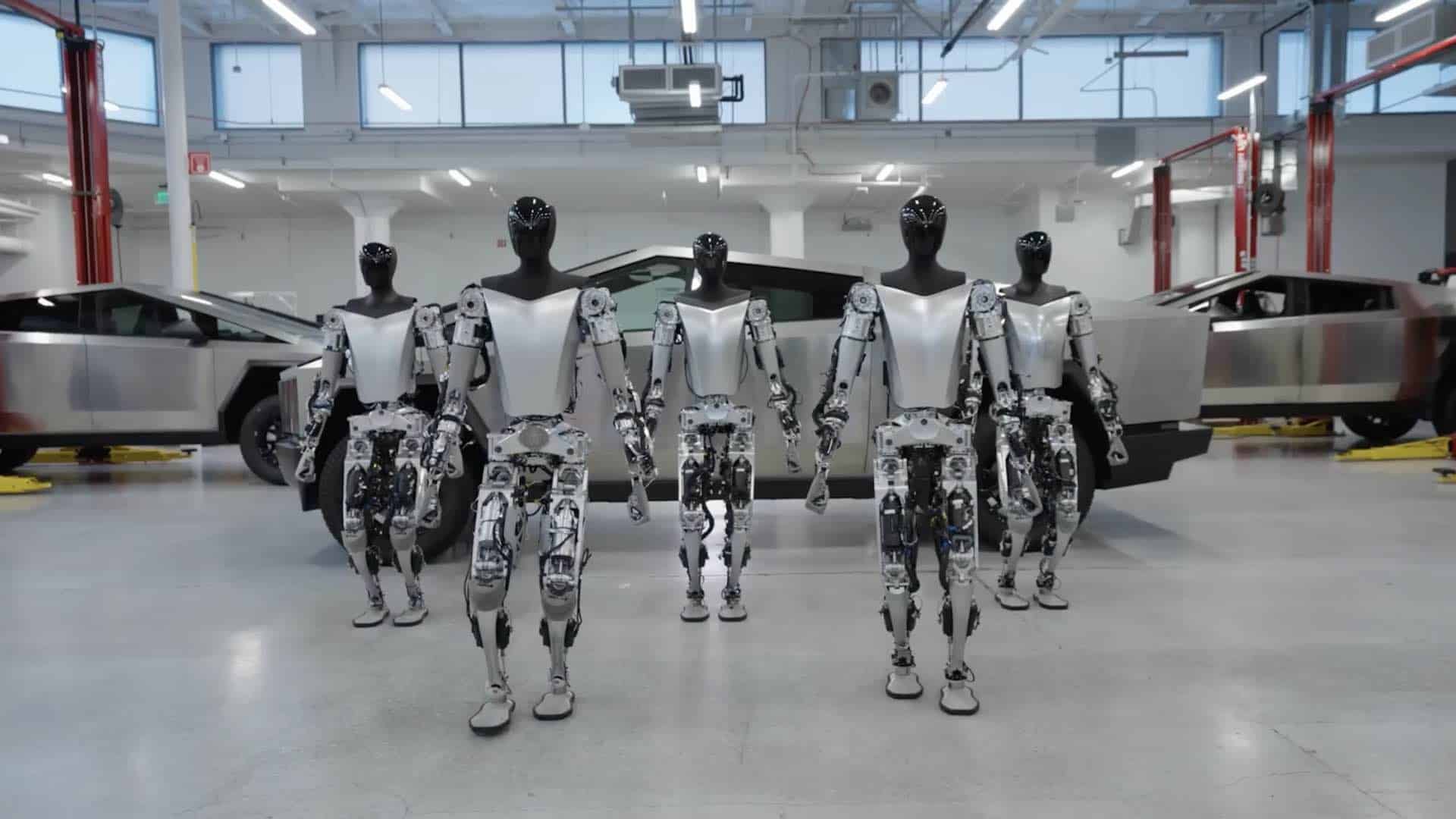

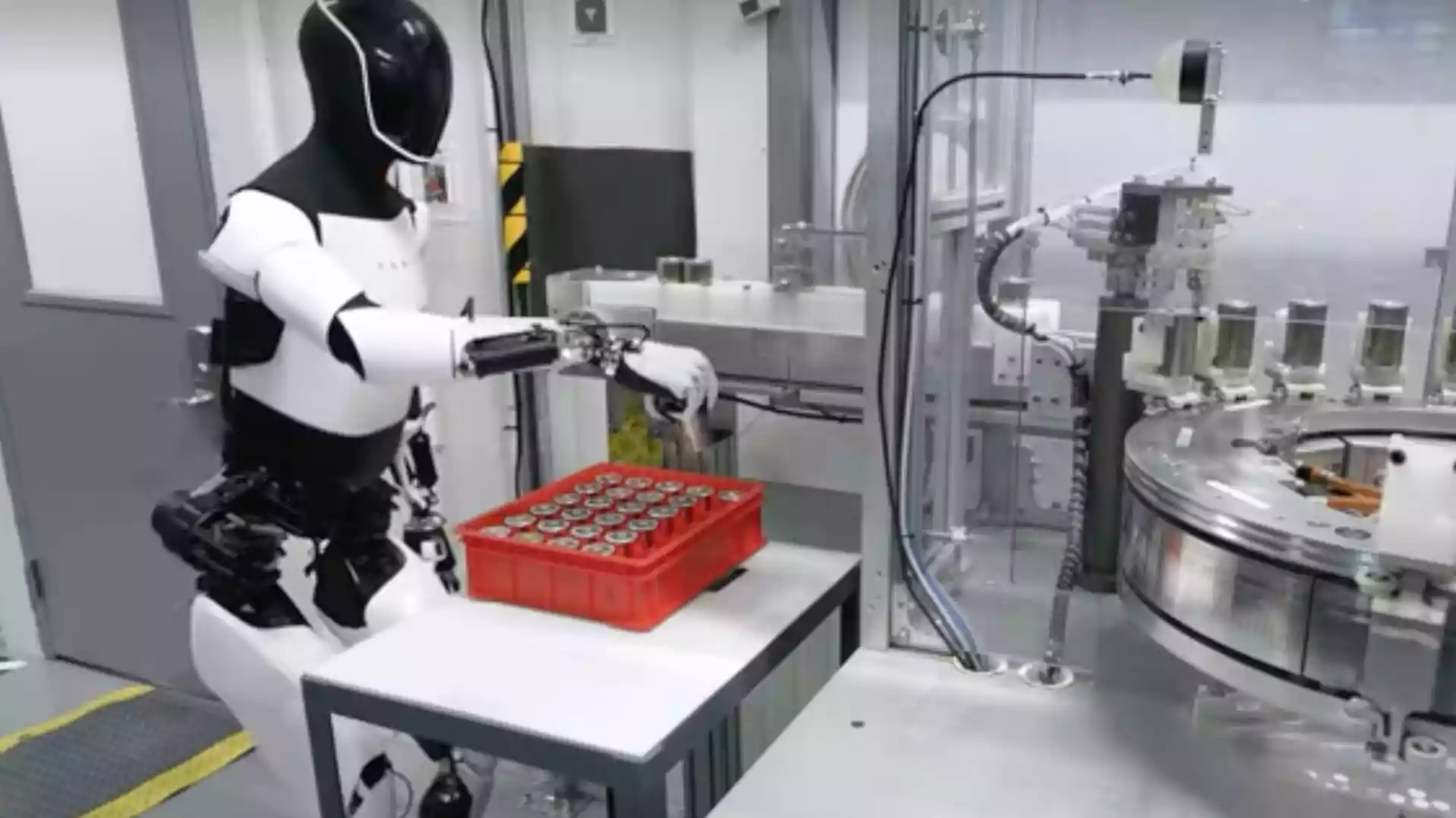

At the World Economic Forum in Davos, Elon Musk announced Tesla's intention to begin selling its AI-powered humanoid robots, Optimus, to the public by the end of 2027. Although no incidents have occurred yet, the planned deployment raises the prospect of future AI-related risks and societal impacts. [AI generated]
