The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

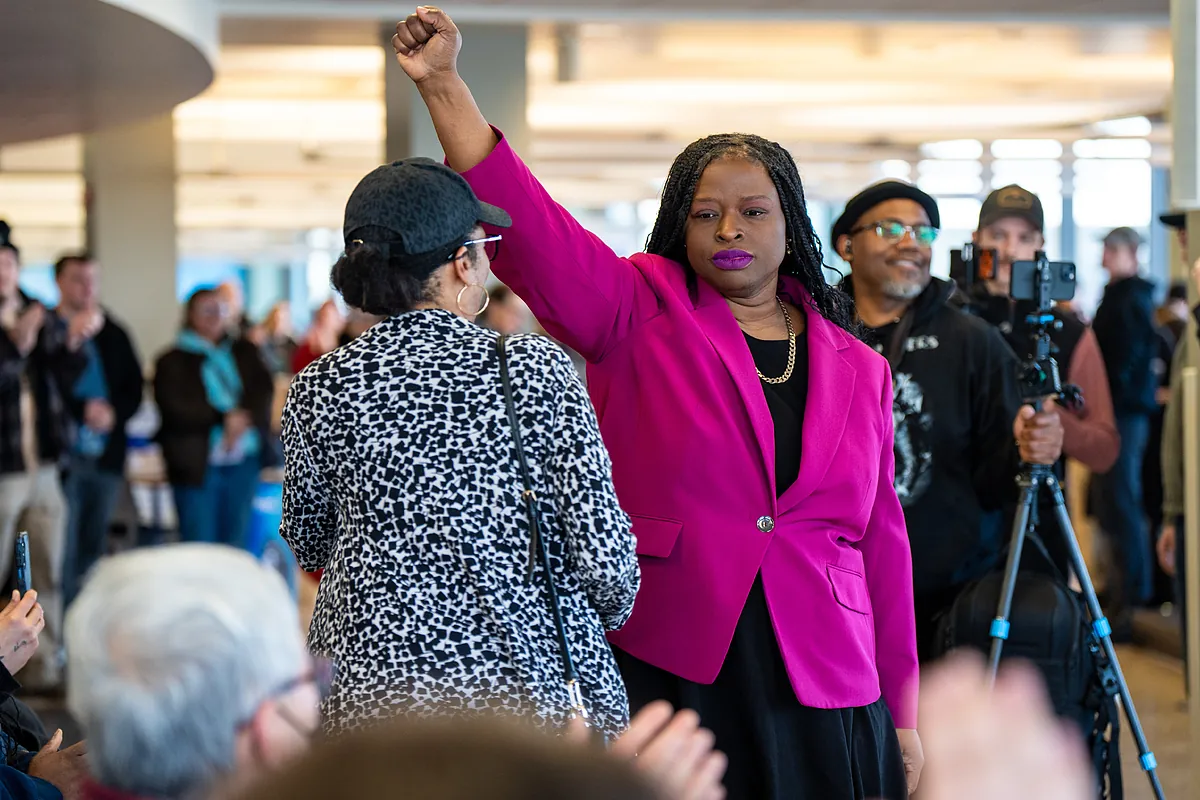

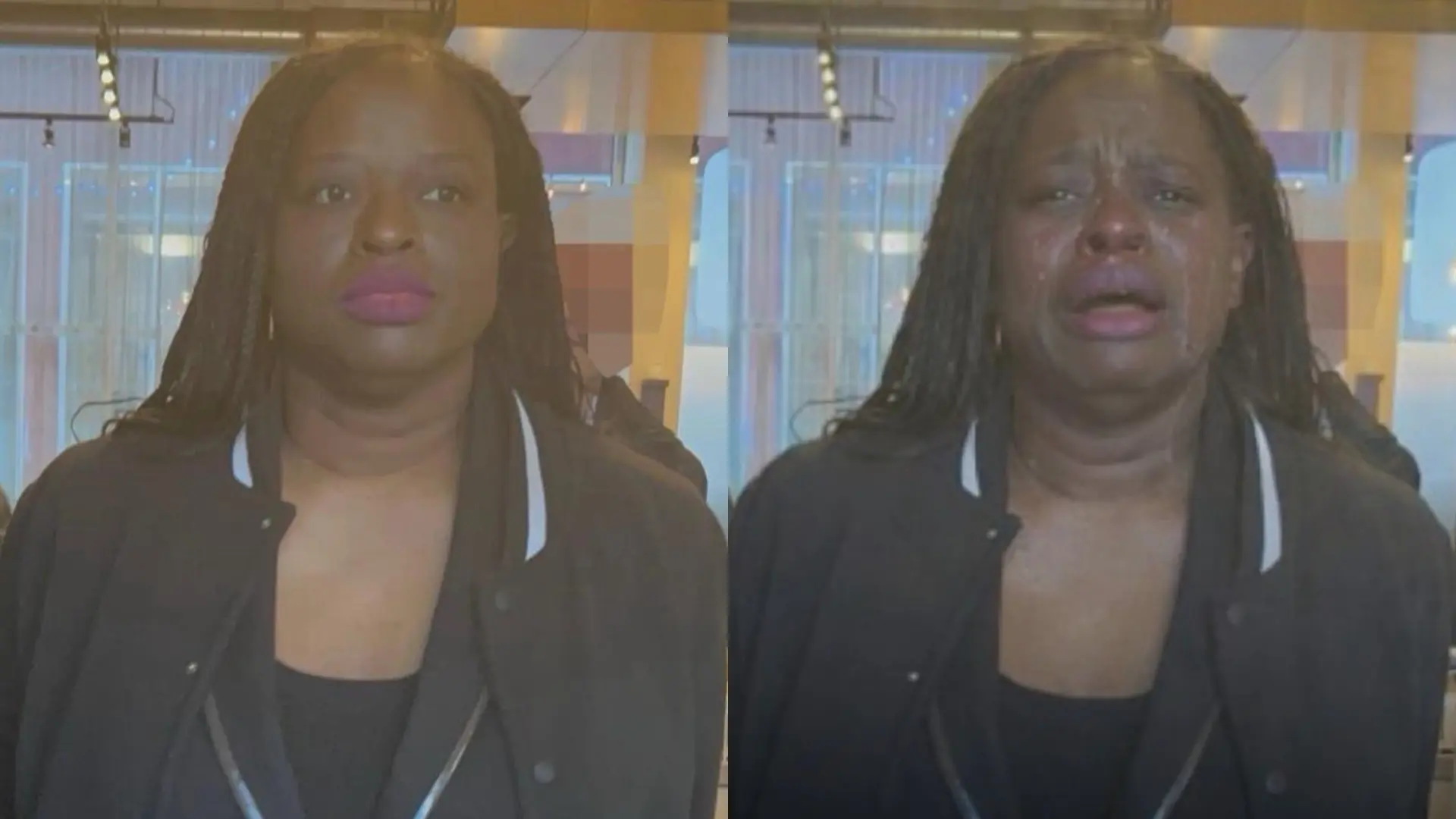

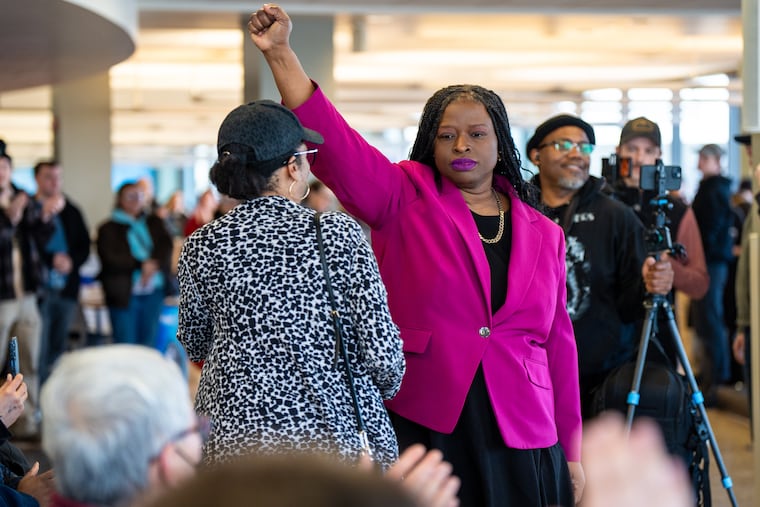

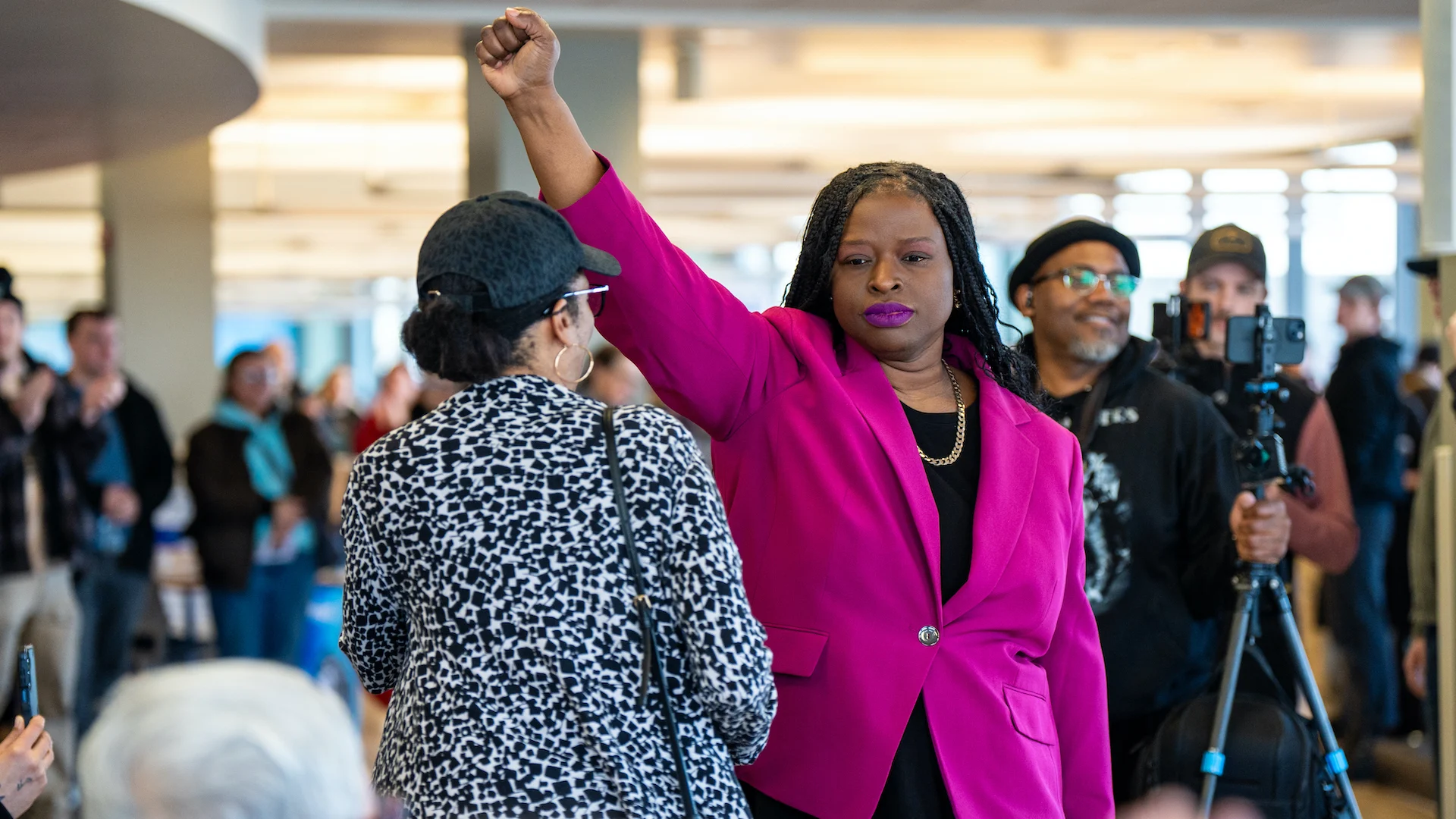

The White House published an AI-altered image of arrested protester Nekima Levy Armstrong in Minnesota, depicting her as crying rather than calm. The manipulated photo, shared without disclosure, misrepresented her emotional state, spreading misinformation and causing reputational harm, and raised concerns about the use of AI in official communications. [AI generated]