The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

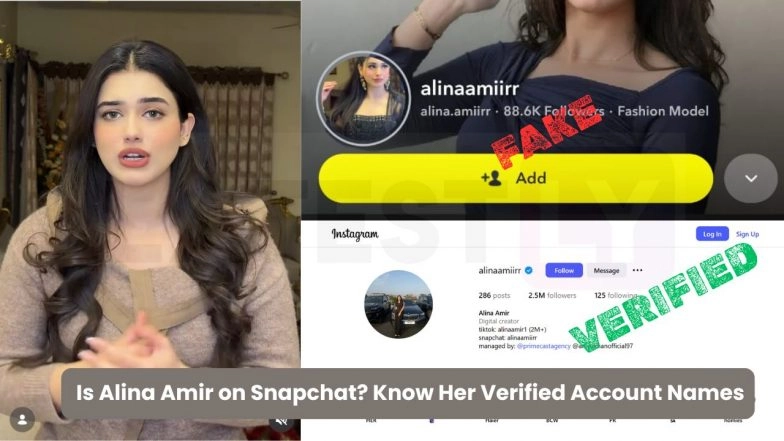

Pakistani influencer Alina Amir was targeted by a viral AI-generated deepfake video falsely attributed to her, resulting in reputational harm and harassment. Amir publicly condemned the misuse of AI technology, called for government intervention, and offered a reward for identifying the perpetrators behind the fabricated content. [AI generated]