The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

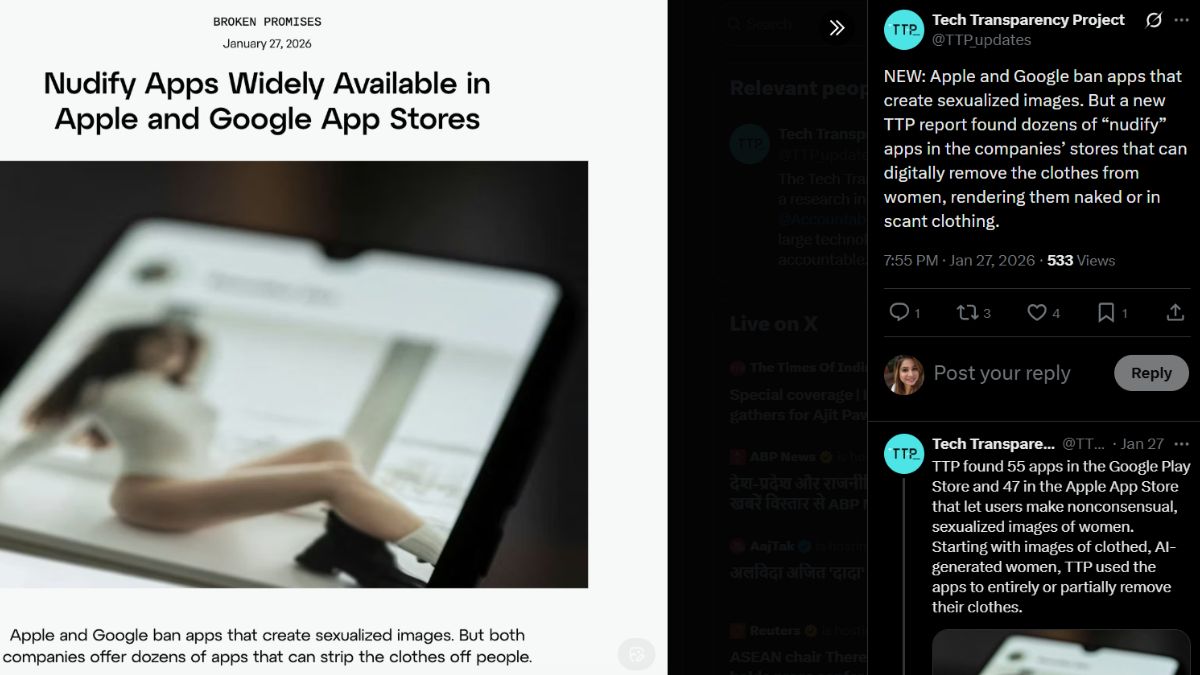

Apple and Google have been criticized for hosting dozens of AI-powered 'nudify' apps on their app stores, which generate non-consensual sexualized deepfake images. Despite policies against such content, these apps have been downloaded hundreds of millions of times, causing significant privacy violations and harm to individuals. [AI generated]
