The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

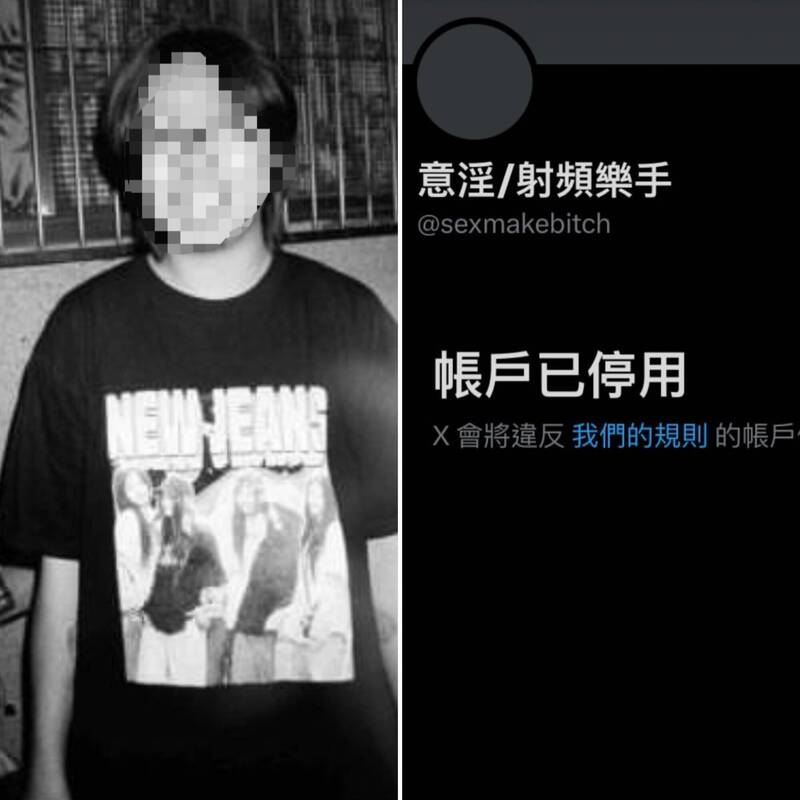

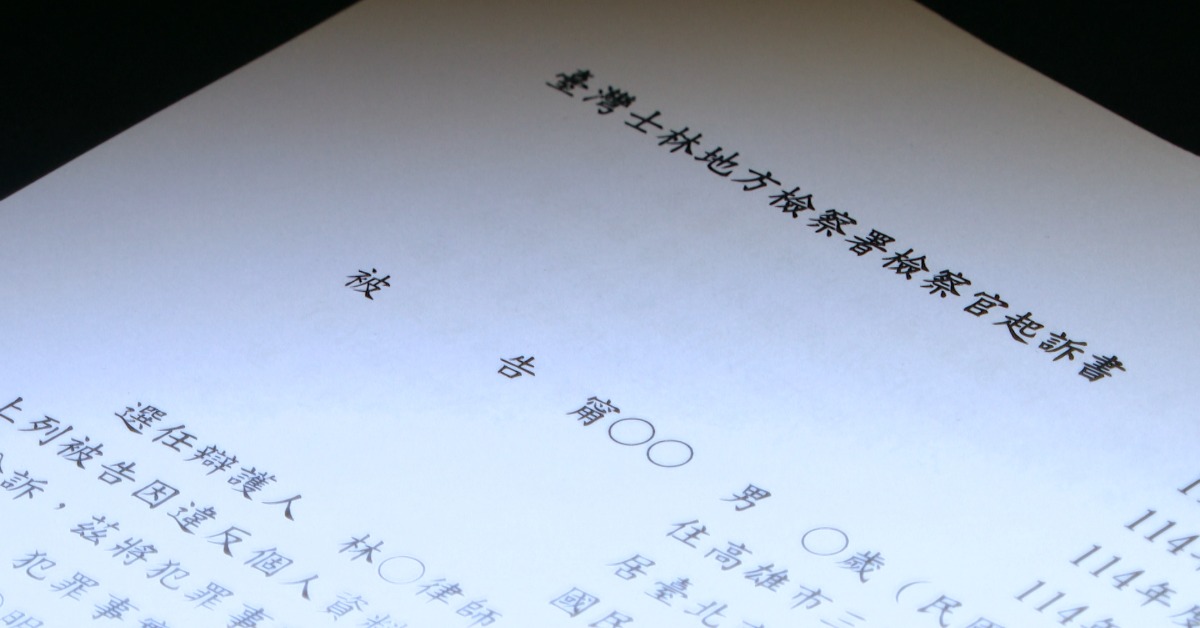

A well-known Taiwanese band photographer used AI deepfake technology to create and distribute non-consensual sexual images of at least five female musicians by combining their social media photos with explicit content. The images were shared online, causing severe harm to the victims' privacy and reputations. The photographer was prosecuted under personal data protection and criminal laws. [AI generated]