The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

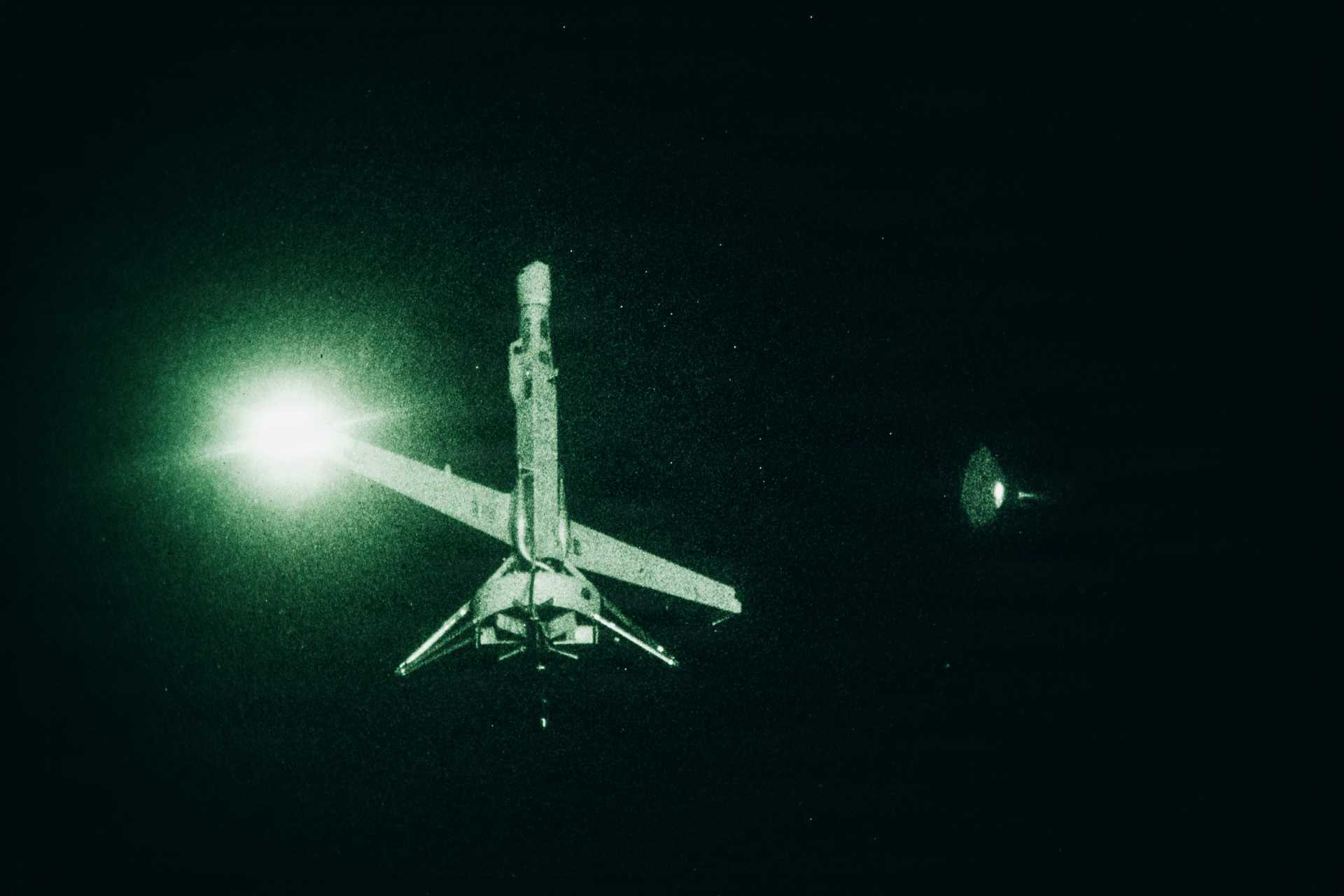

The Indian Army has procured V-BAT unmanned aircraft systems from the US firm Shield AI, integrating the Hivemind autonomy software for deployment on sensitive borders. The AI-enabled drones can sense, decide, and act autonomously in complex environments, raising credible future risks of harm if they are misused or malfunction. [AI generated]