The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

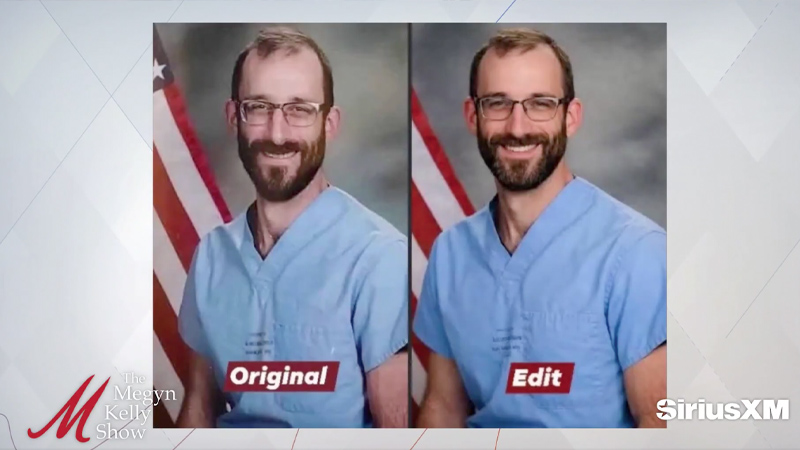

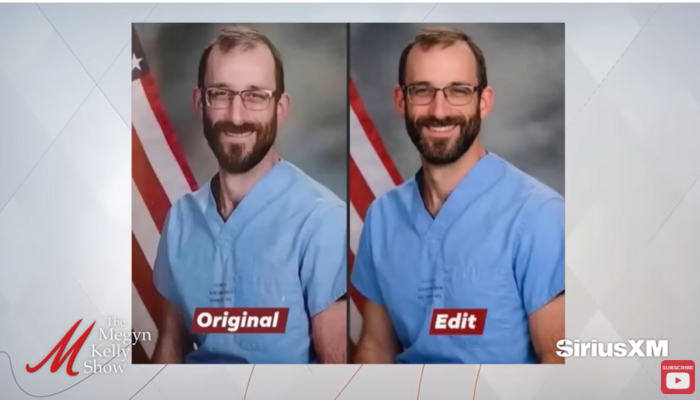

MS NOW (formerly MSNBC) broadcast and published an AI-enhanced image of Alex Pretti, who was fatally shot by federal agents in Minneapolis. The altered image, which made Pretti appear more attractive, was used in news segments and online without disclosure, misleading the public and drawing criticism before a quiet correction was issued. [AI generated]