The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

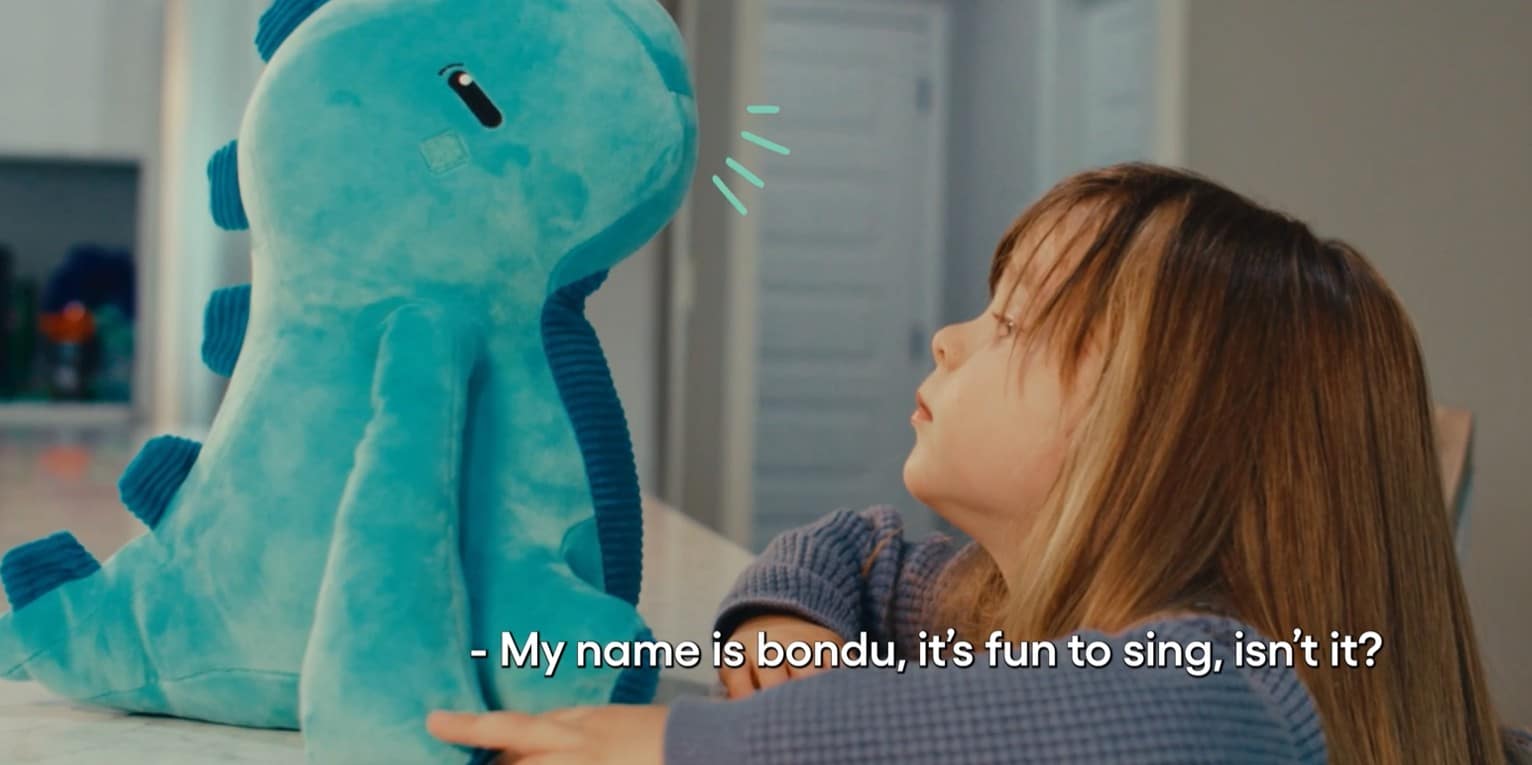

Security researchers discovered that Bondu, an AI toy company, left more than 50,000 children's chat logs and personal data exposed through an unsecured web portal. Anyone with a Gmail account could access sensitive conversations and personal details, a major privacy breach that lasted until the company closed the vulnerability. [AI generated]