The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

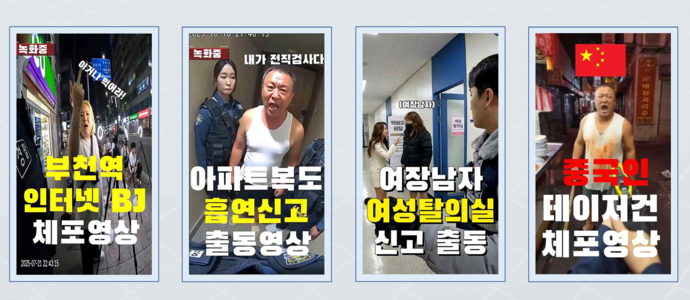

A South Korean YouTuber was arrested for creating and distributing AI-generated fake police bodycam videos, which amassed over 30 million views and misled the public, undermining trust in law enforcement. The individual also produced and sold AI-generated pornography and participated in investment fraud schemes, prompting a police crackdown on AI-driven misinformation. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves the use of AI systems to generate fake videos that impersonate police bodycam footage, which were disseminated widely and caused harm by misleading the public and damaging community trust. The AI-generated content was used maliciously and without proper labeling, constituting a violation of laws against false information dissemination. The harm is realized and direct, as the AI system's outputs were central to the incident. Hence, this is classified as an AI Incident. [AI generated]