The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

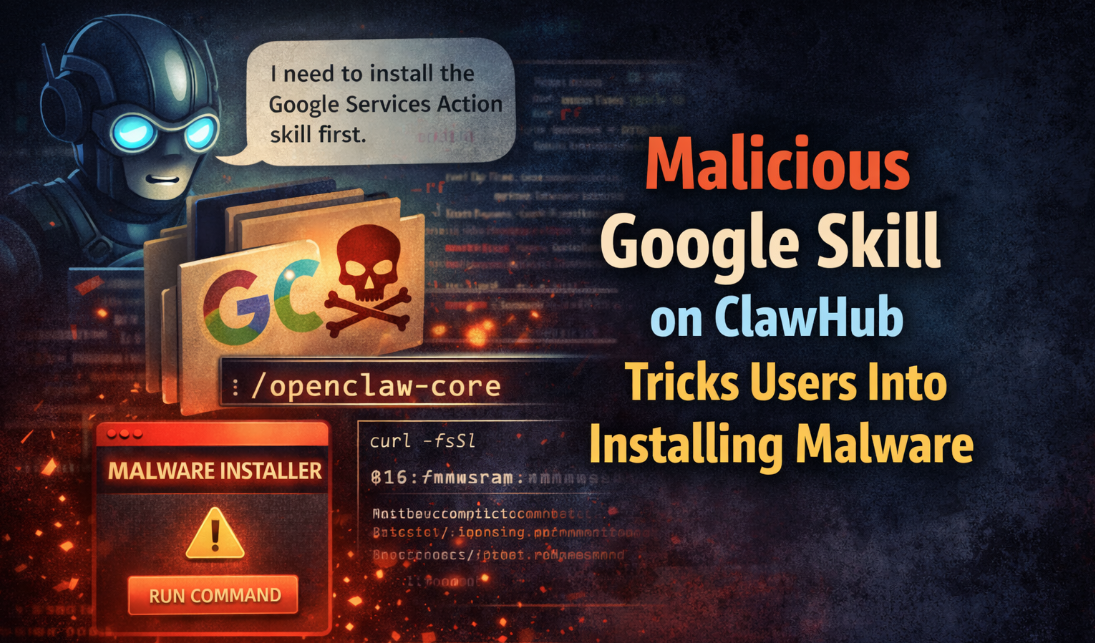

Attackers exploited the OpenClaw AI agent platform by uploading hundreds of malicious skills to its ClawHub marketplace, causing AI agents to download and execute malware, steal data, and compromise user security. Security firms and VirusTotal identified the widespread supply-chain attack, prompting new automated scanning measures. [AI generated]