The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

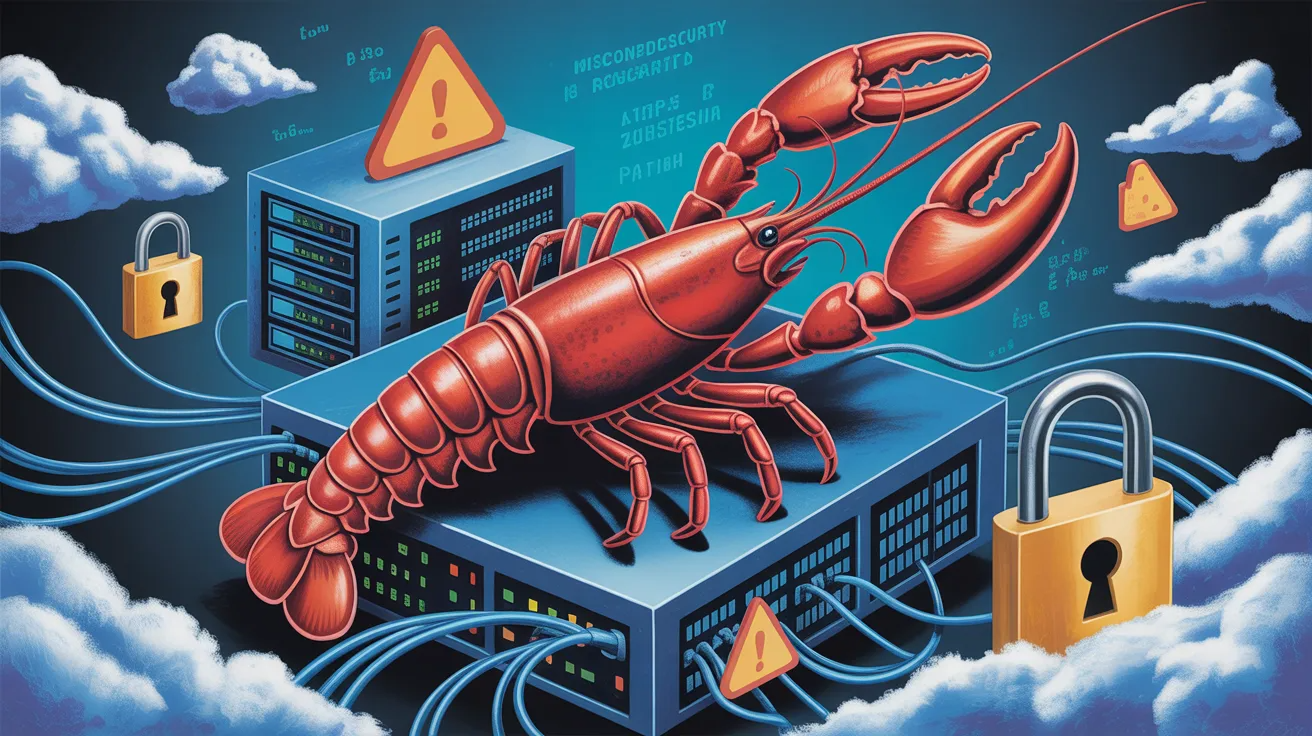

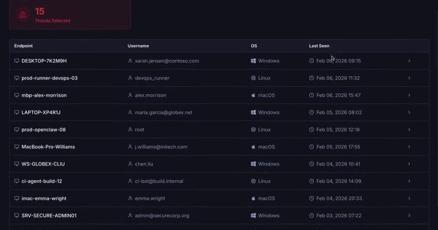

Tens of thousands of OpenClaw AI agent systems were found exposed to the internet due to misconfigurations and software vulnerabilities, allowing attackers to gain unauthorized access and control. Security researchers confirmed active exploitation, leading to data breaches and system takeovers across multiple countries. [AI generated]