The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

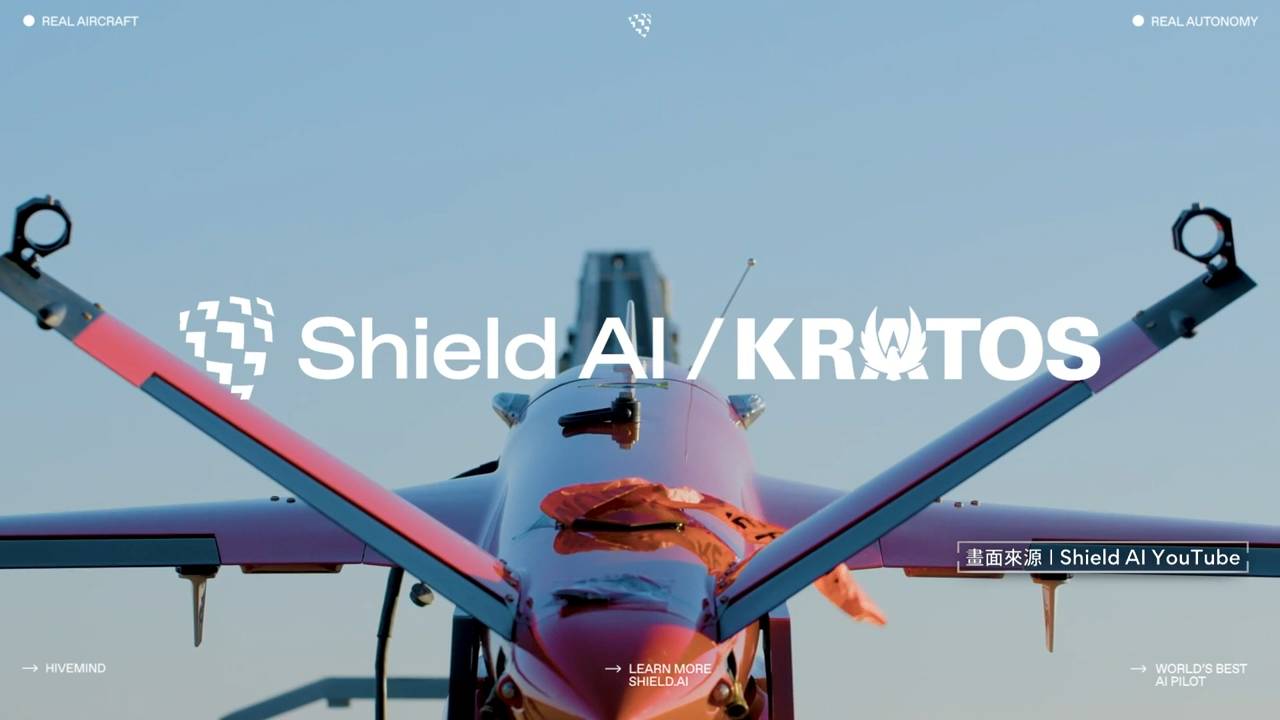

Shield AI and Taiwan's NCSIST have partnered to integrate Shield AI's Hivemind AI platform into Taiwanese unmanned systems, enabling autonomous mission execution and swarm coordination for military drones. The deployment of these AI-driven autonomous weapon systems raises concerns about potential future harm due to their combat capabilities and operational autonomy. [AI generated]