The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

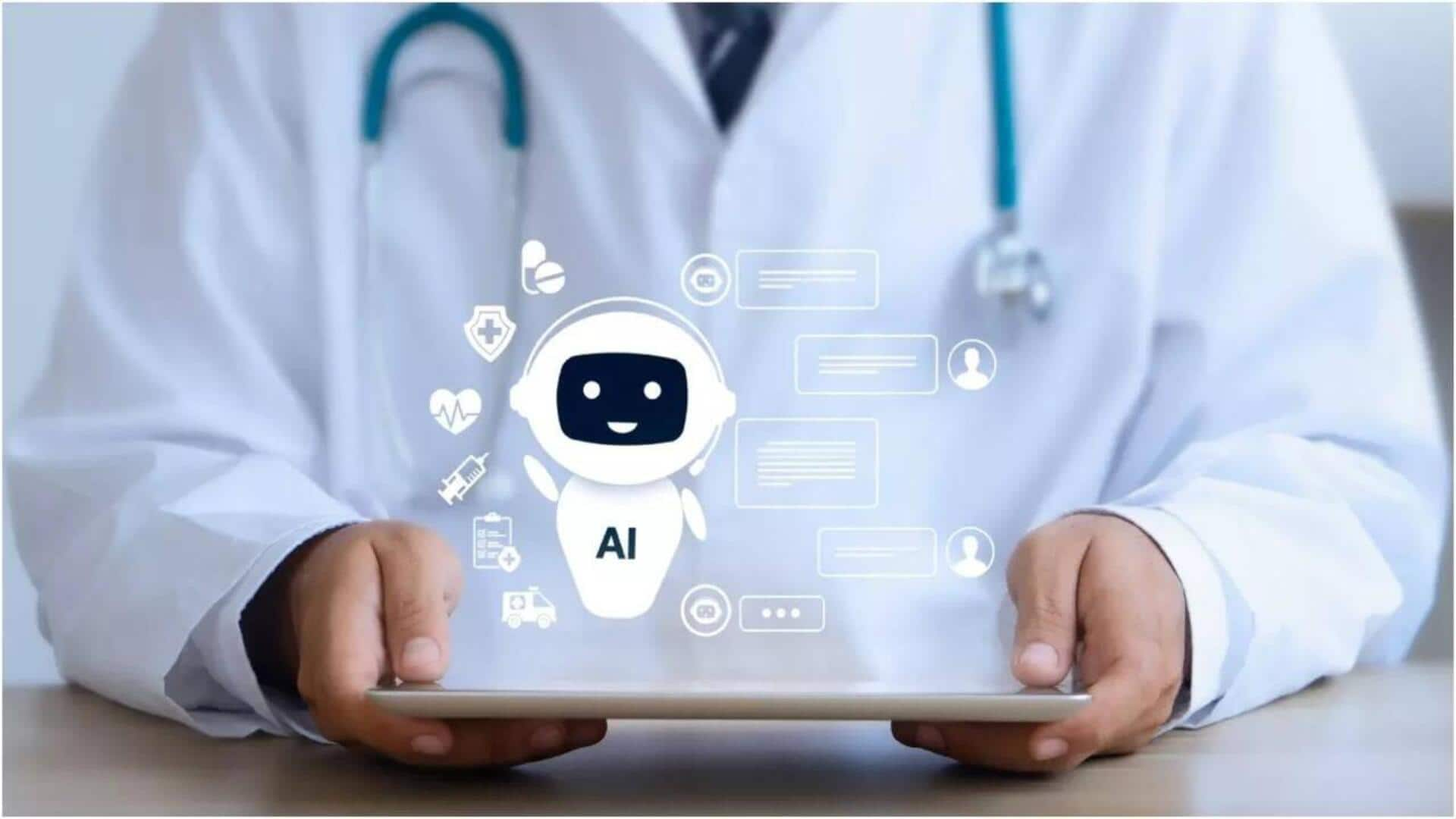

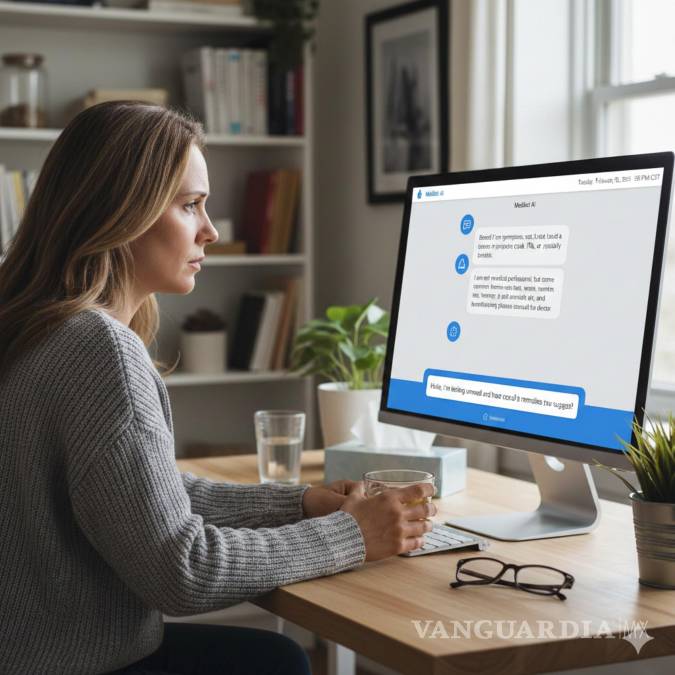

A large-scale study led by Oxford University found that AI chatbots, including those based on large language models, frequently gave incorrect or inconsistent medical advice and sometimes failed to recognize urgent health needs. The researchers warn that relying on these chatbots for health guidance can be dangerous and is no better than using traditional sources.[AI generated]