The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

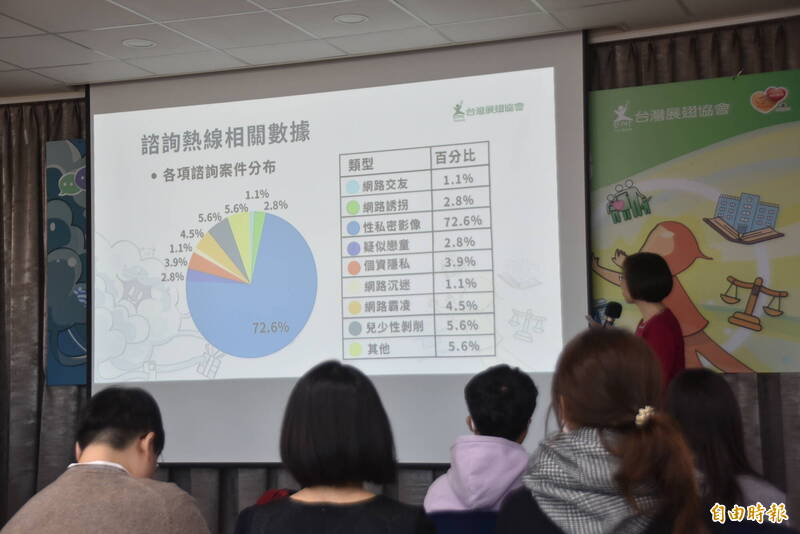

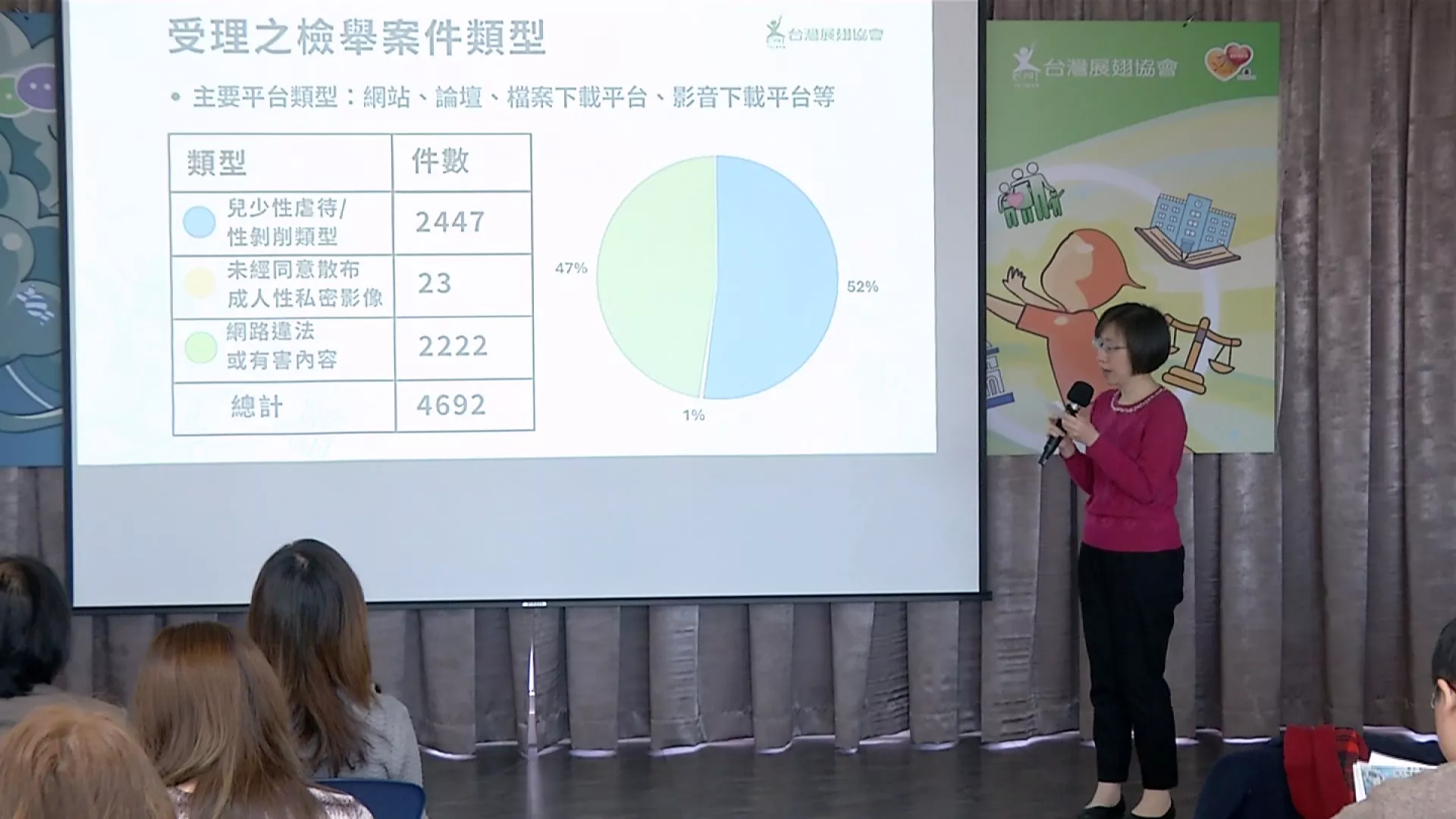

In Taiwan, generative AI tools such as 'one-click undressing' and deepfake platforms have enabled the creation and spread of sexually exploitative images of minors, with over half of reported online abuse cases involving such content. NGOs and officials are calling for stricter regulation and bans on these AI tools to protect children. [AI generated]