The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

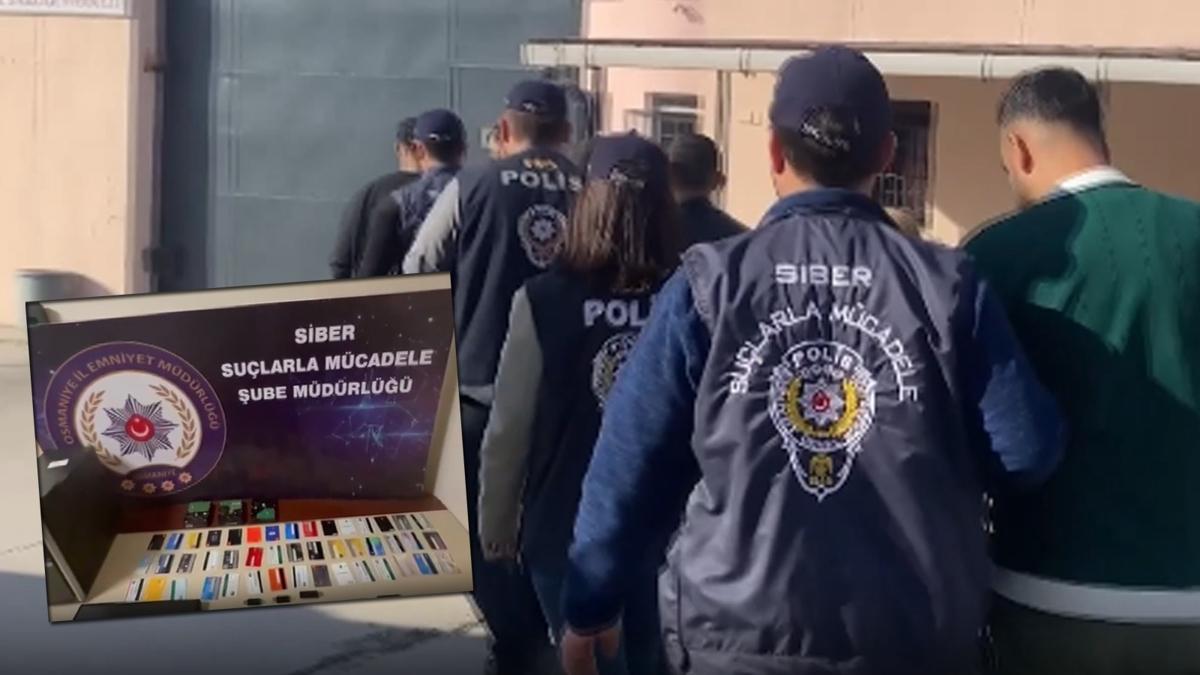

In Osmaniye, Turkey, a criminal group used AI to create deepfake images and voices of government officials, promoting fake investment schemes on social media. The scam resulted in 410 million TL in financial losses. Police arrested eight suspects, with five subsequently jailed. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves the use of AI systems to generate realistic fake images and voices (deepfake technology) to deceive people into fraudulent investment schemes. This use of AI directly led to significant financial harm (fraud involving 410 million TL) to individuals, which qualifies as harm to property and communities. Therefore, this is an AI Incident because the AI system's use directly caused harm through fraudulent activity. [AI generated]