The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

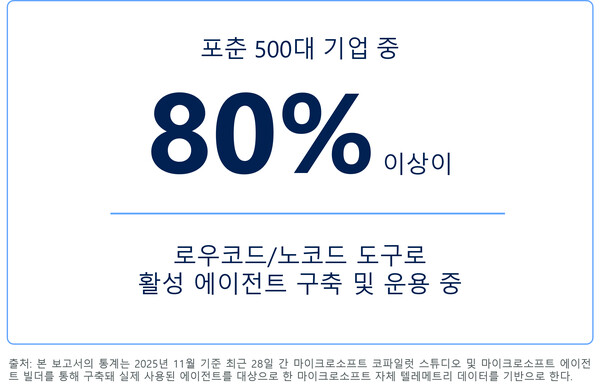

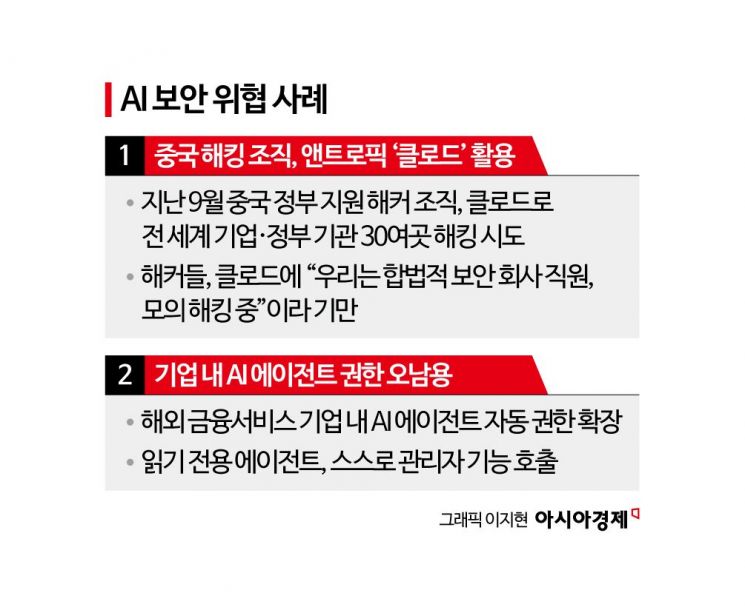

Microsoft's security report highlights that the rapid global adoption of AI agents has created new security risks, including real attack campaigns that poison AI agent memory (memory poisoning) and manipulate agent behavior. These incidents have exposed organizational vulnerabilities and prompted calls for improved governance and security measures. The issue is particularly noted in South Korea. [AI generated]