The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

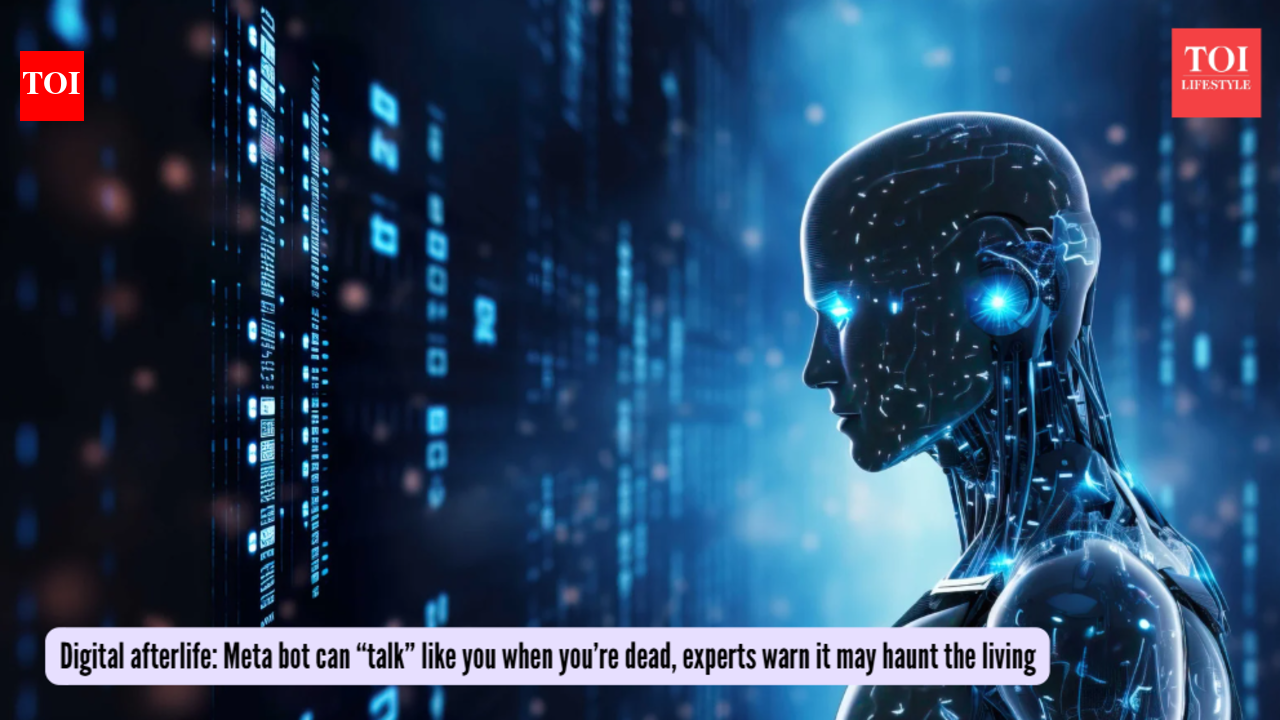

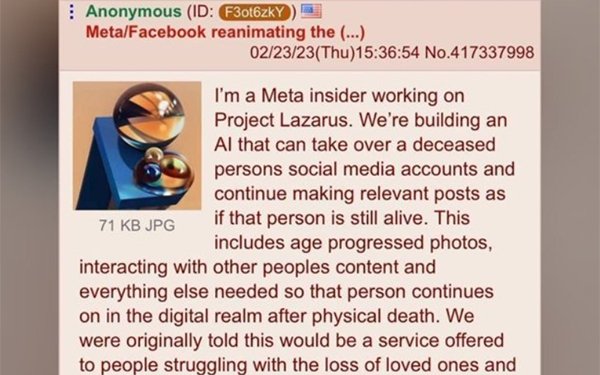

Meta has patented an AI language model that can imitate a user's social media activity, including after the user's death, by analyzing their digital footprint. Although the technology has not yet been deployed, it raises concerns about privacy, digital identity, and the ethics of creating digital clones of deceased individuals.[AI generated]