The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

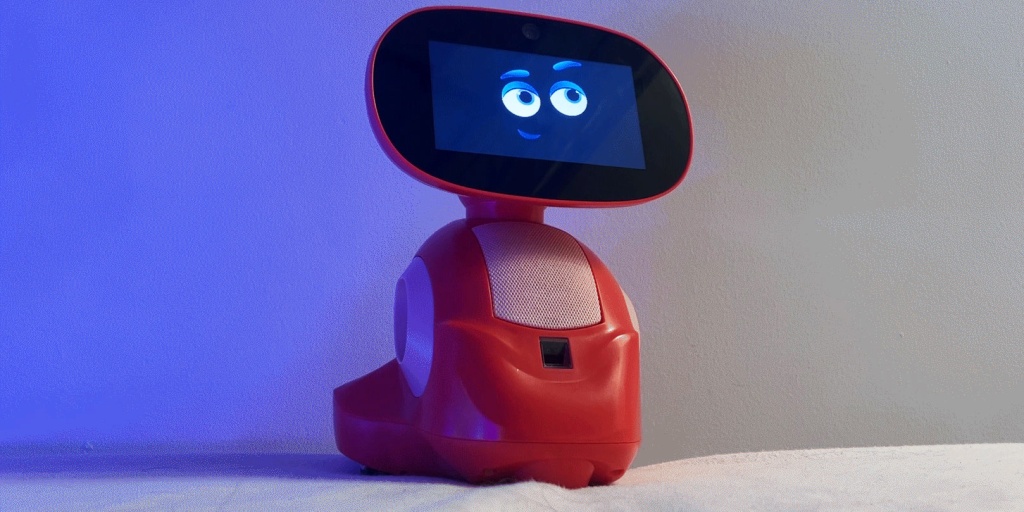

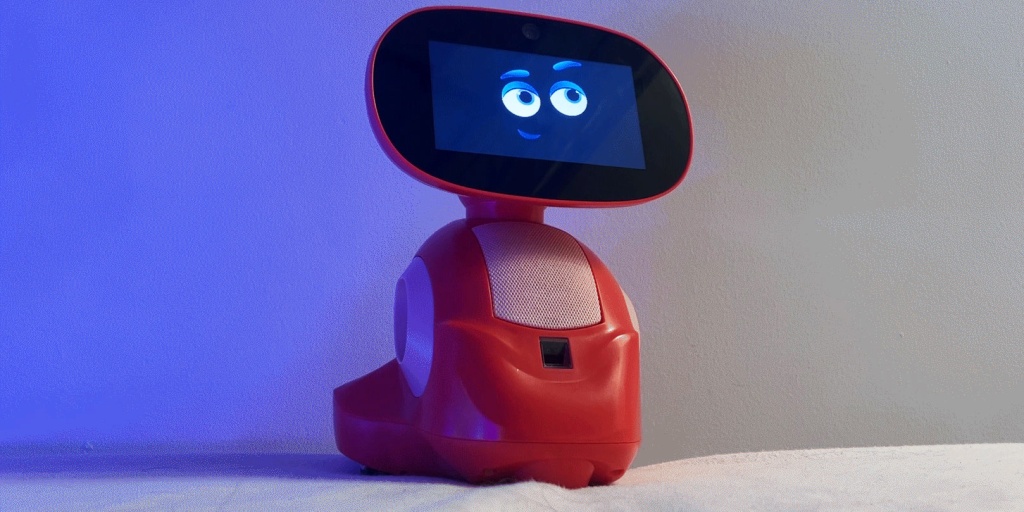

U.S. senators revealed that Miko, an AI toy manufacturer, exposed thousands of audio responses from its toys' conversations with children in an unsecured, publicly accessible database. The incident compromised children's privacy by leaking personal details, prompting a federal investigation and raising concerns about data protection in AI-powered toys. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves an AI system (AI-powered toys with conversational capabilities) whose use, combined with a security failure, led to the exposure of sensitive data related to children. This constitutes harm under the category of violations of human rights and legal protections (privacy and data security). The exposure of thousands of audio responses with personal details is a realized harm, not just a potential risk. Therefore, this qualifies as an AI Incident rather than a hazard or complementary information. The involvement of AI is explicit and central to the incident, and the harm is direct and significant. [AI generated]