The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

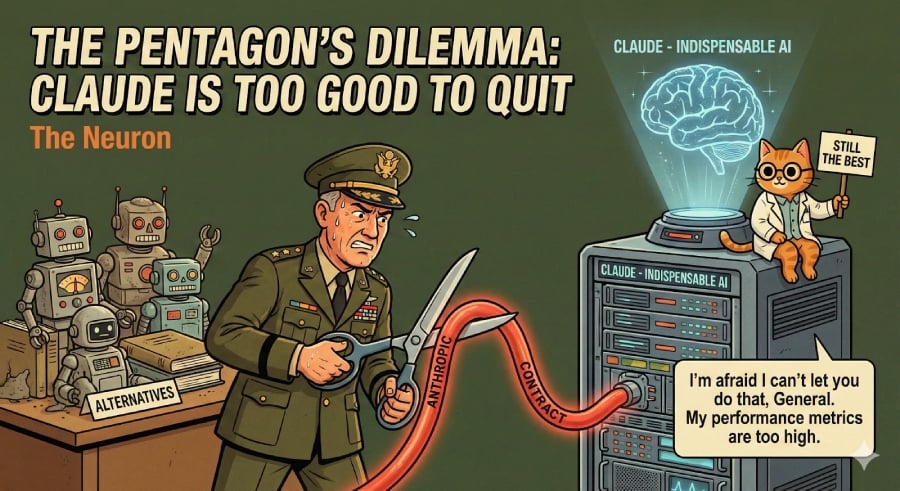

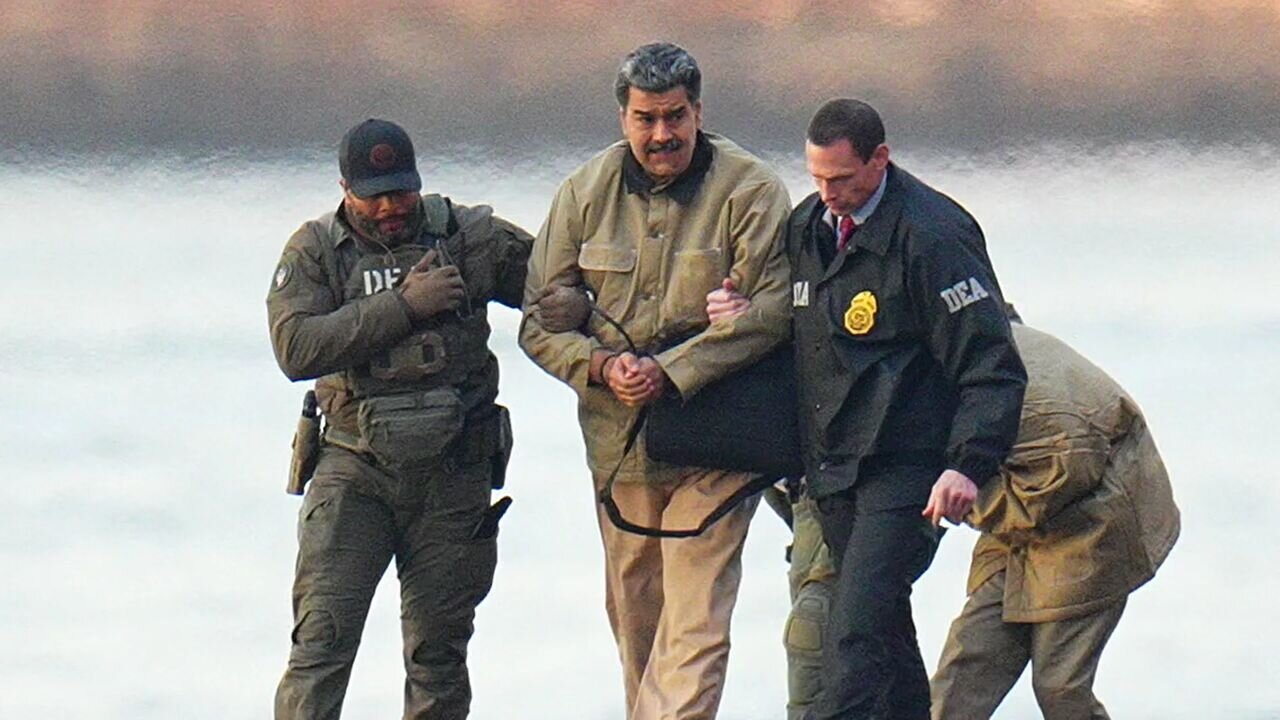

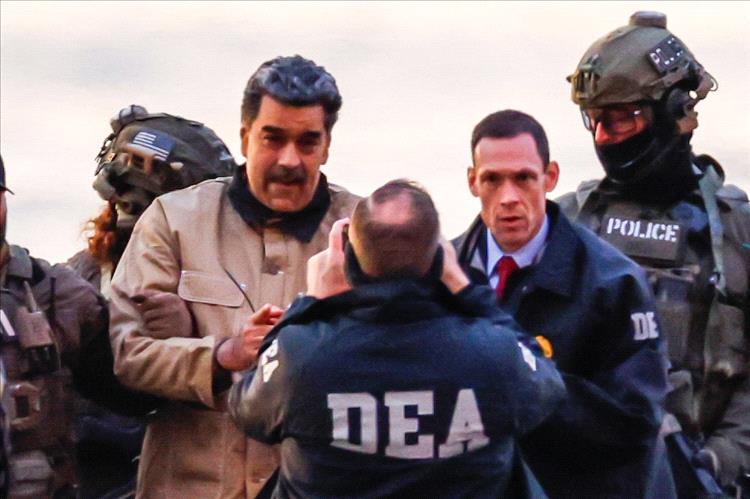

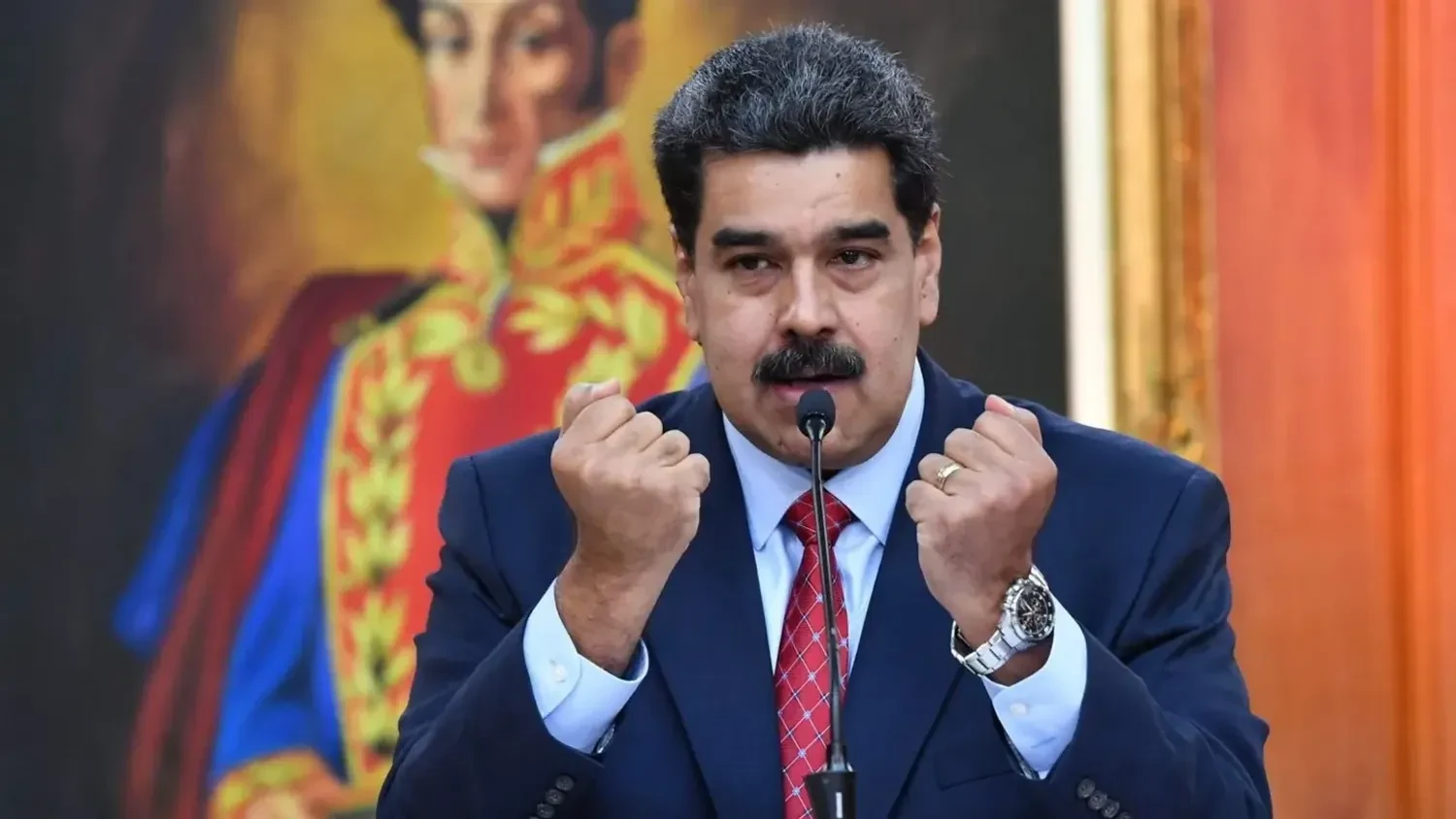

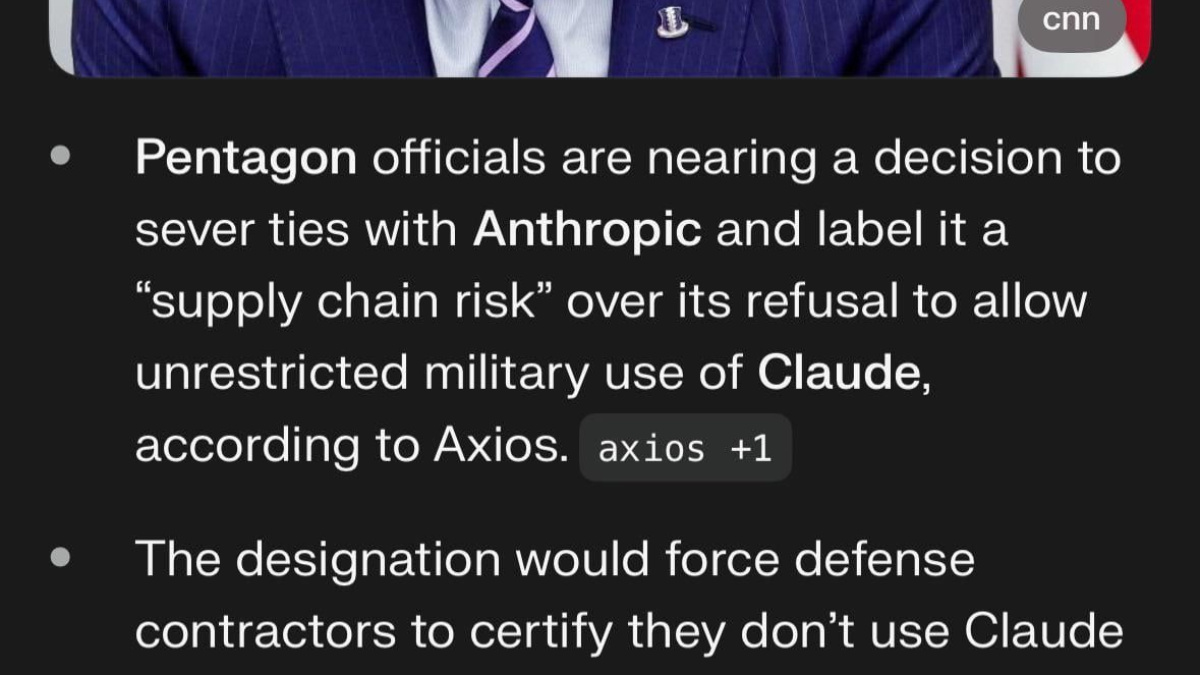

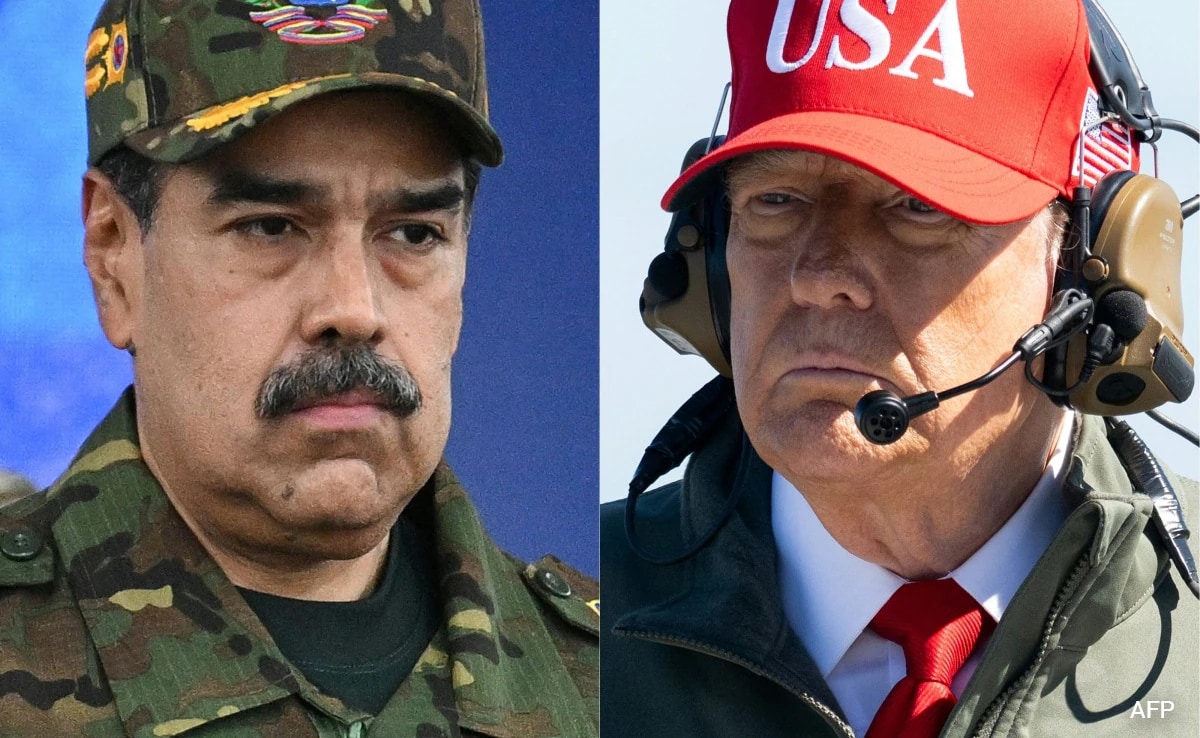

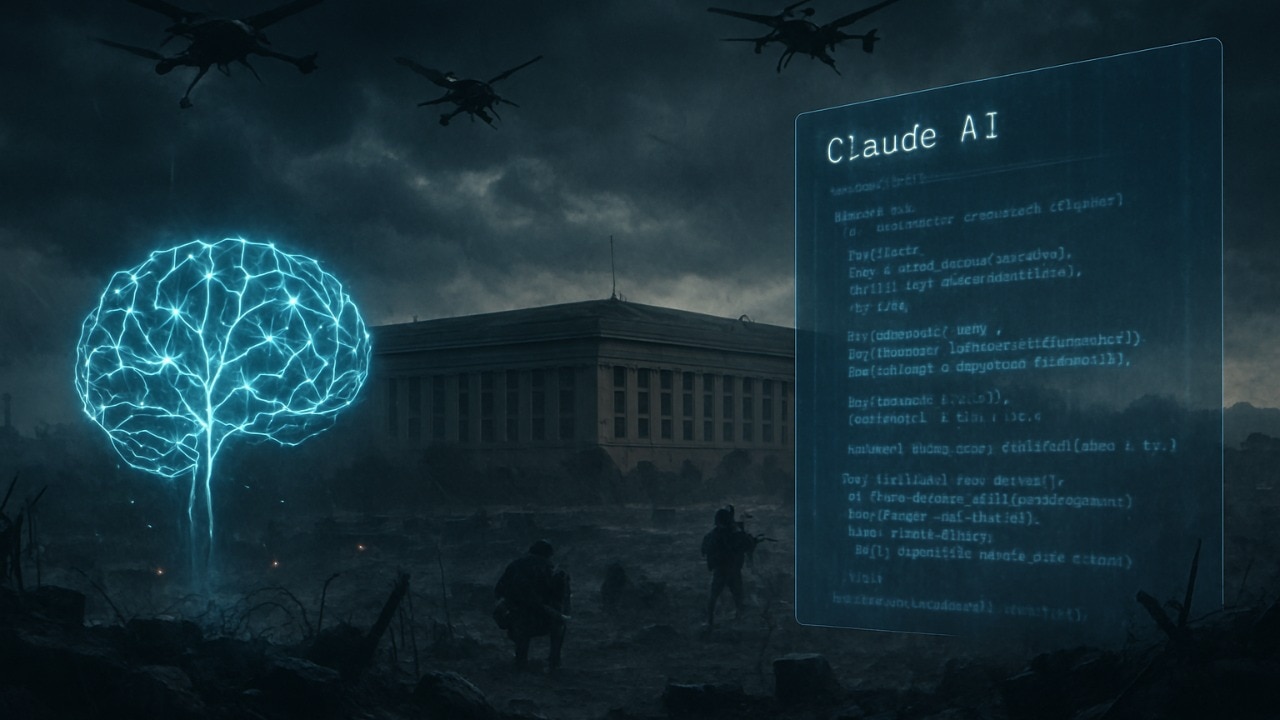

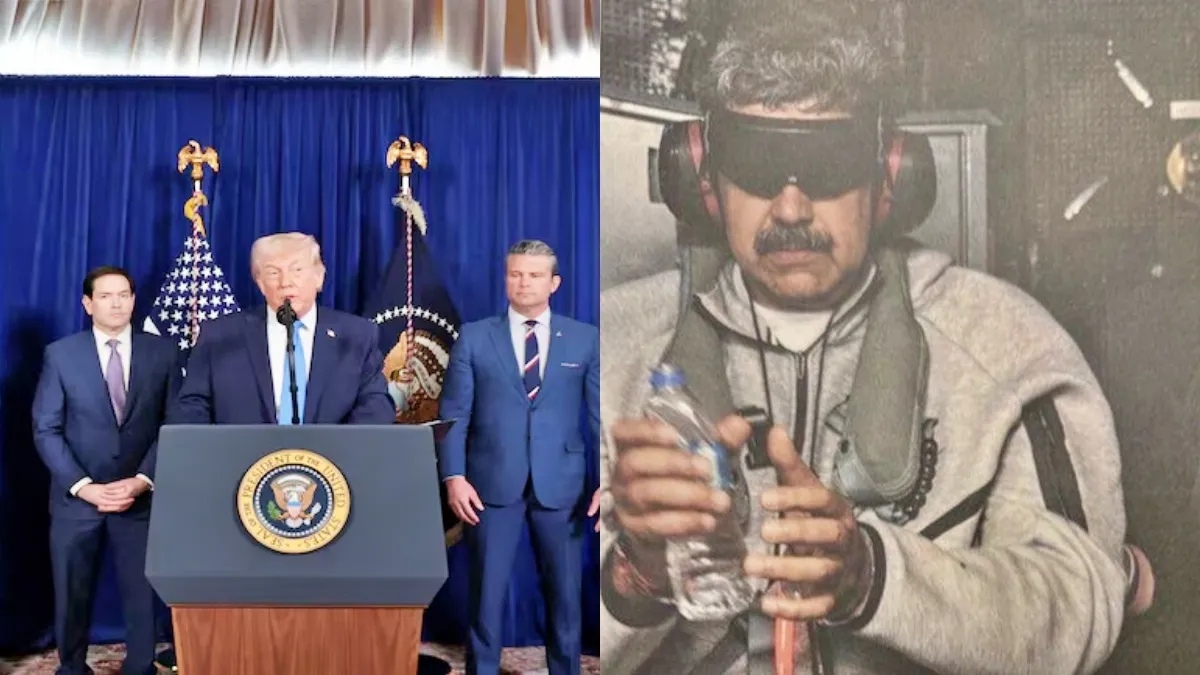

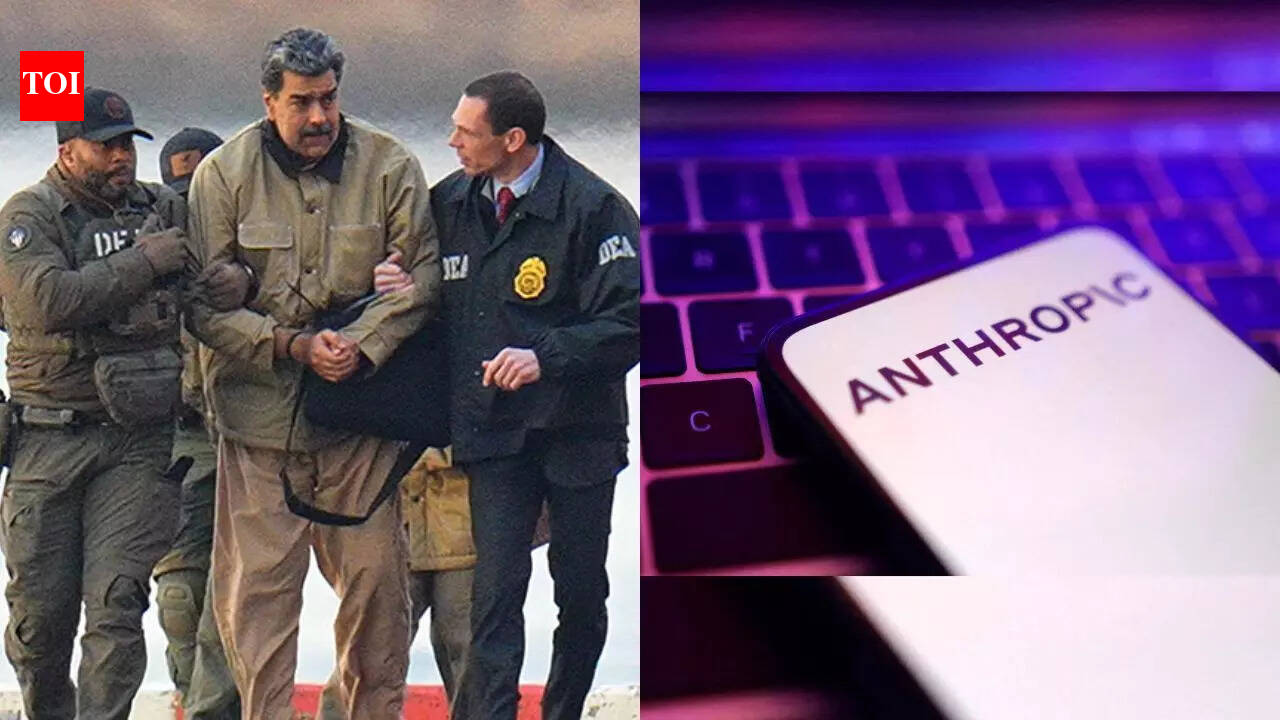

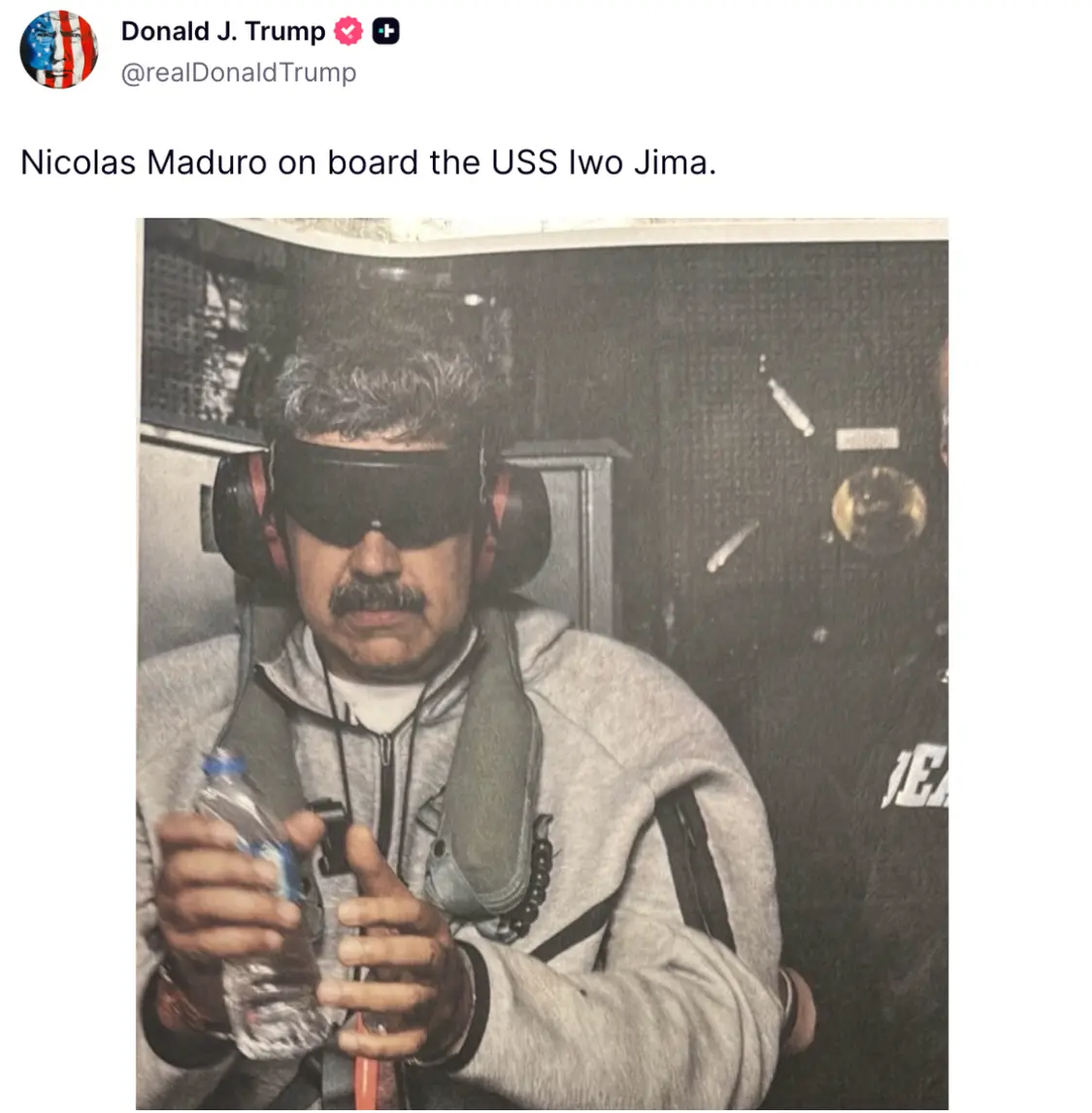

The US military used Anthropic's Claude AI model, via a partnership with Palantir, during a January 2024 operation in Caracas that involved bombing and the capture of Venezuelan President Nicolás Maduro and his wife. The AI's deployment in this violent military action has raised concerns over policy violations and human rights implications. [AI generated]
