The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

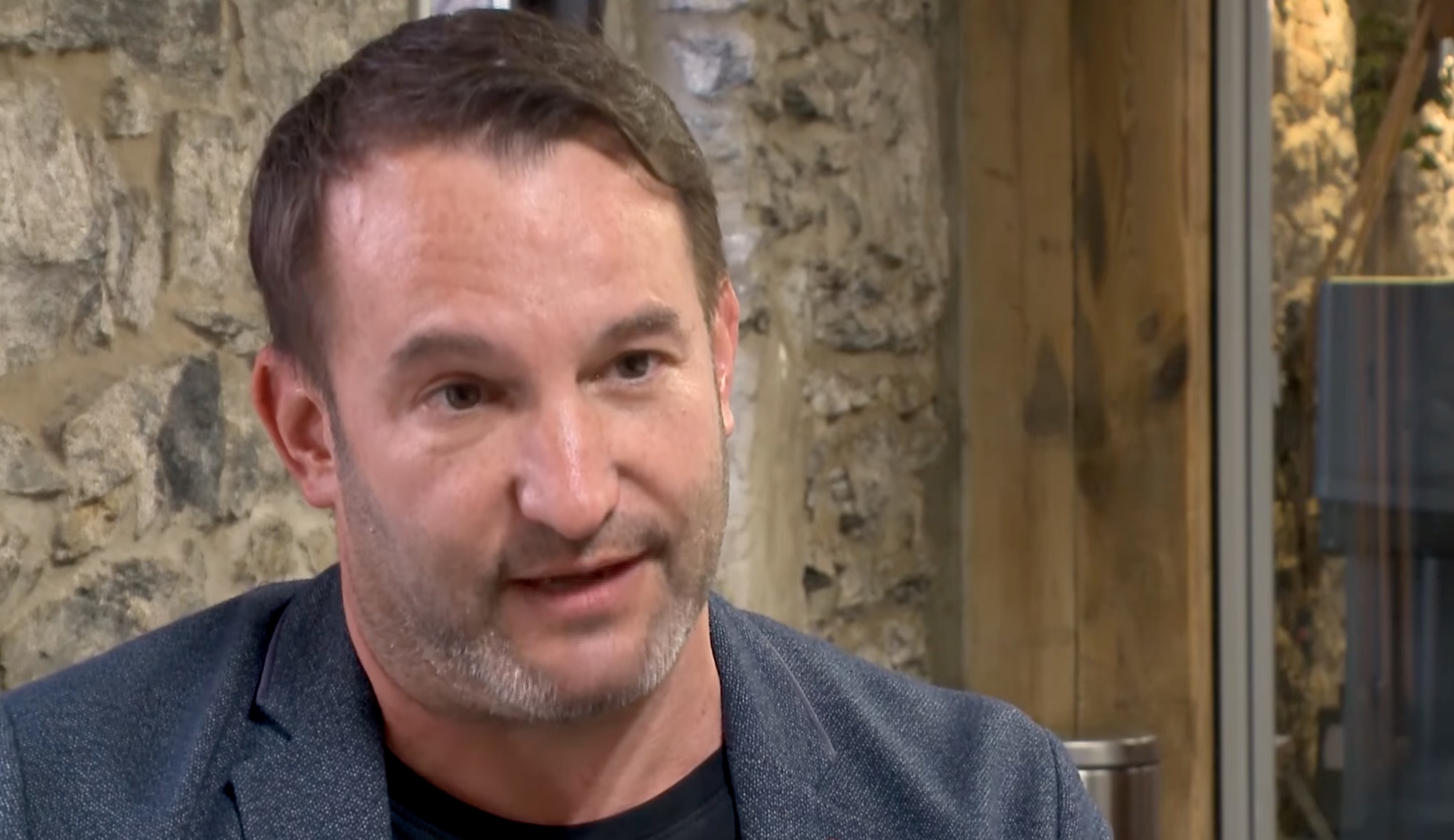

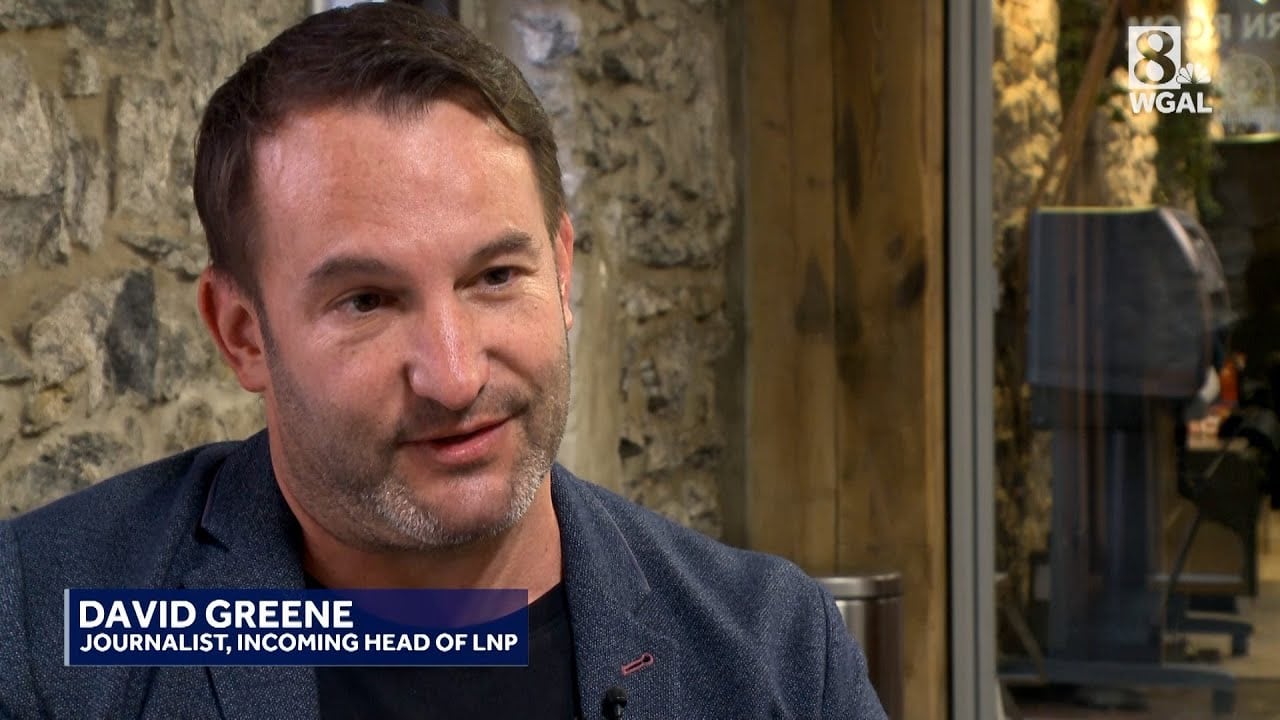

David Greene, a former NPR host, is suing Google, alleging that its AI tool NotebookLM used a synthetic podcast voice closely resembling his own without his consent. Greene claims this violates his rights and harms his professional identity. Google denies the allegations, stating that the voice is based on a paid actor. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves an AI system (NotebookLM) that generates synthetic voices. The lawsuit alleges that the AI system replicated Greene's voice without consent, which constitutes a violation of his rights and intellectual property. This is a direct harm caused by the AI system's use, fulfilling the criteria for an AI Incident. The harm is realized (not just potential), as Greene experiences personal and reputational harm and is pursuing legal action. The case also raises broader legal and ethical questions about AI voice replication and rights, but the primary focus is on the realized harm to Greene from the AI system's use. [AI generated]