The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

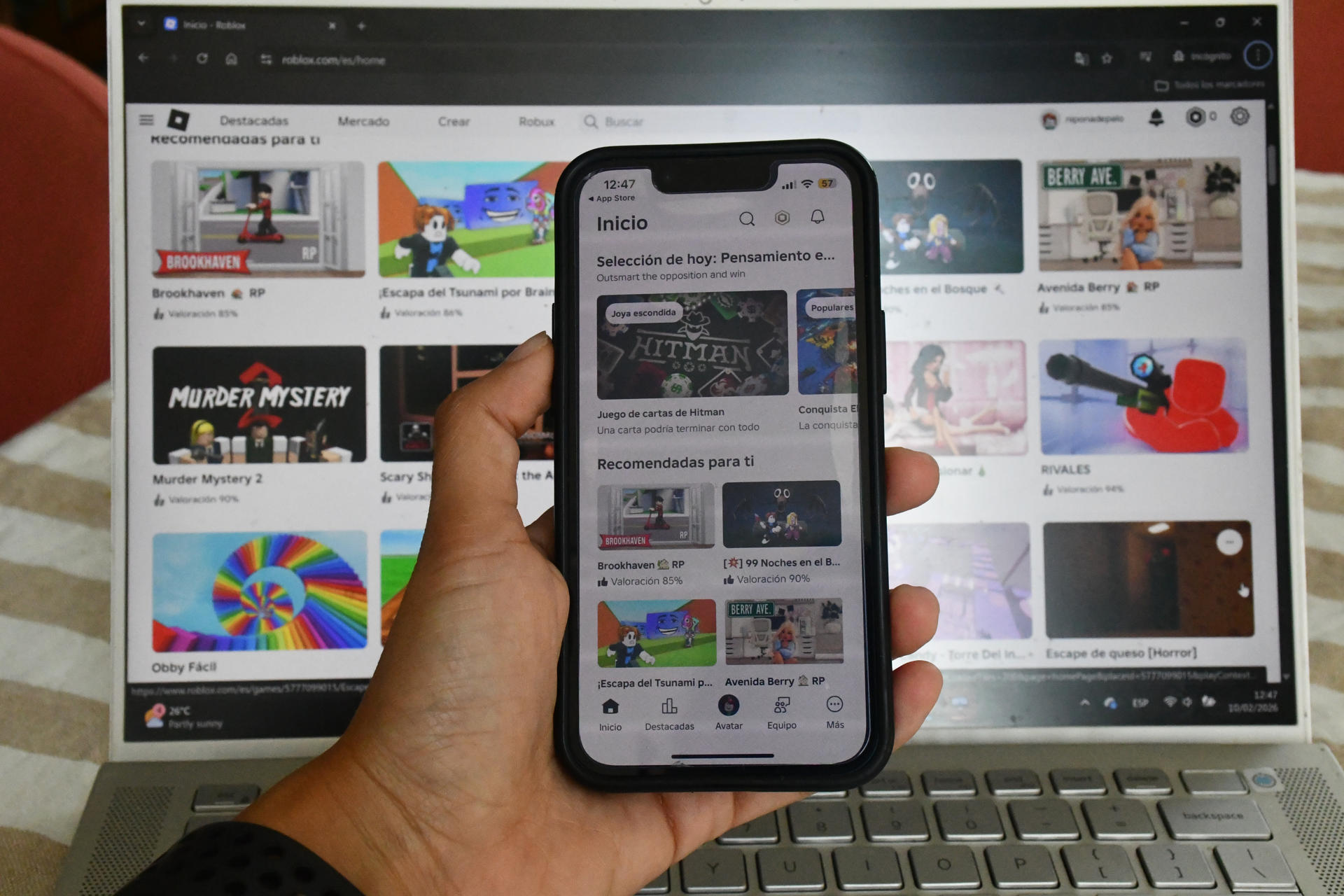

Los Angeles County has sued Roblox, alleging that its AI-driven content moderation and age verification systems failed to protect children from sexual content and exploitation. The lawsuit claims these AI systems are inadequate, leaving minors on the platform exposed to online predators and other harm.[AI generated]