The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

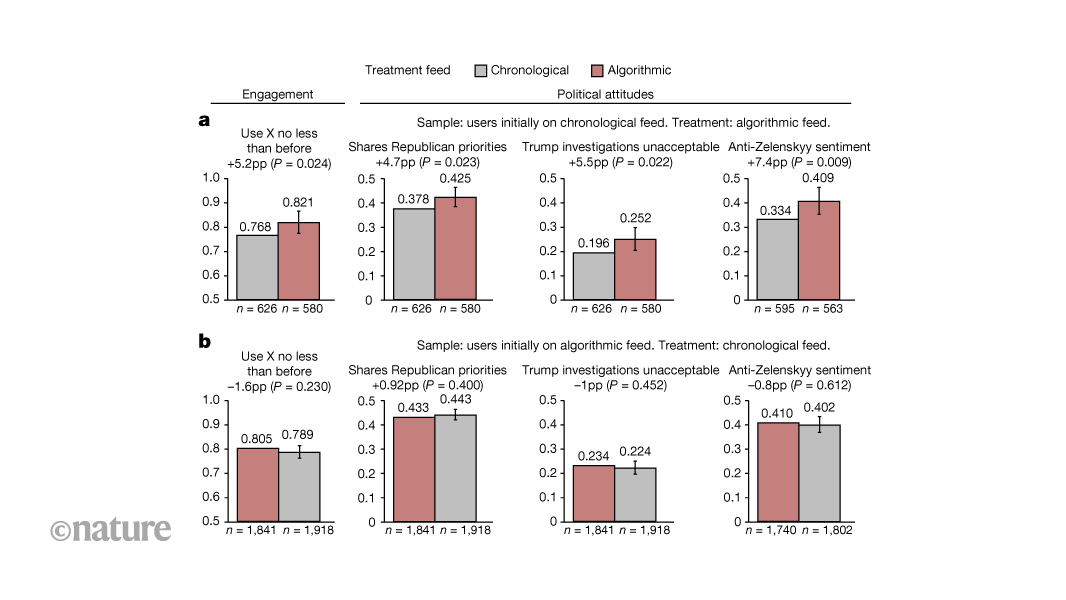

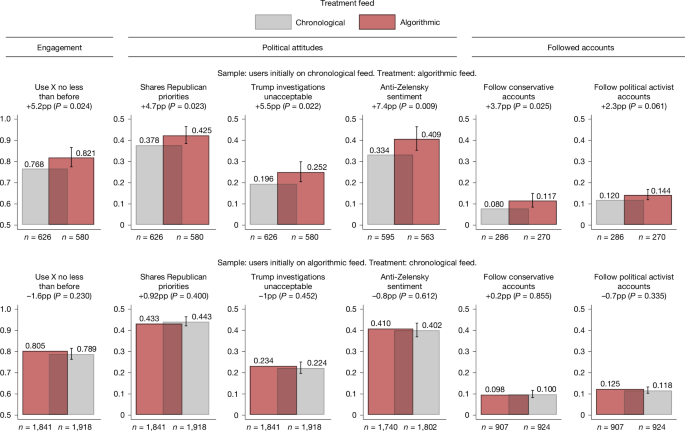

A large-scale study published in Nature found that the AI-driven feed algorithm of X (formerly Twitter) nudges users toward more conservative political attitudes. The experiment, conducted with nearly 5,000 US users, showed that these effects persist even after users switch back to a chronological feed, raising concerns about algorithmic influence on democratic discourse. [AI generated]