The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

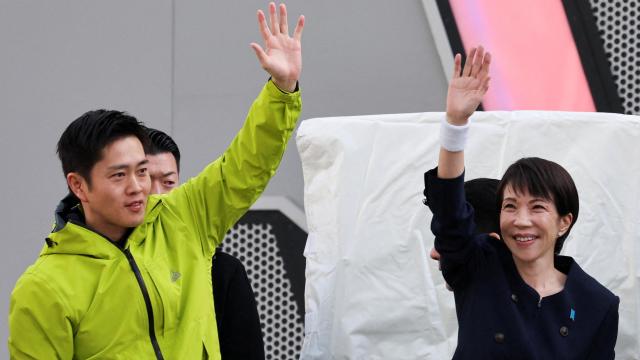

During Japan's House of Representatives election, around 400 China-linked social media accounts used generative AI to produce and spread disinformation targeting Prime Minister Sanae Takaichi. The campaign combined AI-generated images with coordinated posts and aimed to manipulate public opinion and undermine the democratic process. [AI generated]