The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

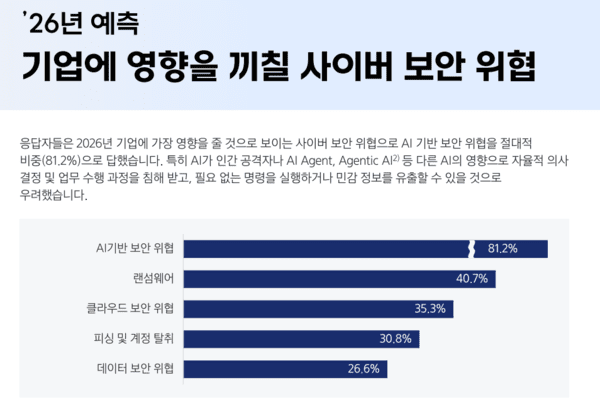

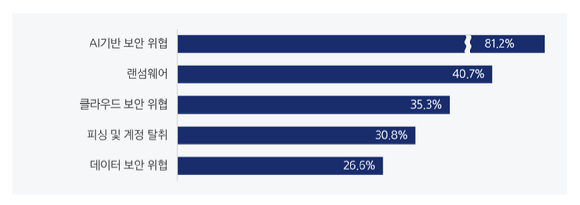

South Korean companies face rising cybersecurity threats from AI systems, particularly generative AI and AI agents. Incidents include AI chatbots generating sensitive data upon request, highlighting risks of data leaks and unauthorized actions. Experts urge strict access controls, comprehensive AI audits, and real-time monitoring to mitigate these AI-driven security vulnerabilities.[AI generated]