The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

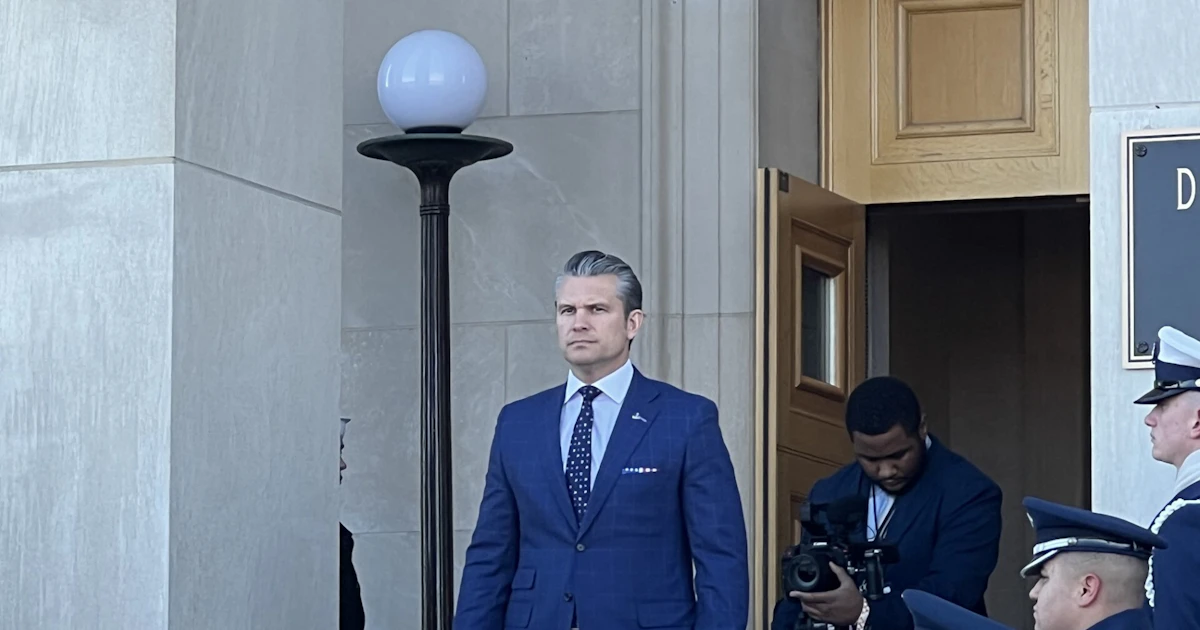

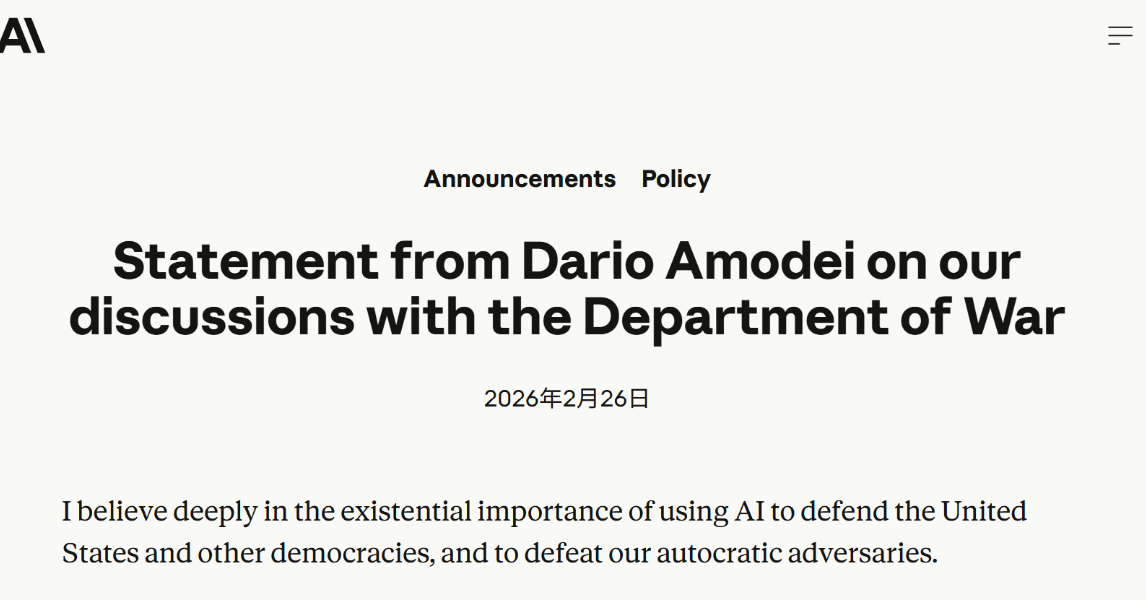

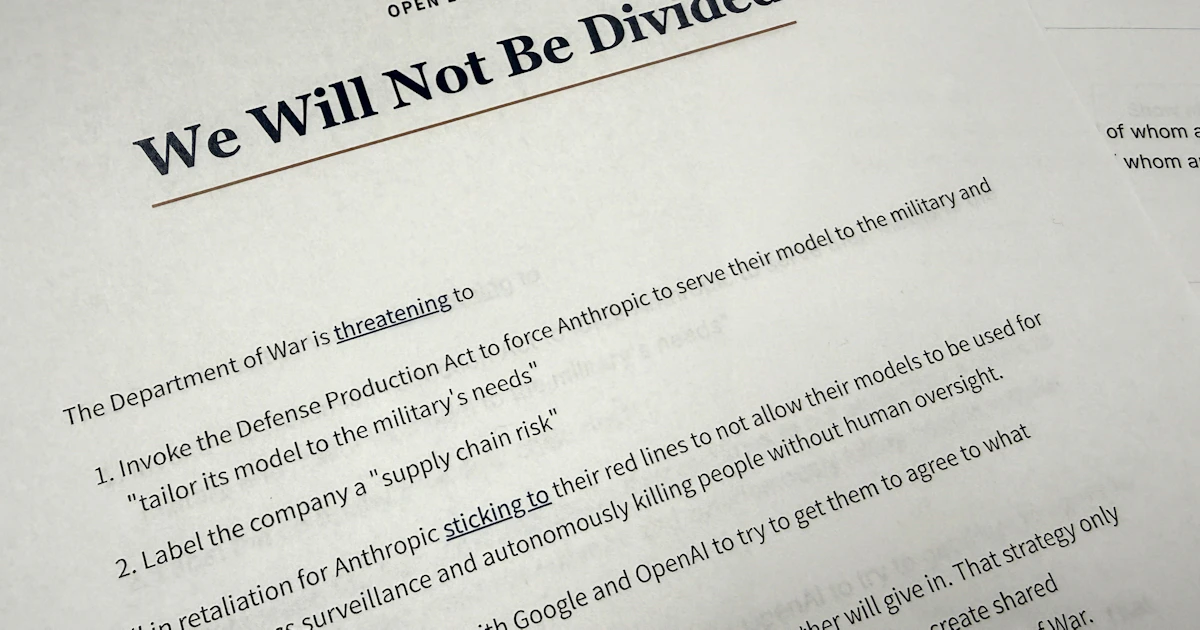

The US military reportedly used Anthropic's Claude AI in a 2026 attack in Venezuela, raising concerns about AI's role in warfare and about the ethical guidelines governing its use. Separately, Anthropic revealed that Chinese AI firms had illicitly used Claude through thousands of fake accounts to improve their own models, violating intellectual property rights.[AI generated]