The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

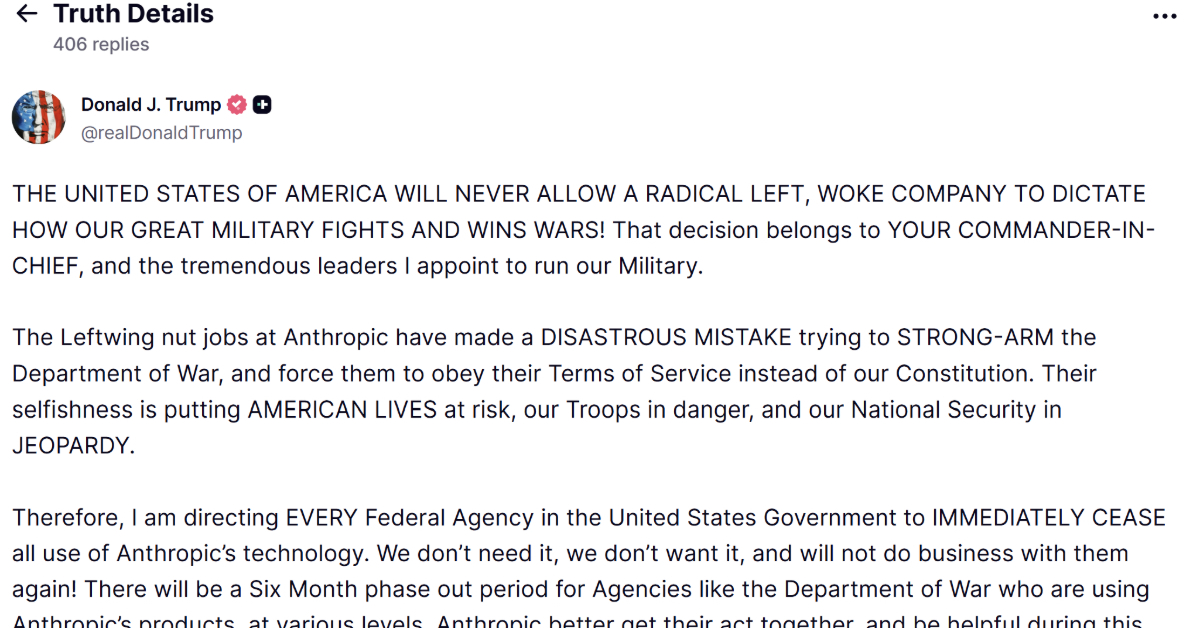

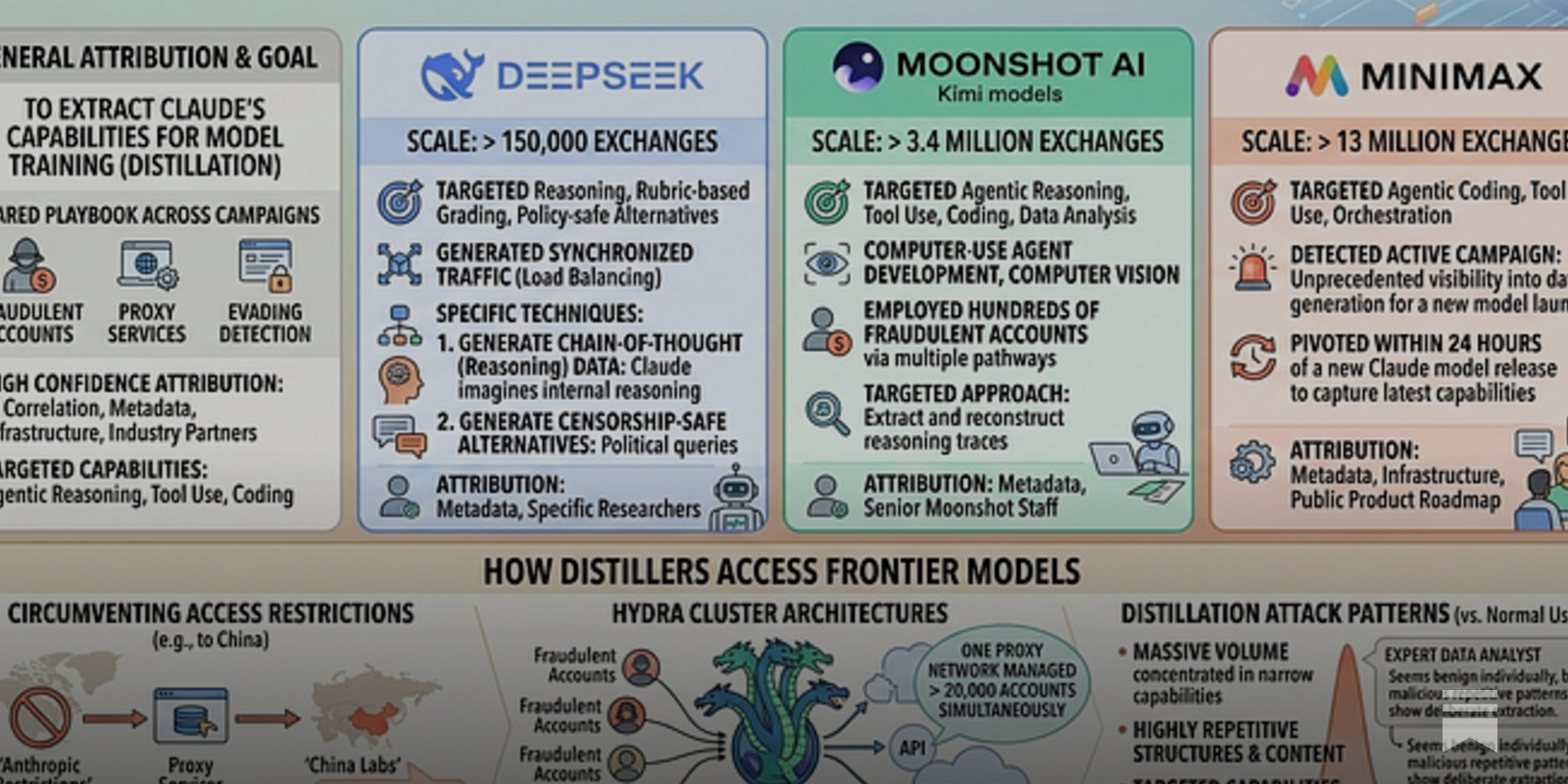

US AI company Anthropic accused Chinese firms DeepSeek, Moonshot AI, and MiniMax of creating over 24,000 fake accounts to extract data from its Claude chatbot. The data, obtained through over 16 million interactions, was allegedly used to train competing AI models, violating Anthropic's terms and raising intellectual property and security concerns.[AI generated]

![image 3](https://i.s3.glbimg.com/v1/AUTH_da025474c0c44edd99332dddb09cabe8/internal_photos/bs/2024/t/G/Ee6BoXTMiTzQKKh5gZqA/anthropic-jackie-molloy-for-the-new-york-times.jpg)