The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

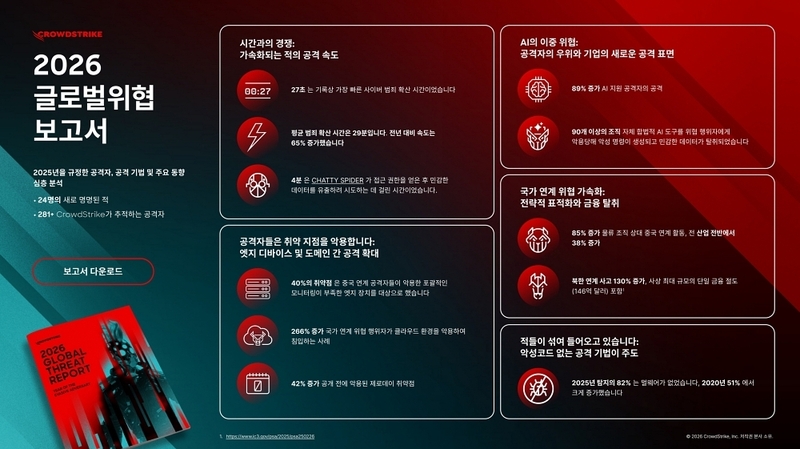

CrowdStrike's 2026 Global Threat Report reveals an 89% surge in AI-enabled cyberattacks, with criminals using generative AI tools to automate and accelerate breaches. Average breakout time dropped to 29 minutes in 2025, with some attacks taking just seconds, leading to rapid data theft and compromised enterprise systems. [AI generated]