The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

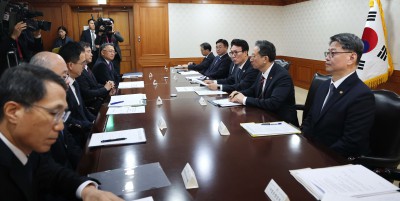

Ahead of local elections, South Korea's government, led by Prime Minister Kim Min-seok, is intensifying efforts to combat AI-generated fake news and misinformation. Authorities are coordinating across agencies to enforce strict legal responses, enhance detection, and raise public awareness to protect democratic processes from AI-enabled manipulation. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article discusses the use and potential misuse of AI systems to generate and spread fake news that can disrupt political and electoral order, which constitutes harm to communities and democratic processes. However, it does not report a specific incident in which AI-generated fake news has already caused harm; rather, it focuses on the risk and the government's planned responses. Therefore, this is best classified as an AI Hazard: the AI system's involvement could plausibly lead to harm, but no concrete incident is described. [AI generated]