The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

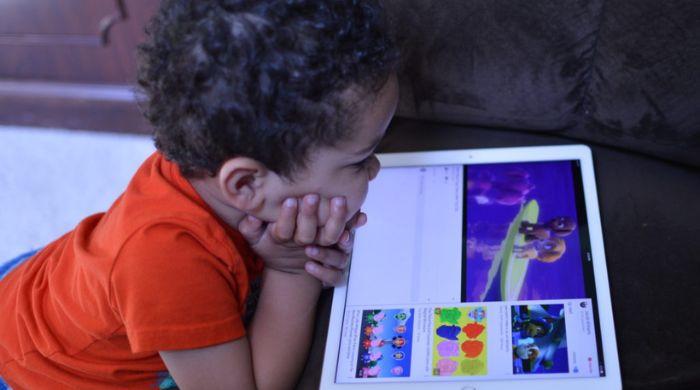

Investigations reveal YouTube's AI-driven recommendation system systematically promotes low-quality, misleading, and developmentally inappropriate AI-generated videos to children. These videos, often disguised as educational, feature distorted visuals and misinformation, raising concerns about cognitive and emotional harm to young viewers. YouTube has removed some content, but the issue persists. [AI generated]