The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

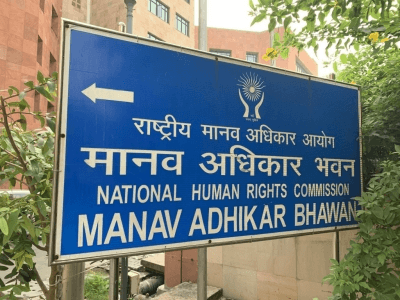

India's National Human Rights Commission has issued notices to government bodies following complaints about privacy risks in an AI-powered education initiative run by US-based Anthropic and the NGO Pratham. Because the AI system processes children's academic data, the complaints raise concerns that it may violate privacy and data protection requirements under India's Digital Personal Data Protection (DPDP) Act.[AI generated]