The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

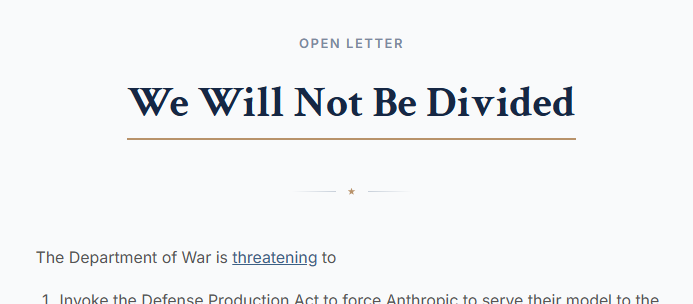

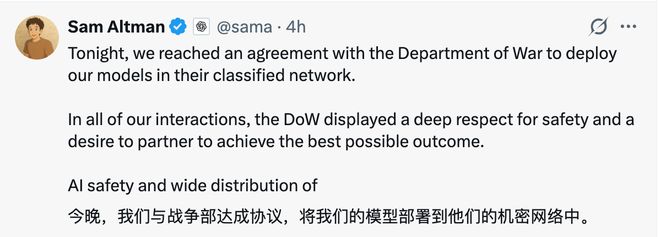

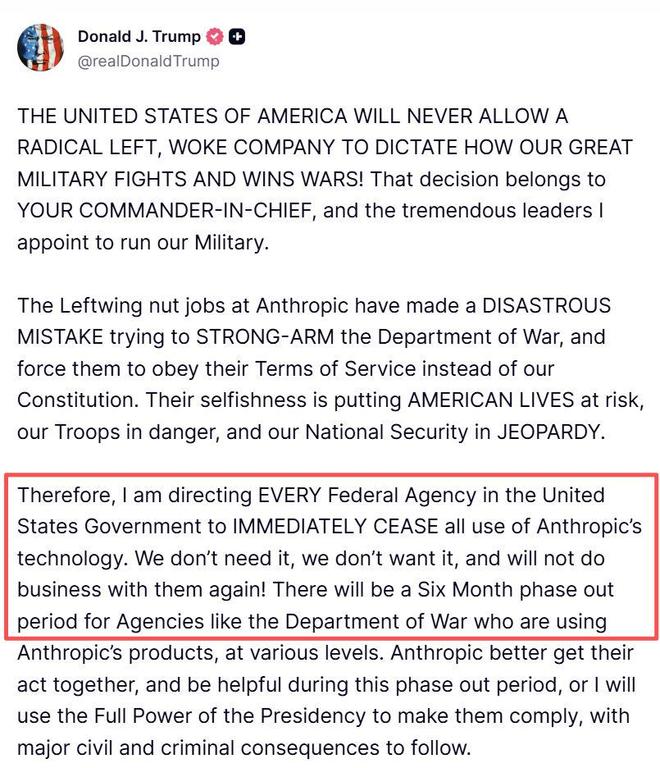

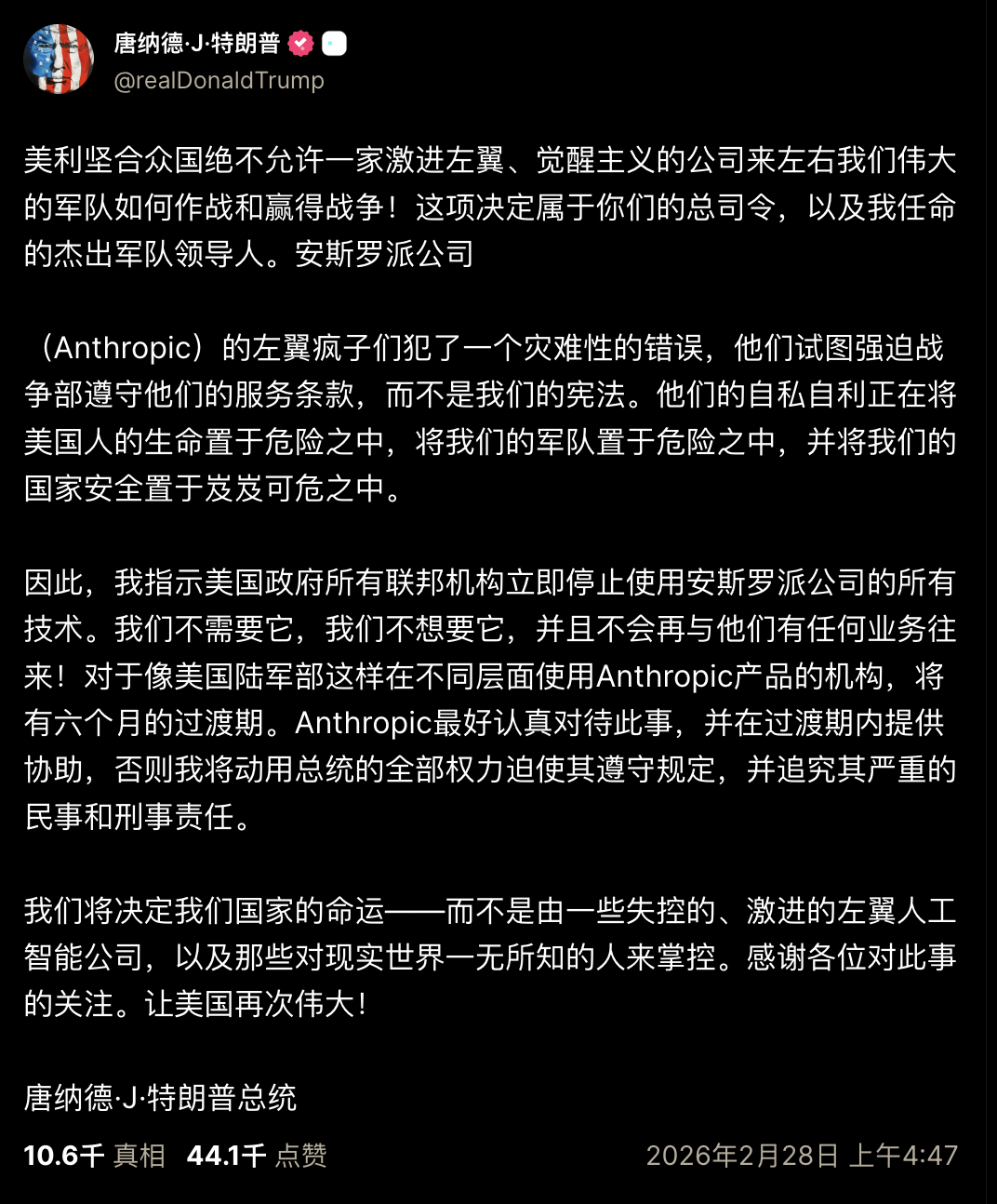

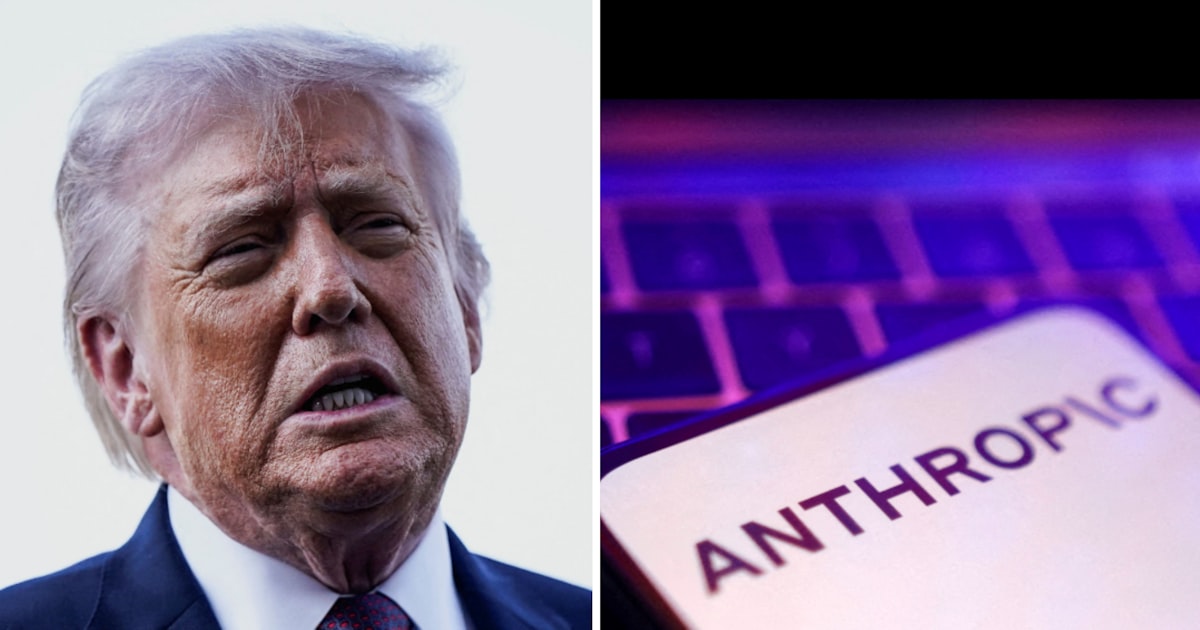

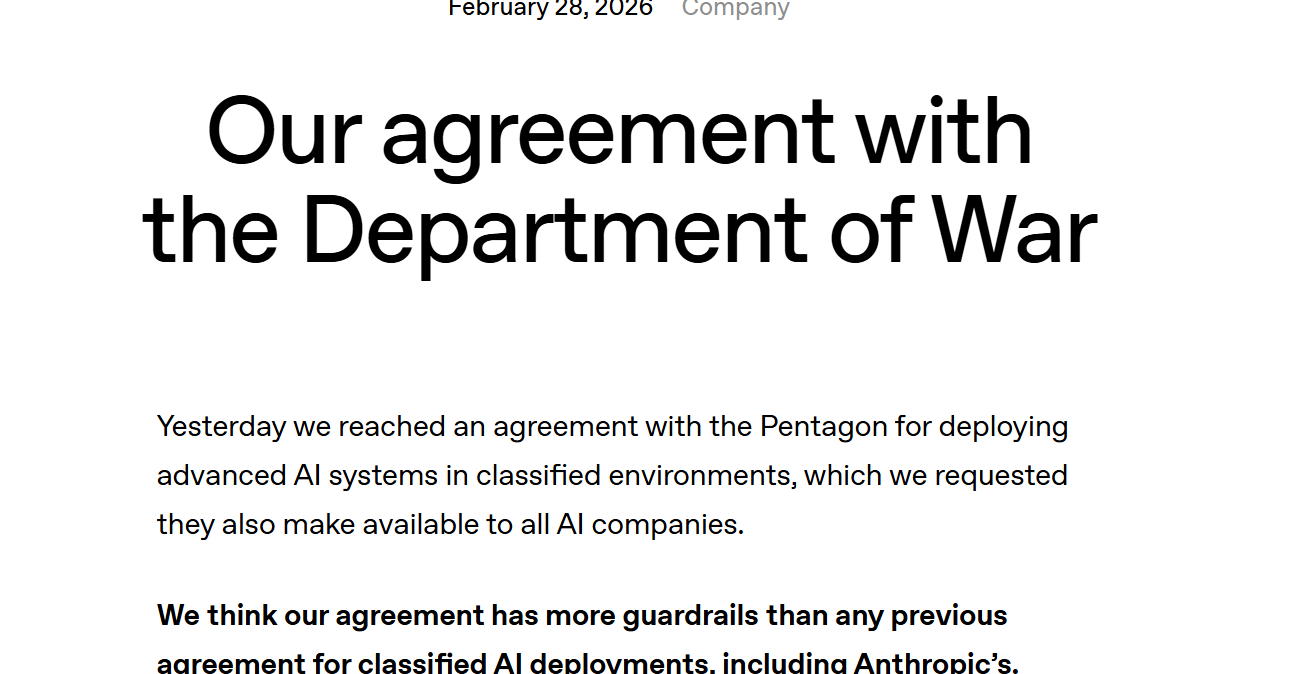

The US Department of Defense demanded unrestricted military use of Anthropic's AI, leading to a standoff over ethical constraints on autonomous weapons and surveillance. After Anthropic refused, the government banned its technology and partnered with OpenAI, which agreed to deploy its AI models in military networks with some safeguards. [AI generated]
