The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

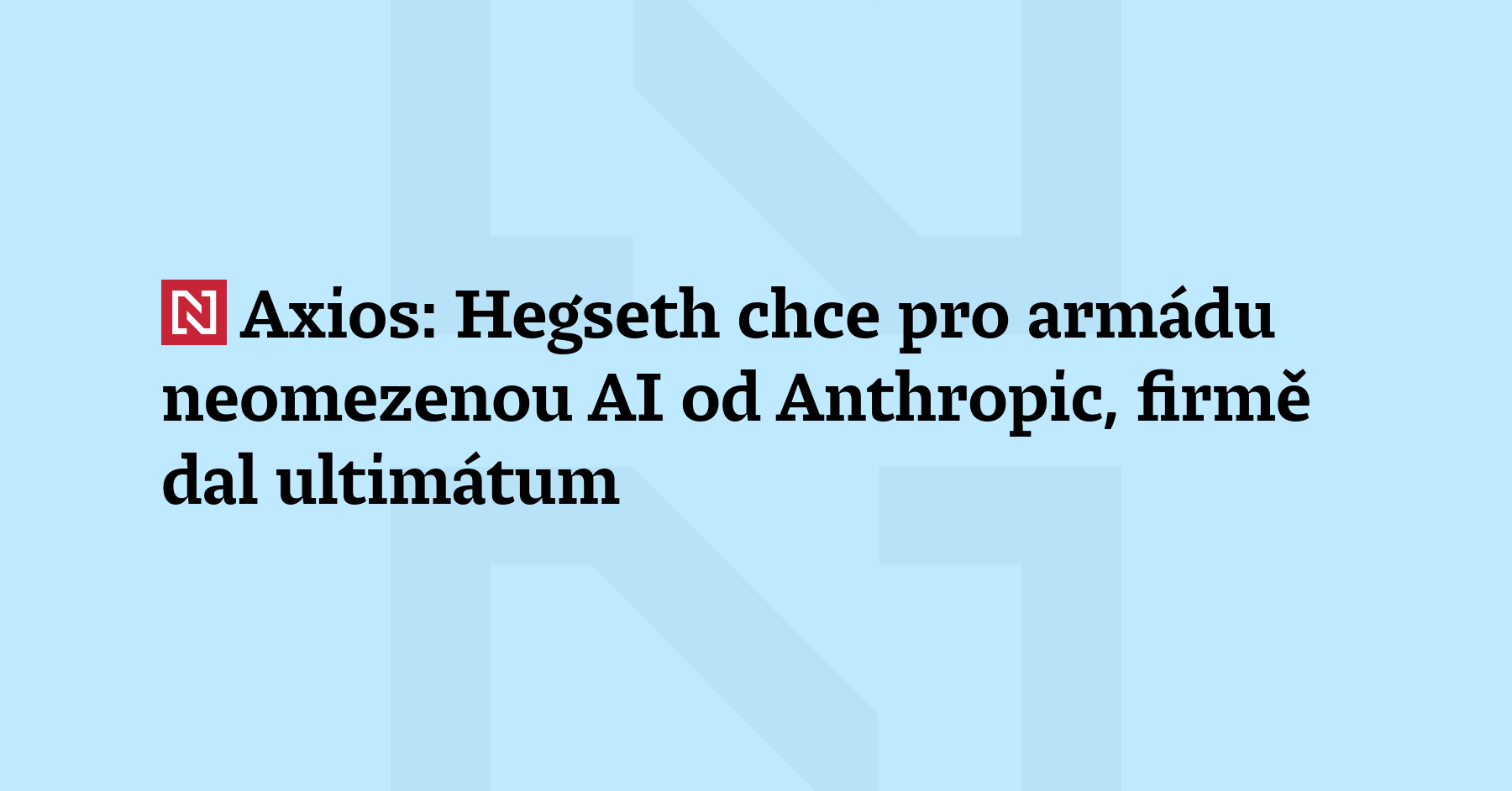

U.S. President Donald Trump ordered all federal agencies, including the Department of Defense, to immediately stop using Anthropic's AI technology due to concerns over its military applications and national security risks. The Pentagon has a six-month transition period to phase out the technology, following disputes over unrestricted military use. [AI generated]
