The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

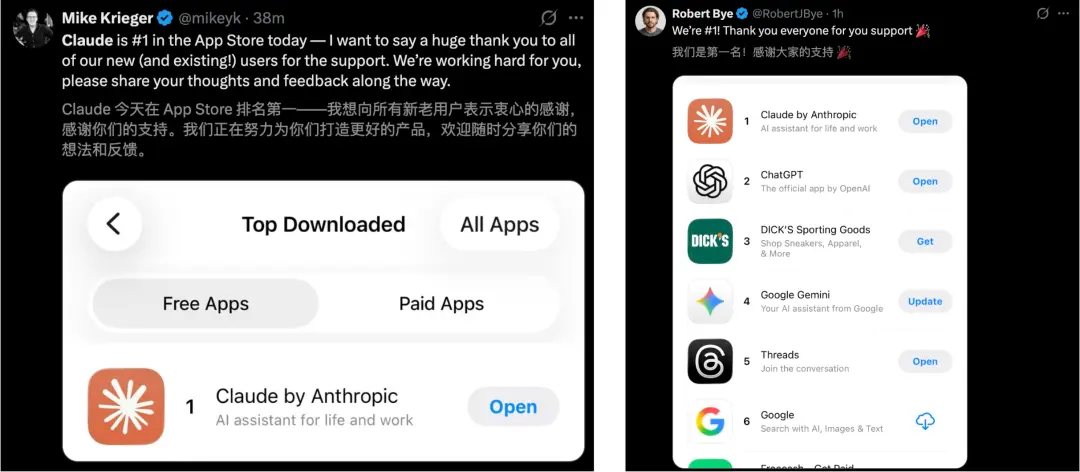

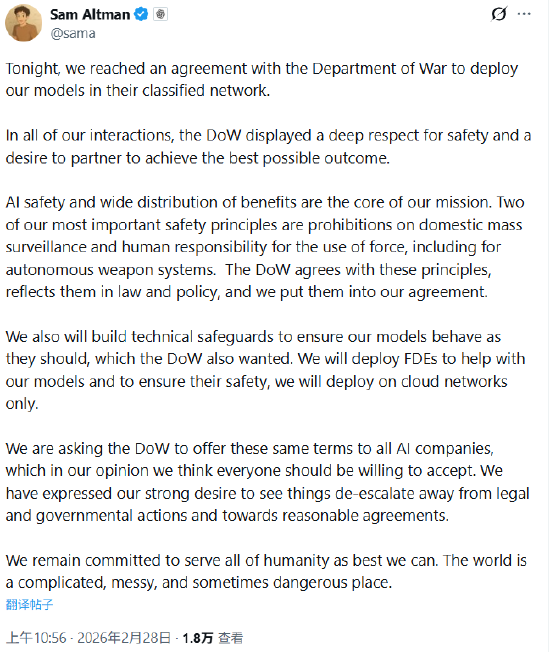

More than 200 Google and OpenAI employees signed an open letter opposing the use of advanced AI for military and surveillance purposes and calling for ethical boundaries and transparency. Meanwhile, OpenAI confirmed an agreement to deploy its models on U.S. Department of Defense classified networks, promising safeguards against misuse. [AI generated]