The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

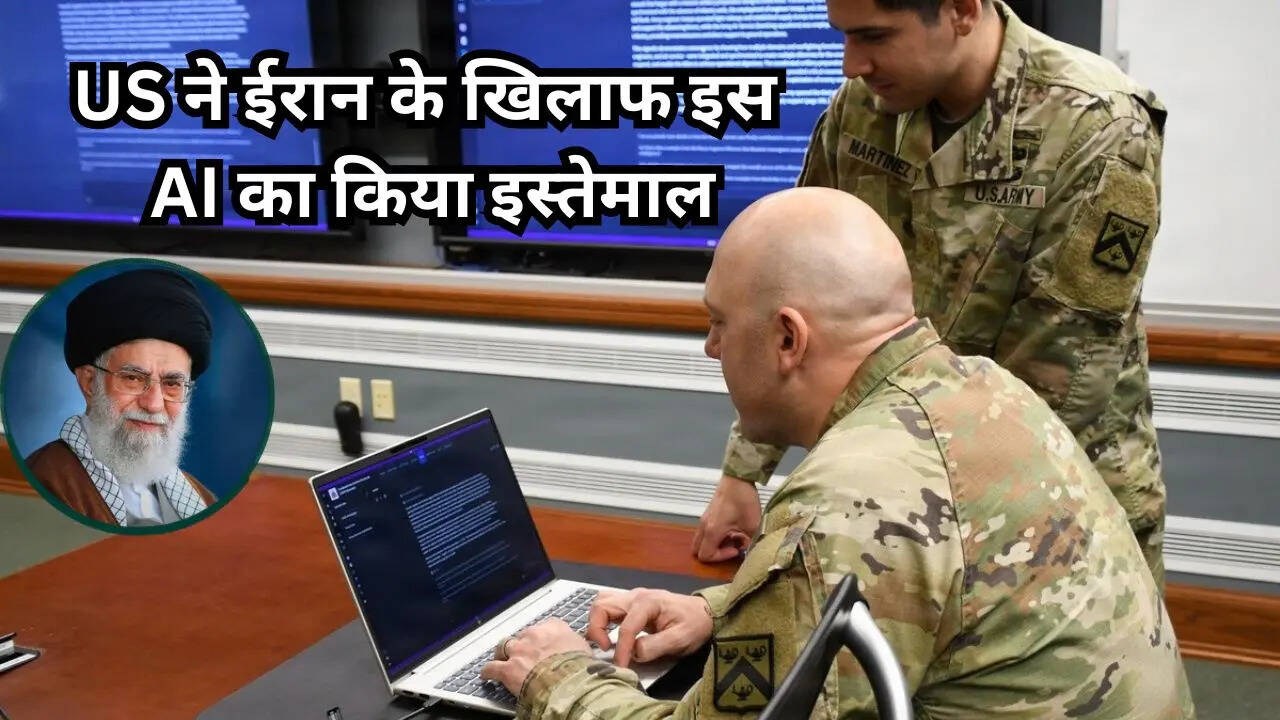

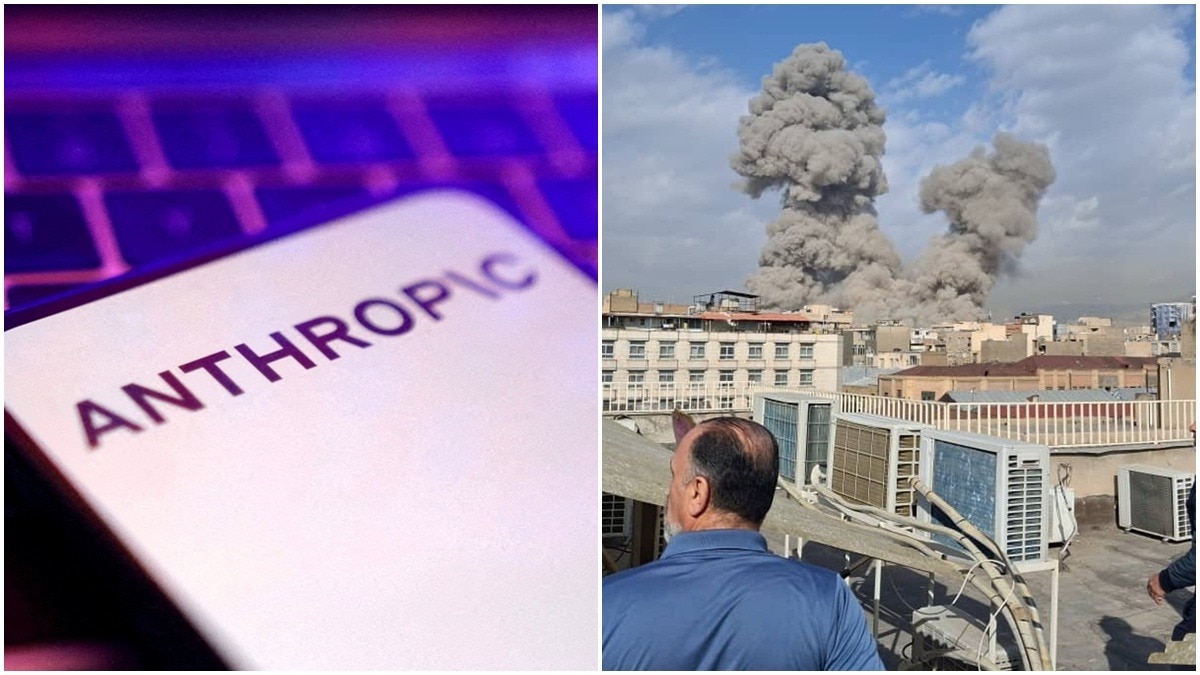

During Operation Epic Fury, the US military used Anthropic's AI services, including Claude tools, alongside B-2 bombers and drones in strikes against Iranian military infrastructure. The AI's specific role is unclear, but its deployment contributed to lethal operations causing significant harm in Iran. [AI generated]