The information displayed in the AI Incidents Monitor (AIM) should not be reported as representing the official views of the OECD or of its member countries.

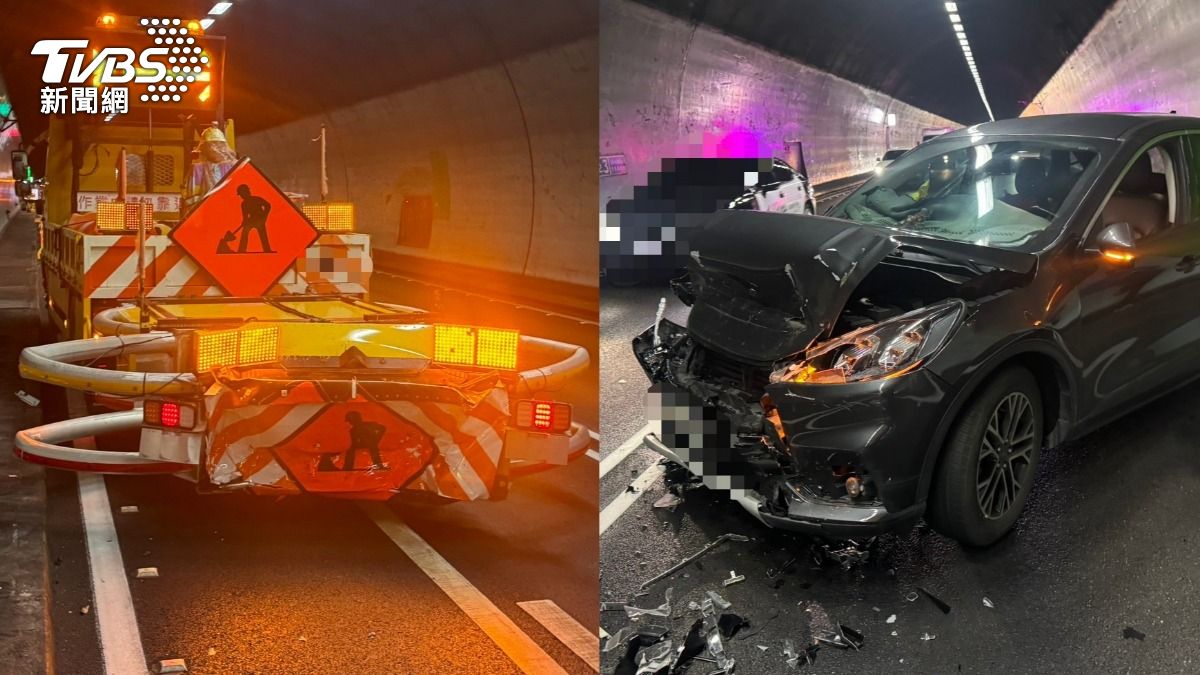

Multiple incidents in Taiwan and China involved the misuse or malfunction of AI-based driver assistance systems, such as automatic emergency braking (AEB) and autopilot features. These led to traffic accidents causing fatalities, injuries, and property damage. Courts held the drivers responsible because they had misunderstood the systems' limitations, a finding that highlights the risks of overreliance on AI in vehicles.[AI generated]