The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

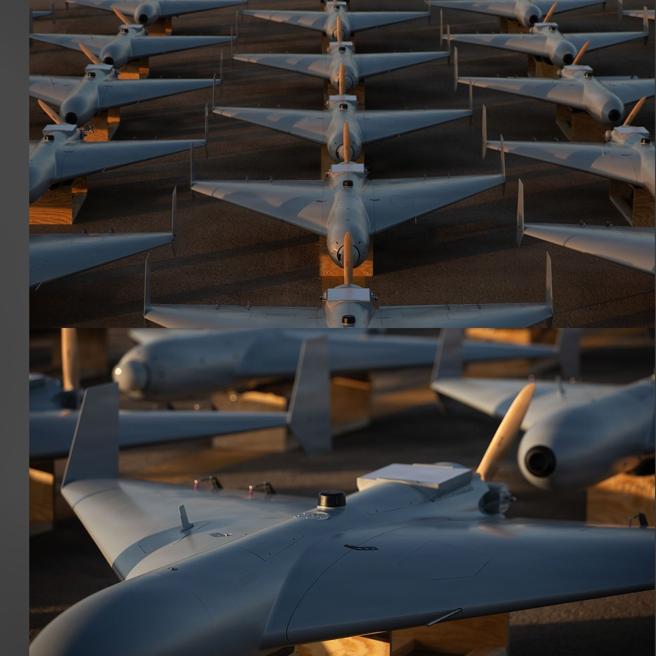

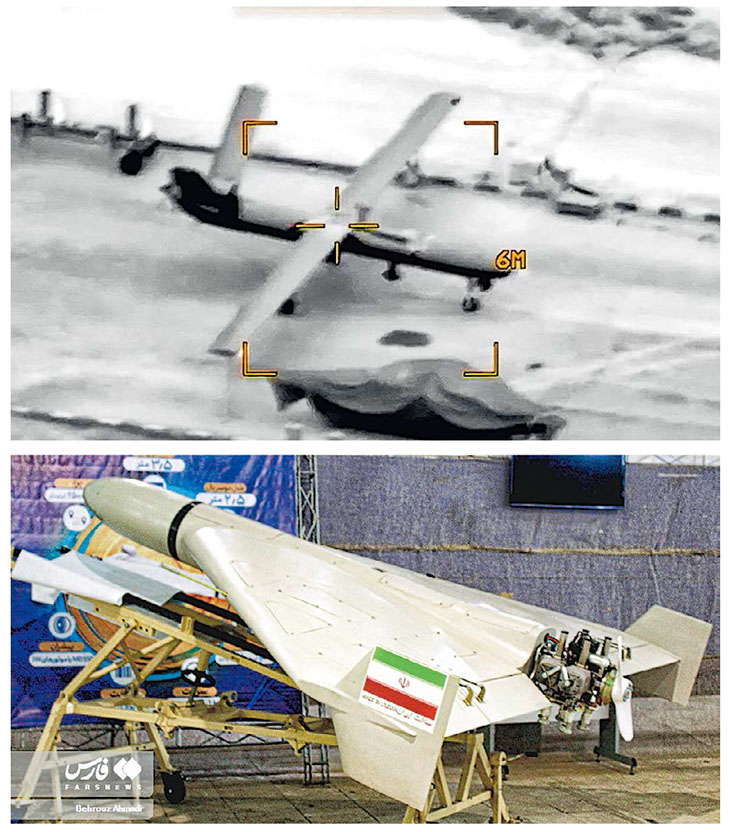

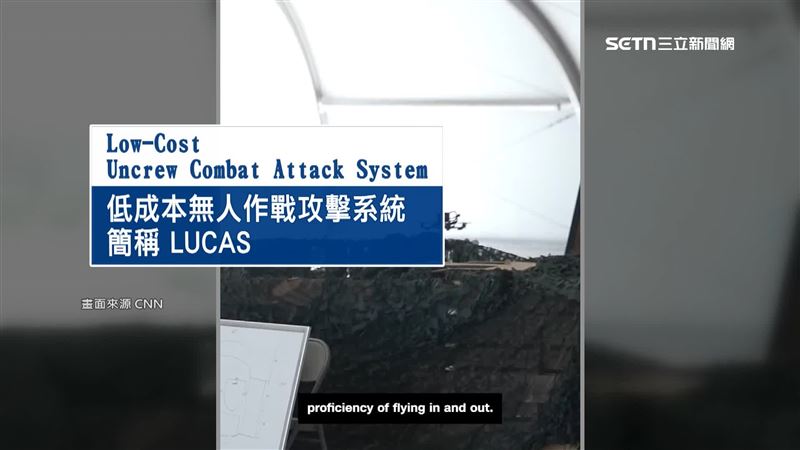

The US military, via its Task Force Scorpion Strike, deployed AI-enabled LUCAS suicide drones, reverse-engineered from Iran’s Shahed-136, in combat against Iranian targets. These autonomous, low-cost drones were used for the first time in large-scale strikes, demonstrating direct harm caused by AI systems in military operations. [AI generated]
